How to Extend a Video With AI (2026): Keep Motion and Style Consistent

TL;DR (3 steps)

- Extract and prepare the final frames of your original clip so the AI has a clean visual anchor.

- Use a continuation prompt with clear motion, camera, and style instructions.

- Run quality checks and iterate with small adjustments until motion and lighting feel natural.

Intro

AI video tools have made it easy to generate clips from scratch. Extending an existing video, however, is a different problem. You are no longer asking the model to “create something new.” You are asking it to continue motion, preserve identity, and stay consistent with what already exists.

That is where most workflows break. The model might change lighting, shift camera angles, or subtly alter the subject. The result looks almost right but is not usable. This is why extending a video with AI is less about creativity and more about control.

In this guide, I’ll walk through a practical workflow to extend a video with AI while keeping motion and style consistent. You’ll learn how to prepare your inputs, structure prompts, and evaluate outputs so the final result actually blends with your original footage.

If you are working with tools like Seedance 2.0, Kling 3.0, Veo 3, Sora, Runway, Pika, Luma, PixVerse, or Magic Hour, the principles are the same. The difference is how much control each tool gives you.

What you need (inputs/specs)

To extend a video with AI in a way that looks natural, you need more than just a clip and a prompt. Most low-quality outputs come from missing inputs or unclear references. Before you start, make sure you have the following ready:

- A source video (5-20 seconds works best). The last 1-2 seconds matter most because they guide the continuation.

- Extracted final frames (at least 2-5 still frames from the end of your clip).

- A clear understanding of motion direction (e.g., walking forward, camera panning left, zooming in).

- A defined visual style (cinematic, handheld, anime, product ad, etc.).

- Access to an AI video tool such as Seedance 2.0, Kling 3.0, Veo 3, Sora, Runway, Pika, Luma, PixVerse, or Magic Hour.

The key idea is simple: the AI is not “continuing your video” in a literal sense. It is generating a new sequence based on your last frames and instructions. That means your inputs must clearly communicate what should happen next.

Step-by-step: how to extend a video with AI

1. Trim and isolate the last moment of your clip

Start by trimming your video so the ending is clean and intentional. Avoid cuts that feel abrupt or include motion blur artifacts. The last second should clearly show:

- Subject position

- Camera angle

- Lighting condition

- Motion direction

If your clip ends mid-action (for example, someone turning their head), the AI will struggle to predict the continuation. Instead, try to end on a readable pose or moment.

A good ending frame acts like a “handoff” to the AI.

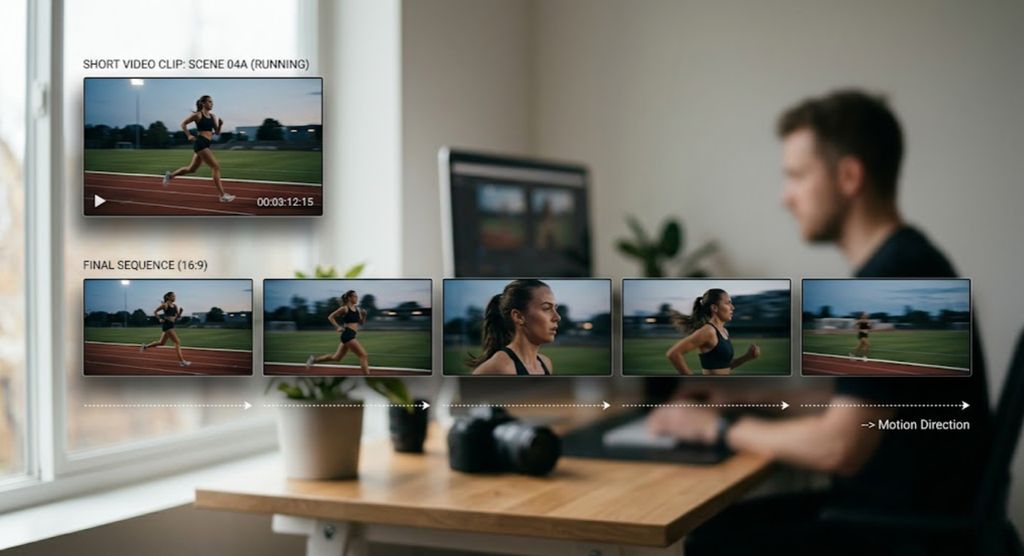

2. Extract final frames as visual references

Take 2-5 frames from the last second of your video. These will be used as reference images.

Why this matters:

- One frame is often too ambiguous

- Multiple frames help define motion trajectory

- They reduce flicker and identity drift

You can feed these into tools like Runway, Pika, or Magic Hour’s image-to-video pipeline to guide the continuation.

If you skip this step and rely only on text, you will often get style shifts, new characters, or inconsistent lighting.
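One way to pull those frames is with ffmpeg. The sketch below only builds the commands, so it runs even where ffmpeg is not installed. The file names (`clip.mp4`, `ref_01.png`), the default of 4 frames, and the 0.05-second margin before the end of the clip are illustrative assumptions, not requirements of any tool named in this guide.

```python
from typing import List


def final_frame_timestamps(duration_s: float, count: int = 4,
                           window_s: float = 1.0) -> List[float]:
    """Return `count` evenly spaced timestamps inside the last `window_s` seconds."""
    if count < 1:
        raise ValueError("count must be at least 1")
    start = max(0.0, duration_s - window_s)
    # Assumption: stop slightly before the end so the seek does not land
    # past the final frame of the clip.
    end = max(start, duration_s - 0.05)
    if count == 1:
        return [round(end, 3)]
    step = (end - start) / (count - 1)
    return [round(start + i * step, 3) for i in range(count)]


def ffmpeg_extract_commands(video_path: str, timestamps: List[float],
                            prefix: str = "ref") -> List[List[str]]:
    """Build one ffmpeg command per timestamp, each writing a single PNG frame."""
    commands = []
    for index, ts in enumerate(timestamps, start=1):
        commands.append([
            "ffmpeg", "-y",          # overwrite existing output files
            "-ss", f"{ts:.3f}",      # seek to the timestamp (seconds)
            "-i", video_path,
            "-frames:v", "1",        # write exactly one video frame
            f"{prefix}_{index:02d}.png",
        ])
    return commands


if __name__ == "__main__":
    # Hypothetical 10-second source clip.
    for cmd in ffmpeg_extract_commands("clip.mp4", final_frame_timestamps(10.0)):
        print(" ".join(cmd))
```

To actually extract the frames, each command can be passed to `subprocess.run(cmd, check=True)`. The clip duration has to come from your editor or a probe tool, since the sketch does not read the file itself.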

3. Define the continuation intent clearly

Before writing a prompt, decide what should happen next. Be specific.

Instead of:

“continue the video naturally”

Think in terms of:

- Motion: walking forward, turning, reaching, flowing

- Camera: tracking shot, dolly in, static, handheld shake

- Environment: same room, outdoor street, lighting unchanged

- Style: cinematic, realistic, animated, product-focused

Write this down in plain language first. This becomes your prompt foundation.

4. Write a continuity-focused prompt

A strong continuation prompt has three parts:

- Reference to the existing scene

- Description of motion and camera

- Constraints to preserve style

Here are example prompts you can use and adapt:

Example 1 (cinematic continuation):

“Continue the scene from the last frame. The subject keeps walking forward at the same pace. Camera follows smoothly from behind. Lighting remains soft and warm. Maintain cinematic depth of field and consistent character appearance.”

Example 2 (product shot):

“Extend the shot of the product rotating slowly on the table. Keep the same lighting and reflections. Camera continues a slow clockwise orbit. Background remains minimal and clean.”

Example 3 (social content):

“Continue the clip with the person turning slightly toward the camera and smiling. Keep handheld camera feel and natural lighting. Maintain consistent face and clothing.”

You can run these prompts through tools like Kling 3.0, Veo 3, or Sora depending on access. Each model interprets continuity differently, so expect variation.
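If you reuse the same three-part structure across many clips, it can help to assemble the prompt from named fields so that only one part changes between attempts. The helper below is a minimal sketch of that idea. The function name and its parameters are hypothetical and not part of any tool's API; the output is plain text you would paste into whichever tool you use.

```python
from typing import Sequence


def _sentence(text: str) -> str:
    """Normalize a fragment into a capitalized sentence ending with a period."""
    text = text.strip().rstrip(".")
    return (text[0].upper() + text[1:] + ".") if text else ""


def build_continuation_prompt(scene_reference: str, motion: str, camera: str,
                              constraints: Sequence[str]) -> str:
    """Join scene reference, motion, camera, and style constraints into one prompt."""
    parts = [_sentence(scene_reference), _sentence(motion), _sentence(camera)]
    if constraints:
        # All style constraints go into a single "Maintain ..." sentence.
        parts.append(_sentence("Maintain " + ", ".join(c.strip() for c in constraints)))
    return " ".join(p for p in parts if p)


if __name__ == "__main__":
    print(build_continuation_prompt(
        scene_reference="Continue the scene from the last frame",
        motion="the subject keeps walking forward at the same pace",
        camera="camera follows smoothly from behind",
        constraints=["soft warm lighting", "consistent character appearance"],
    ))
```

Keeping the fields separate also makes later refinement easier: you can change only `motion` or only `camera` and leave the rest of the prompt untouched.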

5. Choose the right generation mode

Different tools support different workflows:

- Video-to-video: best for direct continuation with strong consistency

- Image-to-video: best when using extracted frames as anchors

- Text-to-video: best for creative extensions but less consistent

For example, with Magic Hour, you can start with video-to-video for structure, then refine with text-to-video for creative variations.

The choice depends on how strict you need the continuation to be.

6. Generate multiple variations

Never rely on a single output. Generate 3-5 versions at a minimum.

Why this matters:

- AI outputs are probabilistic

- Small prompt changes can produce very different motion

- One version usually stands out as “natural”

Label your outputs and compare:

- Motion smoothness

- Character consistency

- Lighting continuity

- Camera logic

Pick the best one and iterate from there.
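A simple scorecard keeps this comparison honest when you have several outputs open at once. The sketch below assumes you rate each variation by eye from 1 to 5 on the four criteria above. The ranking rule (weakest criterion first, total score as tie-breaker) is my own assumption, chosen because a single bad criterion usually ruins the blend; adjust it to taste.

```python
from dataclasses import dataclass
from typing import Dict, List

CRITERIA = ("motion_smoothness", "character_consistency",
            "lighting_continuity", "camera_logic")


@dataclass
class Variation:
    label: str
    scores: Dict[str, int]  # criterion -> 1..5 rating from a human reviewer


def rank_variations(variations: List[Variation]) -> List[Variation]:
    """Sort best-first: highest weakest-criterion score, then highest total."""
    def key(v: Variation):
        values = [v.scores[c] for c in CRITERIA]
        return (min(values), sum(values))
    return sorted(variations, key=key, reverse=True)


if __name__ == "__main__":
    # Hypothetical ratings for three generated versions.
    candidates = [
        Variation("A", dict(zip(CRITERIA, (5, 5, 2, 5)))),
        Variation("B", dict(zip(CRITERIA, (4, 4, 4, 4)))),
        Variation("C", dict(zip(CRITERIA, (4, 3, 4, 4)))),
    ]
    for v in rank_variations(candidates):
        print(v.label, v.scores)
```

In this example, version A has the highest total but a lighting score of 2, so it ranks last.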

7. Refine with small prompt adjustments

Avoid rewriting the entire prompt. Instead, tweak specific elements:

- “slower movement”

- “more stable camera”

- “stronger match to original lighting”

This keeps the model anchored while improving specific issues.

8. Stitch and finalize

Once you have a good continuation:

- Trim the AI clip to remove unstable frames at the start

- Align it with your original video

- Add slight motion blur or color grading if needed

This helps hide minor inconsistencies and makes the transition seamless.
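If you prefer the command line to an editor, the trim and join steps can be sketched with ffmpeg. As before, the code only builds the commands. The file names, the count of 6 unstable frames, and the 24 fps frame rate are assumptions for illustration. The second command uses ffmpeg's concat demuxer with stream copy, which generally only works when both clips share the same codec, resolution, and frame rate; otherwise you would need to re-encode.

```python
from typing import List, Sequence


def trim_start_seconds(unstable_frames: int, fps: float) -> float:
    """Convert a number of leading frames to drop into a seek offset in seconds."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return unstable_frames / fps


def concat_list_text(paths: Sequence[str]) -> str:
    """Build the text of a concat-demuxer list file, one `file '...'` line per clip.

    Assumption: the paths contain no single quotes.
    """
    return "".join(f"file '{path}'\n" for path in paths)


def stitch_commands(ai_clip: str, unstable_frames: int, fps: float,
                    trimmed: str = "ai_trimmed.mp4",
                    list_file: str = "concat.txt",
                    output: str = "extended.mp4") -> List[List[str]]:
    """Return two commands: trim the AI clip, then join the clips in list_file."""
    trim = trim_start_seconds(unstable_frames, fps)
    return [
        # Re-encode while trimming so the cut does not depend on keyframes.
        ["ffmpeg", "-y", "-ss", f"{trim:.3f}", "-i", ai_clip, trimmed],
        # Join the clips named in the list file without re-encoding.
        ["ffmpeg", "-y", "-f", "concat", "-safe", "0",
         "-i", list_file, "-c", "copy", output],
    ]


if __name__ == "__main__":
    print(concat_list_text(["original.mp4", "ai_trimmed.mp4"]), end="")
    for cmd in stitch_commands("ai_clip.mp4", unstable_frames=6, fps=24.0):
        print(" ".join(cmd))
```

Write the output of `concat_list_text` to `concat.txt` before running the second command. Motion blur and color grading are still easier to judge in an editor, so treat this as a rough assembly pass.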

Common mistakes and how to fix them

Inconsistent character or subject

This happens when the model loses identity between frames.

Fix:

- Use more reference frames

- Add “maintain same character appearance” to the prompt

- Use video-to-video instead of text-only generation

Sudden lighting changes

The AI may shift from warm to cool lighting or change exposure.

Fix:

- Explicitly mention lighting in the prompt

- Use frames with stable lighting as references

- Avoid clips with mixed lighting at the end

Unnatural motion

The continuation may feel floaty or disconnected.

Fix:

- Be specific about motion direction

- Use phrases like “continues smoothly” or “same speed”

- Avoid vague prompts like “continue naturally”

Camera inconsistency

The camera may jump angles or switch styles.

Fix:

- Define camera behavior clearly

- Example: “static camera”, “slow dolly forward”, “handheld slight shake”

Style drift

The output looks like a different video.

Fix:

- Reinforce style in the prompt

- Use image references

- Avoid mixing too many stylistic keywords

“Good result” checklist

Before you finalize your extended video, check the following:

- Motion flows naturally from the original clip

- No sudden jumps in position or direction

- Lighting and color remain consistent

- Subject identity is stable

- Camera movement makes sense

- No flickering or visual artifacts in key frames

If at least 5 out of 6 are satisfied, your result is usually usable. If not, go back and adjust your prompt or references.
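If you review many clips, the 5-out-of-6 rule is easy to encode so every reviewer applies it the same way. This is a minimal sketch: the key names are my own shorthand for the six checks above, and the pass or fail values still come from a person watching the clip.

```python
from typing import Dict, List, Tuple

CHECKLIST = (
    "motion_flows_naturally",
    "no_sudden_jumps",
    "lighting_and_color_consistent",
    "subject_identity_stable",
    "camera_movement_makes_sense",
    "no_flicker_or_artifacts",
)


def review(results: Dict[str, bool], required: int = 5) -> Tuple[bool, List[str]]:
    """Return (usable, failed_checks). Checks missing from `results` count as failed."""
    failed = [item for item in CHECKLIST if not results.get(item, False)]
    passed = len(CHECKLIST) - len(failed)
    return passed >= required, failed


if __name__ == "__main__":
    # Hypothetical review: everything passes except flicker.
    results = {item: True for item in CHECKLIST}
    results["no_flicker_or_artifacts"] = False
    usable, failed = review(results)
    print("usable:", usable, "| fix next:", failed)
```

The list of failed checks tells you which part of the prompt or which reference frames to adjust on the next attempt.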

Variations you should try

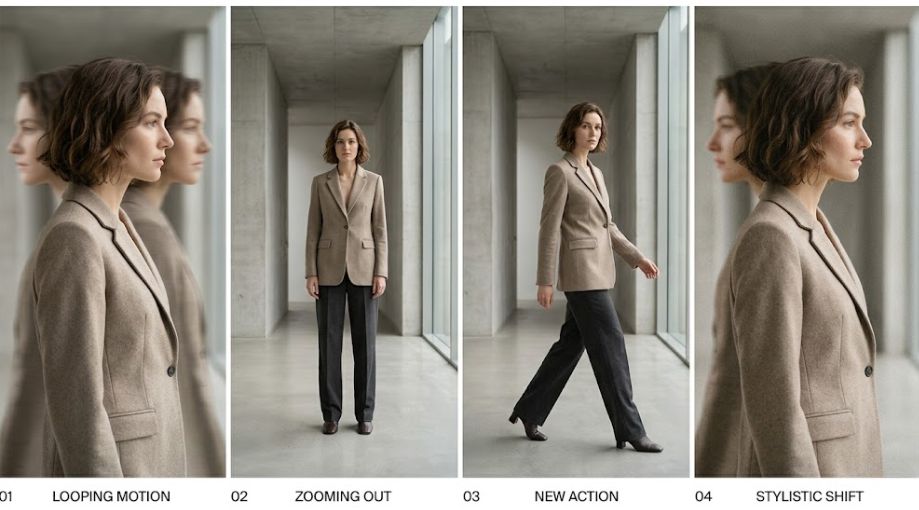

Once you understand the basic continuation workflow, the next step is to experiment with variations. These are not just creative ideas. They are practical ways to get more value from the same source clip and test how different models handle continuity.

1. Loop extension (for seamless playback)

Instead of extending your video forward, you generate a continuation that loops back to the beginning. This is especially useful for ads, landing pages, or social posts where the video auto-plays.

How it works:

- Identify the first frame and last frame of your clip

- Generate a continuation that visually transitions back to the starting state

- Trim both ends so the loop feels continuous

What to watch for:

- Motion direction must “close the loop” logically

- Lighting and camera position need to match both ends

- Avoid sudden speed changes at the transition point

In practice, this often requires a few iterations. You are not just extending time. You are aligning two endpoints so they feel like one continuous cycle.

2. Scene expansion (from tight shot to wider context)

This variation focuses on expanding the visual scope of your scene rather than just extending time. For example, you start with a close-up and extend into a wider shot that reveals more of the environment.

How it works:

- Use the last frame as a base

- Prompt the model to gradually pull back or widen the frame

- Keep subject position stable while revealing new context

Example prompt direction:

“Continue the scene by slowly pulling the camera back to reveal more of the environment. Keep the subject centered and maintain the same lighting and style.”

What to watch for:

- Background generation can introduce inconsistencies

- Perspective shifts must feel natural

- The subject should not drift or resize unnaturally

This works best in models that handle spatial consistency well, such as Veo 3 or Sora, but you can still get usable results in tools like Runway or Magic Hour with careful prompting.

3. Style-preserving continuation (same motion, stronger polish)

In this variation, you are not changing the scene or action. You are extending the clip while reinforcing visual quality or stylistic consistency.

How it works:

- Keep motion and composition almost identical

- Add constraints like “same cinematic lighting,” “consistent color grading,” or “same lens style”

- Focus on stabilizing the look rather than changing it

Example prompt direction:

“Continue the shot with the same motion and framing. Maintain cinematic lighting, consistent color grading, and realistic texture details.”

What to watch for:

- Over-specifying style can sometimes reduce motion quality

- Some models may prioritize aesthetics over continuity

This variation is useful when your original clip is already strong and you just need more duration without visual drift.

4. Narrative continuation (adding a new action)

This is the most complex variation. Instead of simply continuing motion, you introduce a new action or story beat.

How it works:

- Start with a stable end frame

- Define a clear next action (e.g., turning, picking up an object, interacting with something)

- Guide the transition from existing motion into the new action

Example prompt direction:

“Continue the scene as the subject slows down, turns toward the camera, and reaches out to pick up an object. Keep lighting and camera style consistent.”

What to watch for:

- Timing often feels unnatural on the first attempt

- The transition between old and new motion can look abrupt

- Requires more iterations than simple continuation

This variation is powerful for storytelling, especially in ads or short-form content, but it demands more control and patience.

5. Multi-pass extension (chaining short generations)

Instead of generating a long continuation in one go, you extend the video in small steps and chain them together.

How it works:

- Extend the video by 2-3 seconds

- Take the new ending frames

- Use them as input for the next extension

Why this works:

- Short generations are more stable

- You can correct issues at each step

- Better control over motion and consistency

What to watch for:

- Small errors can accumulate over multiple passes

- You may need to re-anchor with original frames occasionally

This is one of the most reliable approaches if you need longer sequences without major quality drops.
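Planning the chain up front makes it easier to see how many passes you need and where to re-anchor. The sketch below is only a planner: it does not call any video tool. The 3-second step and the choice to re-anchor with original frames every third pass are assumptions you should tune for your tool and footage.

```python
import math
from typing import Dict, List


def plan_passes(target_extra_s: float, step_s: float = 3.0,
                reanchor_every: int = 3) -> List[Dict]:
    """Split a target extension length into short passes.

    Each entry records the pass number, its length in seconds, and whether
    to add frames from the original clip as references on that pass.
    """
    if target_extra_s <= 0 or step_s <= 0:
        raise ValueError("target_extra_s and step_s must be positive")
    passes = math.ceil(target_extra_s / step_s)
    plan = []
    remaining = target_extra_s
    for number in range(1, passes + 1):
        length = min(step_s, remaining)  # the last pass may be shorter
        plan.append({
            "pass": number,
            "seconds": round(length, 3),
            "reanchor_with_original": reanchor_every > 0
                                      and number % reanchor_every == 0,
        })
        remaining -= length
    return plan


if __name__ == "__main__":
    # Hypothetical goal: add 10 seconds in 3-second steps.
    for entry in plan_passes(10, step_s=3.0, reanchor_every=3):
        print(entry)
```

For each pass, you would generate the extension, extract its final frames, review it against the checklist, and only then move to the next entry in the plan.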

How this fits into modern AI video workflows

Extending a video with AI is no longer a niche trick. It is becoming a core step in how creators and teams actually produce content. Instead of generating entire videos from scratch, the workflow is shifting toward capturing a strong base clip and then using AI to scale it.

In practice, the workflow looks more like this:

- Shoot or source a short, high-quality clip (5-10 seconds)

- Extend it into multiple variations using AI

- Test different versions for performance or storytelling

- Iterate based on what works

This approach solves a real constraint: time and cost. Shooting multiple takes, locations, or variations is expensive. Extending a single clip with AI gives you more outputs from the same input.

What I’ve seen after testing tools like Runway, Pika, Luma, PixVerse, and Magic Hour is that continuation is often more reliable than full generation. When you start from an existing clip, you give the model structure. That structure reduces randomness and makes outputs easier to control.

Another important shift is how teams are using continuation for iteration, not just creation. For example:

- Marketing teams extend the same product shot into multiple hooks for ads

- Creators generate longer sequences from short viral clips

- Editors prototype scenes before committing to full production

This is why continuation sits right next to prompting in modern workflows. If prompting is about telling the model what to generate, continuation is about anchoring it to something real.

If you look at broader tooling, platforms like Magic Hour are combining video-to-video, image-to-video, and text-to-video into one pipeline. This lets you move from structured continuation to more creative exploration without switching tools.

Market trends shaping AI video continuation

The way video extension works today is heavily influenced by a few larger trends in AI video. These trends explain why some workflows feel stable while others still break.

1. From generation to control

Early AI video tools focused on generating clips from text prompts. The results looked impressive but were hard to use in real projects because they lacked consistency.

Now the focus is shifting toward control:

- Better handling of motion across frames

- More stable character identity

- Stronger alignment with input references

This is why continuation workflows are gaining traction. They give you partial control by anchoring the model to existing footage.

2. Multi-modal pipelines are becoming standard

Instead of choosing between text-to-video or image-to-video, most serious workflows now combine them.

A typical pipeline might look like:

- Extract frames (image input)

- Extend motion (video-to-video)

- Refine with prompts (text input)

This flexibility is important because no single mode solves everything. Continuation often works best when you layer them.

3. Short-form content is driving use cases

Most demand for video extension comes from short-form formats:

- TikTok-style videos

- Product ads

- Social media loops

These formats benefit directly from continuation because:

- You can extend runtime without reshooting

- You can test multiple variations quickly

- You can adapt one clip for different platforms

This is also why most tools perform best with short extensions (a few seconds at a time). The models are optimized for these use cases.

4. Model competition is accelerating quality

Models like Sora, Veo 3, Kling 3.0, and Seedance 2.0 are pushing improvements in:

- Temporal consistency (less flicker)

- Physics and motion realism

- Scene coherence over longer durations

At the same time, tools like Runway, Pika, Luma, and PixVerse are focusing on accessibility and speed.

The result is a split:

- High-end models aim for realism and longer sequences

- Creator tools focus on iteration speed and usability

Understanding this helps you choose the right tool depending on whether you need quality or speed.

5. Iteration speed is becoming the real advantage

The biggest shift is not just output quality. It is how fast you can iterate.

Teams that get the most value from AI video are not the ones generating perfect clips on the first try. They are the ones who:

- Generate multiple variations quickly

- Identify what works

- Refine prompts and inputs in small steps

Continuation workflows fit perfectly into this because they are modular. You can extend, evaluate, and adjust without starting over.

6. What to watch next

A few developments will likely improve video extension workflows in the near future:

- Longer stable generations without chaining

- Better subject tracking across frames

- More precise camera control (lens, movement, depth)

- Integration with traditional editing tools

As these improve, the line between “editing” and “generation” will continue to blur.

FAQs

What does “extend video with AI” actually mean?

It means generating new frames that continue your existing clip using AI models. The model predicts what should happen next based on your input frames and prompt.

Which tool is best for video continuation?

It depends on your needs. Tools like Runway, Pika, and Luma are accessible and fast. Models like Sora, Veo 3, and Kling 3.0 aim for higher realism. Magic Hour is useful when you want flexible workflows combining video-to-video and text-to-video.

How long can I extend a video?

Most tools work best with short extensions (2-5 seconds at a time). You can chain multiple generations, but quality may degrade with each pass as small errors accumulate.

Why does the subject sometimes change?

This happens when the model lacks strong reference signals. Using multiple frames and clear prompts reduces this issue.

Can I extend any type of video?

Yes, but results vary. Simple scenes with clear motion and stable lighting work best. Complex scenes with multiple subjects are harder to extend cleanly.

Is this suitable for professional editing?

Yes, especially for ads, social content, and prototypes. For high-end production, you will still need manual editing and post-processing.

How will this improve in the future?

Models are getting better at temporal consistency and control. Expect longer, more stable continuations and better integration with editing tools.