How to Keep Characters Consistent in AI Video (2026): References, Prompts, and Practical Fixes

TL;DR

- Choose the right reference method first. Most character consistency problems come from using the wrong input type, not from weak prompts.

- Combine reference images with controlled prompts. One reference image is rarely enough if the scene changes.

- When drift happens, adjust the reference structure before rewriting the prompt.

Introduction

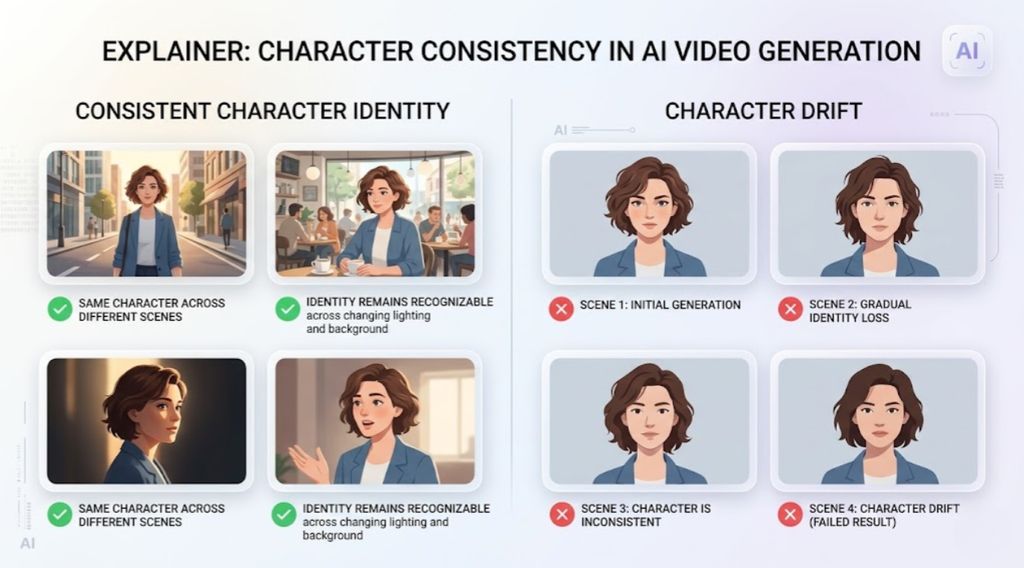

Character consistency is one of the hardest problems in AI video generation. Even when the prompt clearly describes the same person, many models will subtly change facial features, hairstyle, or proportions between shots. This effect, often called character drift, becomes more noticeable as clips get longer or when scenes change.

The problem does not come from a single tool. It appears across most major models, including Seedance 2.0, Kling 3.0, Veo 3, Sora, Runway, Pika, Luma, PixVerse, and Magic Hour. Each system approaches reference conditioning differently, but the underlying challenge is the same. The model re-synthesizes the character from the prompt and reference signals every time it generates. It does not remember a stable identity the way a human editor would.

For creators, marketers, and brand teams, this limitation quickly becomes a workflow problem. If a character changes slightly in every scene, the final video looks inconsistent and unprofessional. A marketing mascot might suddenly have a different jawline. A storytelling character might appear to be played by a different actor in the next shot.

The good news is that character drift is usually manageable once you understand how models interpret prompts and references. Most consistency issues come from using the wrong reference method, changing prompts too aggressively, or introducing scene variations that the model cannot reconcile.

This tutorial breaks down a practical workflow for maintaining character consistency in AI video. It explains when to use reference images, how to structure prompts so identity remains stable, and what to do when drift appears anyway. Instead of promising perfect identity preservation, the focus here is on repeatable techniques that significantly reduce variation across clips.

What you need

Before trying to keep characters consistent in AI video, it helps to understand what the models actually use as signals. Most modern generators, including the tools named above, combine three types of input: visual references, text prompts, and motion context. When one of these inputs changes too much, the character often drifts.

Perfect identity preservation is still difficult across all models. The realistic target is to reduce character drift enough that viewers perceive the same character across shots.

To follow the workflow in this tutorial, you typically need the following inputs.

Reference images

These are the most important element for keeping a character stable. A single portrait often works for short clips, but longer sequences benefit from multiple angles or expressions.

Prompt structure

Your prompt should describe the character consistently. If the wording changes significantly between clips, many models will reinterpret the identity.

Scene context

Background, lighting, and camera movement influence how the model reconstructs the face. If these change dramatically, the model may generate a different version of the character.

A video generation tool that supports reference workflows

Many tools now support some form of reference image conditioning or character consistency. If you want a straightforward interface for this workflow, you can generate clips with the Magic Hour AI video generator.

Export format and duration planning

Shorter clips are easier to keep consistent. If you plan a longer narrative, it is often better to generate multiple clips and stitch them together.

Step-by-step workflow to keep characters consistent in AI video

Step 1: Decide which reference method to use

Before generating anything, you should decide how the character reference will be provided to the model. Different methods work better in different situations.

The three most common methods are single reference images, multi-angle reference sets, and previous video frames.

A simple way to decide is to follow this decision tree.

If you only need a short clip of one character with minimal camera movement, start with a single reference image. Many tools will maintain identity for several seconds if the scene remains simple.

If the character appears in multiple shots or expressions, use a small set of reference images. Two to four angles of the same character help the model maintain a more stable identity.

If you are extending an existing clip or creating sequential shots, using frames from the previous video as references usually produces the most stable results.
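The decision tree above is small enough to write down explicitly. The sketch below is one possible encoding. The function and enum names are hypothetical. Checking sequential shots first is an assumed priority, based on previous frames usually giving the most stable results; it is not a rule from any specific tool.

```python
from enum import Enum


class ReferenceMethod(Enum):
    SINGLE_IMAGE = "single reference image"
    REFERENCE_SET = "two to four reference images from different angles"
    PREVIOUS_FRAMES = "frames exported from the previous clip"


def choose_reference_method(
    extends_existing_clip: bool,
    multiple_shots_or_expressions: bool,
) -> ReferenceMethod:
    """Hypothetical encoding of the Step 1 decision tree."""
    # Sequential shots are checked first: reusing frames from the previous
    # clip usually gives the most stable identity (assumed priority).
    if extends_existing_clip:
        return ReferenceMethod.PREVIOUS_FRAMES
    # Several shots or expressions: give the model more than one view.
    if multiple_shots_or_expressions:
        return ReferenceMethod.REFERENCE_SET
    # Short clip, one character, minimal camera movement.
    return ReferenceMethod.SINGLE_IMAGE


print(choose_reference_method(extends_existing_clip=False,
                              multiple_shots_or_expressions=True).value)
# prints "two to four reference images from different angles"
```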

In practice, most creators combine these approaches. A base portrait defines the character, and additional frames maintain continuity between clips.

For workflows that start from text prompts, you can generate the initial clip with a text-to-video tool. Once the character exists visually, export a frame from that clip and use it as the reference for later shots.

Step 2: Build a stable character description in the prompt

Prompts influence how the model interprets the reference image. If the description changes across clips, the model may subtly reinterpret the face.

A stable prompt pattern usually works better than rewriting the description each time.

A useful structure looks like this:

Character description

Age, gender, defining facial features, hairstyle.

Clothing or signature elements

Color or style that stays constant across scenes.

Camera or scene instructions

These should change between clips, but the identity description should remain stable.

Example prompt pattern:

A young woman with shoulder-length black hair, soft round face, light freckles, wearing a red denim jacket, cinematic lighting, medium shot, walking through a night market.

If the next shot requires a different environment, keep the character description intact and only modify the scene portion.

For example:

A young woman with shoulder-length black hair, soft round face, light freckles, wearing a red denim jacket, sitting at a cafe table, warm afternoon lighting.

The important idea is that the identity description remains constant across prompts.
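One way to enforce a fixed identity description is to store it once and build every prompt from it, so only the scene portion can change. This is a minimal sketch with hypothetical names (`CharacterIdentity`, `build_prompt`). It reproduces the two example prompts above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CharacterIdentity:
    # Written once and reused verbatim in every prompt.
    description: str  # age, gender, defining facial features, hairstyle
    signature: str    # clothing or signature elements that stay constant

    def render(self) -> str:
        return f"{self.description}, {self.signature}"


def build_prompt(identity: CharacterIdentity, scene: str) -> str:
    # Only the scene and camera instructions vary between clips.
    return f"{identity.render()}, {scene}."


character = CharacterIdentity(
    description="A young woman with shoulder-length black hair, soft round face, light freckles",
    signature="wearing a red denim jacket",
)
night_market = build_prompt(character, "cinematic lighting, medium shot, walking through a night market")
cafe = build_prompt(character, "sitting at a cafe table, warm afternoon lighting")
print(night_market)
print(cafe)
```

Because the dataclass is frozen, the identity text cannot be edited in place partway through a project. Changing the character requires deliberately creating a new identity.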

Step 3: Anchor the character with reference images

Text prompts alone rarely guarantee identity consistency. The most reliable workflow combines prompts with reference images.

Most video generators allow you to upload a reference image or frame before generating the clip. The model uses that image as a visual anchor.

If you are starting from a photo, you can convert the image into a short clip using an image-to-video workflow.

Once the first clip is generated, export a clean frame where the face is clearly visible. This frame becomes the reference for the next shot.
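If your tool does not offer a frame export, one common option is `ffmpeg`, which can save a single frame at a chosen timestamp. The sketch assumes `ffmpeg` is installed and on your PATH. The file names and the 2.5-second timestamp are placeholders. You would pick the timestamp by scrubbing to a moment where the face is sharp and clearly visible. Writing PNG rather than JPEG avoids adding compression artifacts to the next reference.

```python
import shutil
import subprocess
from pathlib import Path


def frame_export_command(clip: Path, timestamp_s: float, out_png: Path) -> list[str]:
    """Build an ffmpeg command that writes one frame at `timestamp_s` to a PNG."""
    return [
        "ffmpeg",
        "-y",                          # overwrite the output file if it exists
        "-ss", f"{timestamp_s:.3f}",   # seek to the chosen moment before decoding
        "-i", str(clip),
        "-frames:v", "1",              # write exactly one video frame
        str(out_png),
    ]


def export_frame(clip: Path, timestamp_s: float, out_png: Path) -> Path:
    """Run the command; raises if ffmpeg is missing or exits with an error."""
    if shutil.which("ffmpeg") is None:
        raise RuntimeError("ffmpeg was not found on PATH")
    subprocess.run(frame_export_command(clip, timestamp_s, out_png), check=True)
    return out_png


# Placeholder names: a frame from clip one becomes the reference for clip two.
print(" ".join(frame_export_command(Path("clip_01.mp4"), 2.5, Path("ref_clip_02.png"))))
```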

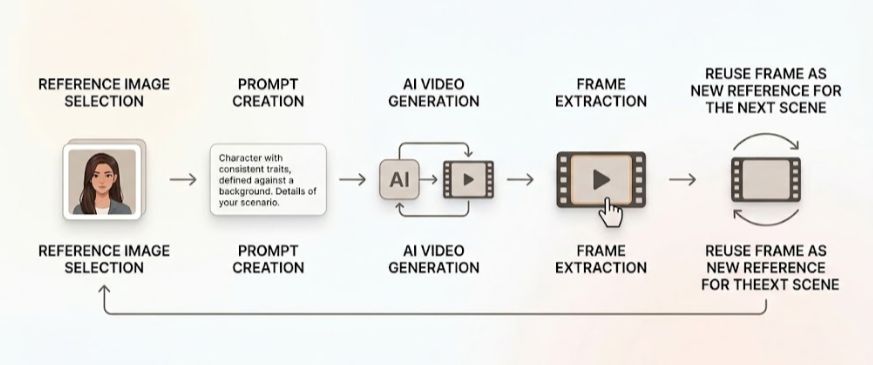

The process then looks like this.

Generate clip one using the reference image and prompt.

Export a frame from that clip.

Use the exported frame as the reference for clip two.

Repeat the process for additional shots.

Each clip is anchored to a frame of the one before it, so frame chaining carries the character's appearance forward and usually reduces identity drift across scenes.
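The chaining loop itself is easy to automate. In the sketch below, `generate` and `export_frame` are hypothetical callbacks. You would connect them to your own generation tool and your preferred frame-export step; no particular vendor API is assumed. The identity text is passed in unchanged for every shot, so only the scene description and the reference frame vary.

```python
from typing import Callable

# Hypothetical callbacks; connect them to whatever tool you actually use.
GenerateFn = Callable[[str, str], str]  # (prompt, reference image path) -> clip path
ExportFn = Callable[[str], str]         # clip path -> path of an exported frame


def chain_shots(
    identity: str,
    scenes: list[str],
    first_reference: str,
    generate: GenerateFn,
    export_frame: ExportFn,
) -> list[str]:
    """Generate shots in order, using a frame from each clip as the next reference."""
    reference = first_reference
    clips: list[str] = []
    for scene in scenes:
        # Identity text stays fixed; only the scene portion changes.
        clip = generate(f"{identity}, {scene}", reference)
        clips.append(clip)
        # A frame from this clip anchors the next shot. The last export is
        # kept too, in case the sequence is extended later.
        reference = export_frame(clip)
    return clips
```

To try the loop without a generator, pass stand-in functions that return file names. Only the first call to `generate` receives the original portrait. Every later call receives the frame exported from the previous clip.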

Step 4: Control motion and camera movement

Even with strong references, extreme motion can cause the model to reinterpret the character.

Large camera moves, fast rotations, or sudden lighting changes make it harder for the model to preserve facial structure.

If you need dramatic motion, it helps to introduce it gradually.

For example, instead of generating a clip where the character spins around immediately, you can start with a stable shot and then generate a second clip with slight movement.

The model often maintains identity better when changes occur incrementally.

Another useful technique is limiting the duration of each generated clip. Shorter clips allow the model to maintain identity more reliably.
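When planning a longer sequence, it can help to fix the clip count up front from a maximum clip length. The sketch below splits a total duration into equal clips that each stay under a cap. `plan_clips` is a hypothetical helper. The 8-second cap in the example is an assumption, since practical limits differ between tools and models.

```python
import math


def plan_clips(total_seconds: float, max_clip_seconds: float) -> list[float]:
    """Split a sequence into the fewest equal-length clips that respect the cap."""
    if total_seconds <= 0 or max_clip_seconds <= 0:
        raise ValueError("durations must be positive")
    count = math.ceil(total_seconds / max_clip_seconds)
    return [total_seconds / count] * count


# A 30-second scene with an assumed 8-second cap becomes four 7.5-second clips.
print(plan_clips(30, 8))
```

Equal lengths avoid ending on a noticeably shorter leftover clip (8 + 8 + 8 + 6).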

After generating the short clips, join them in sequence. If you want stylistic adjustments or smoother continuity, run a video-to-video pass over the result.

Step 5: Maintain lighting and environment continuity

Many users focus on facial features but forget that lighting strongly affects identity perception.

If the lighting direction or color changes drastically, the model may generate a different face that still matches the prompt.

For example, a character generated in soft daylight may look noticeably different under neon lighting.

To reduce this effect, keep certain environmental elements consistent across shots.

- Consistent color temperature

- Similar camera distance

- Gradual changes in background

When a major environment change is required, using a fresh reference frame from the previous clip helps stabilize the character.
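Before generating, you can check a shot list for the kinds of jumps described above. This sketch represents each shot's lighting by an approximate white-balance value in kelvin and a camera-distance label. The field names and the 1500 K threshold are hypothetical choices, not values from any video tool. The example values of 5600 K and 3200 K are the usual photographic shorthand for daylight and tungsten light.

```python
from dataclasses import dataclass


@dataclass
class ShotLighting:
    name: str
    color_temp_k: int     # approximate white balance in kelvin
    camera_distance: str  # e.g. "medium", "close-up"


def flag_lighting_jumps(shots: list[ShotLighting], max_kelvin_step: int = 1500) -> list[str]:
    """Warn about adjacent shots whose color temperature or camera distance changes sharply."""
    warnings: list[str] = []
    for prev, cur in zip(shots, shots[1:]):
        delta = abs(cur.color_temp_k - prev.color_temp_k)
        if delta > max_kelvin_step:
            warnings.append(
                f"{prev.name} -> {cur.name}: color temperature jumps {delta} K; "
                "use a fresh reference frame or add an intermediate shot"
            )
        if cur.camera_distance != prev.camera_distance:
            warnings.append(
                f"{prev.name} -> {cur.name}: camera distance changes "
                f"({prev.camera_distance} -> {cur.camera_distance})"
            )
    return warnings


shots = [
    ShotLighting("market", 5600, "medium"),
    ShotLighting("street", 5000, "medium"),
    ShotLighting("cafe", 3200, "close-up"),
]
for warning in flag_lighting_jumps(shots):
    print(warning)
```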

Common mistakes and how to fix them

Even with a good workflow, character drift can still happen. The table below summarizes the most common causes and practical fixes.

| Problem | Why it happens | Practical fix |
|---|---|---|
| Face gradually changes across clips | The prompt description changes slightly | Keep the identity description identical across prompts |
| Character looks different in each scene | No reference images are used | Anchor every clip with a reference frame |
| Facial features distort during motion | Camera movement is too aggressive | Reduce motion or shorten the clip length |
| Character age or style changes | Lighting and color grading shift dramatically | Keep lighting conditions similar |
| Identity resets after a scene cut | New clip starts without previous frame reference | Export a frame from the prior clip and reuse it |

A useful habit is to troubleshoot inputs one variable at a time. If a clip drifts, do not rewrite everything at once. Start by checking whether the reference image changed, then examine the prompt wording.
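To troubleshoot one variable at a time, it helps to record the inputs of each generation and compare the drifting clip with the last good one. The sketch below assumes you keep those inputs in a plain dictionary; the key names are hypothetical. It lists differences in the order suggested above: reference image first, then prompt wording, then everything else.

```python
def changed_inputs(previous: dict[str, str], current: dict[str, str]) -> list[str]:
    """Return the names of inputs that differ between two generations."""
    # Check the reference first, then the prompt, then the remaining inputs.
    priority = ["reference_image", "identity_prompt", "scene_prompt", "lighting", "camera"]
    others = sorted((previous.keys() | current.keys()) - set(priority))
    return [key for key in priority + others if previous.get(key) != current.get(key)]


last_good = {"reference_image": "ref_01.png",
             "identity_prompt": "A young woman with shoulder-length black hair",
             "scene_prompt": "night market", "lighting": "5600K"}
drifted = {"reference_image": "ref_01.png",
           "identity_prompt": "A young lady with shoulder-length black hair",
           "scene_prompt": "cafe", "lighting": "5600K"}
print(changed_inputs(last_good, drifted))
```

In this example, both prompt fields changed. Revert the identity text first and regenerate. That tells you whether the wording change alone caused the drift.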

What a good result looks like

A successful workflow for character consistency does not mean the character is identical in every frame. Current AI video systems do not maintain a fixed identity model the way traditional animation pipelines do. Instead, they reconstruct the character repeatedly based on prompts, references, and scene context. Because of this, the realistic goal is perceptual continuity rather than pixel-perfect identity.

Perceptual continuity means that viewers immediately recognize the same character across different shots. Even if lighting, camera position, or facial expression changes, the character should still feel like the same person appearing throughout the video.

There are several practical signals that indicate the workflow is producing stable results.

Stable facial structure across clips

The most important indicator is that the core facial structure remains recognizable. Elements such as the spacing between the eyes, the shape of the nose, the jawline, and the overall head proportions should remain consistent from one clip to the next. Minor variations in shading or skin texture are normal, but the underlying structure should not shift.

Consistent defining features

Distinctive visual features help anchor identity. These may include a specific hairstyle, glasses, facial hair, or a recognizable clothing item such as a jacket or accessory. When these elements remain stable, the character appears consistent even if the scene changes.

Natural variation in expressions

Characters should be able to smile, talk, or turn their heads without transforming into a different person. Good outputs allow natural facial expressions while maintaining recognizable identity. If expressions cause large changes in facial structure, the reference signals are likely too weak.

Controlled motion without facial distortion

When the character moves, the face should remain coherent rather than stretching or reshaping dramatically. Moderate motion such as walking, looking around, or subtle head turns typically works well. Extremely fast motion or dramatic camera moves can introduce identity drift.

Continuity between shots

If the video consists of multiple generated clips, transitions between them should feel natural. The character might have slightly different posture or lighting in the next scene, but viewers should still recognize them immediately.

In practice, if someone watching the video can clearly identify the same character throughout the sequence, the workflow has achieved its goal. Small visual variations are expected, but the character should maintain a stable identity across the entire video.

Variations of the workflow

The basic workflow described earlier (reference images, stable prompts, and chained frames) works for most projects. However, different types of content benefit from slightly different reference strategies. Adapting the workflow to the project can noticeably improve character stability.

Using a character reference set

Instead of relying on a single portrait, some creators build a small reference set for the character. This usually includes several images showing the same character from different angles or with different expressions. For example, a reference set might contain a front-facing portrait, a three-quarter angle view, and a side profile.

Providing multiple views helps the model infer a more complete representation of the character’s structure. This approach is particularly useful for projects where the character turns their head, speaks, or appears in multiple scenes.

Frame chaining for narrative videos

Story-driven videos often involve a sequence of shots showing the same character in different environments. In these cases, it is helpful to generate clips sequentially and reuse frames from earlier clips as references for later ones.

The workflow is simple: generate the first clip using the original reference image, export a clear frame from that clip, and use it as the reference for the next shot. Repeating this process allows the character identity to propagate across the sequence, reducing the chance of sudden visual changes.

Gradual scene transitions

Large visual changes can sometimes cause identity drift. For example, moving directly from a bright outdoor environment to a dark interior scene may produce a noticeably different face.

One way to avoid this is to introduce scene changes gradually. Instead of jumping directly between environments, create intermediate clips where the character transitions from one setting to another. This gives the model more visual continuity to work with and often improves stability.
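For the bright-outdoor-to-dark-interior example, the intermediate clips can step the lighting in even increments instead of jumping. This sketch interpolates only color temperature, as a hypothetical stand-in for the whole lighting description. Stepping linearly in kelvin is a simplification; photographers often work in mireds, the reciprocal scale. How many steps a given model needs is something to test.

```python
def intermediate_color_temps(start_k: int, end_k: int, steps: int) -> list[int]:
    """Evenly spaced color temperatures for `steps` clips between two scenes (endpoints excluded)."""
    if steps < 0:
        raise ValueError("steps must be non-negative")
    span = end_k - start_k
    return [round(start_k + span * i / (steps + 1)) for i in range(1, steps + 1)]


# Daylight exterior (about 5600 K) to a tungsten-lit interior (about 3200 K), two bridge clips.
print(intermediate_color_temps(5600, 3200, 2))
```

Write each value into the scene portion of the prompt and leave the identity description unchanged.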

Style variation while preserving identity

Some creators want the same character to appear in different visual styles. For instance, the character might appear in cinematic lighting in one clip and a stylized animated look in another.

When experimenting with style changes, it is usually best to keep the reference image unchanged while modifying only the stylistic elements of the prompt. This allows the model to reinterpret the scene without replacing the underlying character identity.

Recurring character workflows for short-form content

Short-form videos, especially social media content, often rely on the same character appearing in many separate clips. In this situation, the workflow can be simplified. A single strong reference portrait becomes the foundation for every new video.

Each clip begins from the same reference image, while the prompt describes the action or setting. Because the clips are short and usually independent from each other, this approach often provides sufficient consistency for recurring characters such as mascots, presenters, or virtual influencers.

These variations highlight an important point: character consistency is not achieved through a single setting or prompt. It comes from designing a workflow that balances references, prompts, and scene changes in a way that keeps the character recognizable across the entire video.

FAQs

Why do AI video models struggle with character consistency?

Video models do not store a persistent identity for a character. Each generation reconstructs the character from the prompt and reference signals. Small differences in interpretation, especially between separately generated clips, can accumulate and cause the character to drift.

Do all video generators support reference images?

Not all models support them equally. Some tools emphasize prompt-driven generation, while others provide explicit reference workflows. Models like Seedance 2.0, Kling 3.0, Veo 3, Sora, Runway, Pika, Luma, PixVerse, and Magic Hour each implement different approaches.

Is perfect character consistency possible?

Perfect consistency across long sequences is still difficult. Most workflows focus on reducing drift rather than eliminating it entirely. Shorter clips and strong references typically produce the best results.

How many reference images should I use?

For simple clips, one clear portrait is often enough. For longer videos or multiple scenes, two to four reference images from different angles usually improve stability.

Why does the face change when lighting changes?

Lighting influences how facial features are reconstructed by the model. Strong color shifts or dramatic shadows can cause the model to reinterpret the face structure.

Can I keep multiple characters consistent in the same video?

Yes, but it is more challenging. Each character should have its own reference images and consistent prompt description. Generating separate clips for each character and combining them later can improve results.
