Best Reference Image-to-Video Tools (2026): Keep Characters Consistent Without Manual Editing

TL;DR

- Best workflow for consistent AI characters → Seedance 2.0 or Kling 3.0 for reference control, then render scenes with Runway or Veo 3.

- Best fast creator workflow → Pika or Luma for quick reference-based clips, then refine with Runway.

- Best flexible reference pipeline → Magic Hour for image-to-video or video-to-video generation when you need multiple reference inputs.

Why Reference Image-to-Video Matters for AI Video Workflows

In the early wave of AI video generation tools, one problem repeatedly slowed down real production workflows: character consistency. A creator might generate a great first shot, but when they tried to extend the story into the next scene, the character would subtly change. Hair color would shift, clothing details would disappear, or facial structure would morph into something entirely different.

This problem is often described as identity drift. It happens because generative models tend to recreate a subject from scratch each time they produce a frame sequence. Without guidance, the model treats every new prompt as a fresh generation rather than a continuation of the same identity.

Reference image-to-video workflows solve that problem by giving the model a visual anchor. Instead of generating a scene purely from text prompts, the system uses an existing image or video frame as a guide. The model then tries to maintain key attributes (face structure, clothing style, colors, pose characteristics, and overall identity) while generating new frames.

In practical terms, this means creators can maintain continuity across scenes without manually editing each frame or relying on complex compositing tools.

Reference workflows have become one of the most important capabilities in modern AI video tools. Filmmakers, brand teams, and content creators increasingly rely on them to produce story-driven videos where characters must remain recognizable across multiple shots.

According to recent documentation and product releases from leading platforms, most major AI video systems now support some form of reference-guided generation, either through image inputs, video references, or multi-frame conditioning pipelines.

The rest of this guide compares the most relevant tools available in 2026 and explains when each one works best.

Best Reference Image-to-Video Tools at a Glance

| Tool | Best For | Reference Type | Free Plan | Starting Price |
| --- | --- | --- | --- | --- |
| Runway | Balanced control and production workflows | Image + video | Limited | Paid plans vary |
| Veo 3 | Cinematic realism | Image reference | Limited | Varies by platform |
| Kling 3.0 | Character storytelling | Image + pose | Limited | Platform dependent |
| Pika | Fast iteration | Image prompts | Yes | Paid plans available |
| Luma | Visual consistency across shots | Image + scene | Limited | Varies |
| Magic Hour | Flexible reference pipelines | Image + video | Free plan | Creator plan available |
| Seedance 2.0 | Structured reference control | Image + asset library | Limited | Varies |

What “Reference” Means in AI Video Generation

The word “reference” in AI video generation usually refers to a source visual that guides the generation process. This source can be a single image, multiple images, or an existing video clip. Instead of relying purely on text instructions, the AI model analyzes the visual reference and uses it to preserve key characteristics.

There are several types of references commonly used in modern AI video tools.

An image reference is the simplest form. The user uploads a still image of a character or scene, and the model generates motion or new scenes while trying to preserve the appearance of the subject. This approach is widely used for social media storytelling, product shots, and animated portraits.

A video reference works slightly differently. Instead of copying the identity of a subject, the system may copy movement, camera motion, or composition. For example, a creator might use a reference video to replicate the pacing of a cinematic shot while replacing the subject with a generated character.

Some tools also support multi-reference workflows. These systems allow multiple images to define different attributes such as face identity, clothing style, and environment. This method tends to produce more stable results but requires a slightly more structured workflow.

Understanding these differences helps determine which tool is best for a specific project.
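To make the multi-reference idea concrete, the sketch below models a set of references where each one anchors a single attribute. It is only an illustration of the concept: the class names, role names, and file names are hypothetical and do not correspond to any tool's actual API.

```python
from dataclasses import dataclass, field
from enum import Enum


class ReferenceRole(Enum):
    """What a reference is meant to anchor (hypothetical categories)."""
    FACE = "face"
    CLOTHING = "clothing"
    ENVIRONMENT = "environment"
    MOTION = "motion"


@dataclass(frozen=True)
class Reference:
    path: str              # illustrative file name, not a real asset
    role: ReferenceRole
    is_video: bool = False


@dataclass
class ReferenceSet:
    """Groups references so each attribute has at most one anchor."""
    items: dict = field(default_factory=dict)

    def add(self, ref: Reference) -> None:
        # Two references for the same role would give conflicting guidance.
        if ref.role in self.items:
            raise ValueError(f"role {ref.role.value!r} already has a reference")
        self.items[ref.role] = ref

    def missing(self, required):
        """Return the required roles that have no reference yet."""
        return [role for role in required if role not in self.items]


refs = ReferenceSet()
refs.add(Reference("hero_face.png", ReferenceRole.FACE))
refs.add(Reference("hero_jacket.png", ReferenceRole.CLOTHING))

# Check which attributes are still unanchored before generating a scene.
print(refs.missing([ReferenceRole.FACE, ReferenceRole.CLOTHING, ReferenceRole.ENVIRONMENT]))
```

Keeping one reference per role mirrors the point that multi-reference workflows are more stable but need a more structured setup: the structure makes gaps and conflicts visible before any generation credits are spent.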

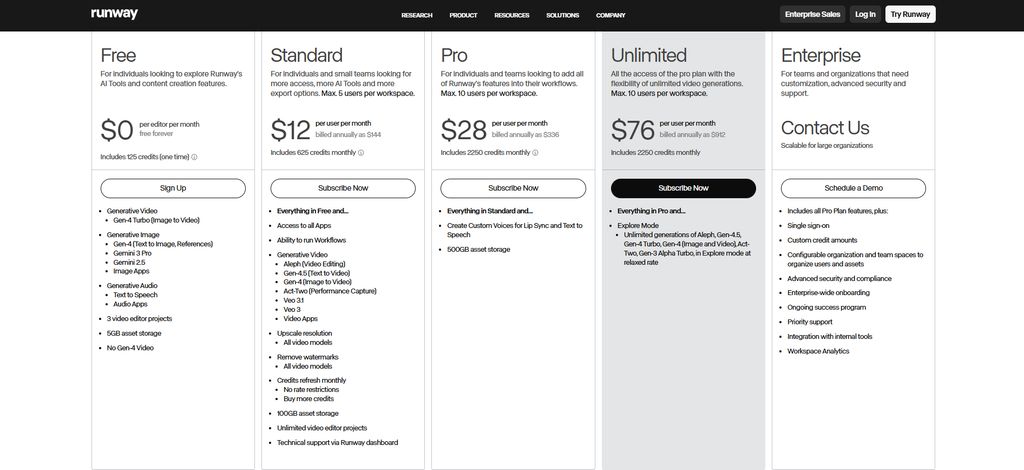

Runway

Runway remains one of the most widely used AI video tools for creators who need a balance between creative freedom and production-ready outputs. Its video models support reference images as part of the generation process, allowing users to guide character appearance while generating new scenes.

The platform is widely adopted in creative industries because it combines video generation with editing tools, compositing features, and timeline-based workflows. Instead of treating AI generation as a standalone feature, Runway integrates it into a broader creative pipeline.

Pros

- Strong ecosystem of video editing tools

- Good balance between quality and speed

- Reference image workflows are easy to test quickly

Cons

- Character identity can still drift across longer sequences

- Higher quality modes require more credits

Best for

Creators and small studios who want reference-guided generation inside a broader video production workflow.

Not for

Teams that need extremely precise character consistency across many scenes.

Pricing

Runway offers multiple subscription tiers with credit-based generation. Pricing details are available on the official Runway website.

Veo 3

Veo 3 is designed to generate cinematic video sequences from prompts and references. Its strength lies in visual realism and scene composition. When used with image references, the model can produce highly detailed shots that resemble live-action footage.

One reason Veo has gained attention among filmmakers is its ability to generate coherent lighting and camera motion. When paired with a strong reference image, the model tends to preserve the overall aesthetic of a subject more effectively than earlier video models.

However, Veo workflows still depend heavily on prompt design. The reference image guides appearance, but prompts must define motion, camera movement, and scene transitions.

Pros

- High visual realism

- Strong cinematic lighting and depth

- Works well for short narrative scenes

Cons

- Limited direct control over character identity in longer stories

- Access may depend on platform availability

Best for

Filmmakers experimenting with cinematic AI video generation.

Not for

High-volume content production where speed and iteration matter more than visual fidelity.

Pricing

Pricing varies depending on the platform that provides Veo access.

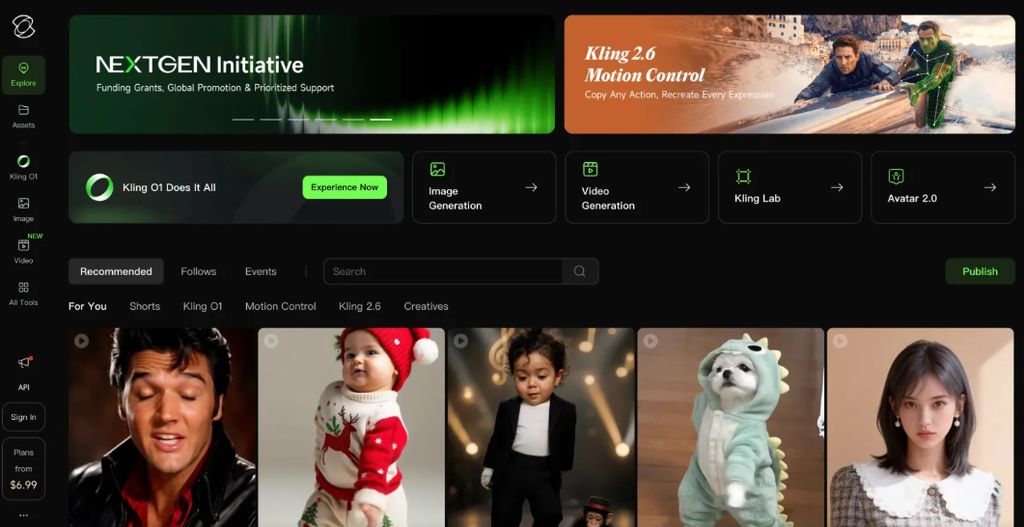

Kling 3.0

Kling 3.0 has become known for producing expressive characters and stylized motion. Its reference workflows allow creators to generate sequences based on an image while preserving the general appearance of the subject.

The system is particularly effective for storytelling scenarios where characters interact with environments or perform actions. When paired with clear prompts, Kling often produces fluid animation and expressive body movement.

However, like many generative video systems, it can struggle with fine details such as hands, small text, or accessories that appear in the reference image.

Pros

- Strong motion quality

- Good for character-focused scenes

- Effective for storytelling content

Cons

- Fine details sometimes change across frames

- Longer sequences can still introduce identity drift

Best for

Creators producing narrative content, social videos, or short animated sequences.

Not for

Workflows that require precise brand asset replication.

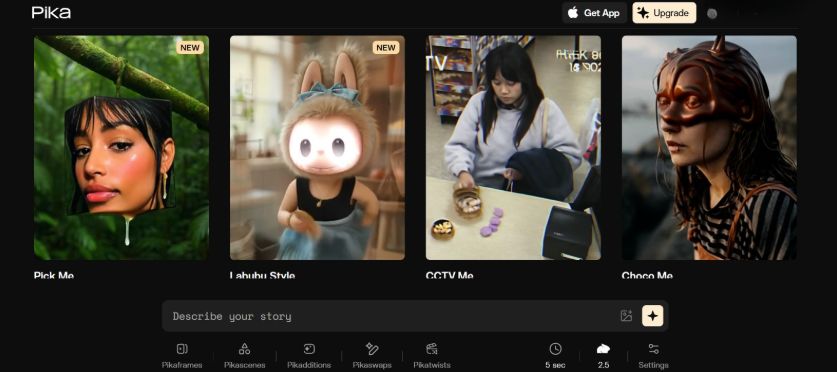

Pika

Pika focuses on accessibility and fast iteration. Its interface encourages experimentation, allowing creators to quickly test prompts and references.

The platform supports image references to guide generation, but its main strength is speed. Users can try multiple variations quickly, which helps refine prompts and visual direction before committing to longer sequences.

Because Pika prioritizes speed, its outputs sometimes show more variation between frames than those of tools focused on cinematic realism.

Pros

- Very fast generation cycles

- Simple interface for beginners

- Good for experimentation

Cons

- Visual stability may vary

- Fine details can change between frames

Best for

Creators exploring visual ideas or testing reference images quickly.

Not for

Projects requiring consistent characters across many scenes.

Pricing

Pika offers both free and paid plans depending on generation volume.

Luma

Luma approaches AI video generation with a focus on visual coherence. Its systems often produce smooth camera motion and relatively stable environments.

When used with reference images, Luma tends to preserve broader visual themes rather than exact character identity. This makes it particularly useful for scene-based storytelling where atmosphere and composition matter more than precise facial consistency.

Pros

- Smooth camera motion

- Strong environmental visuals

- Useful for scene continuity

Cons

- Character identity preservation is less precise

- Detailed elements may change across shots

Best for

Creators producing landscape-heavy or environment-driven content.

Not for

Character-focused narratives requiring strict identity consistency.

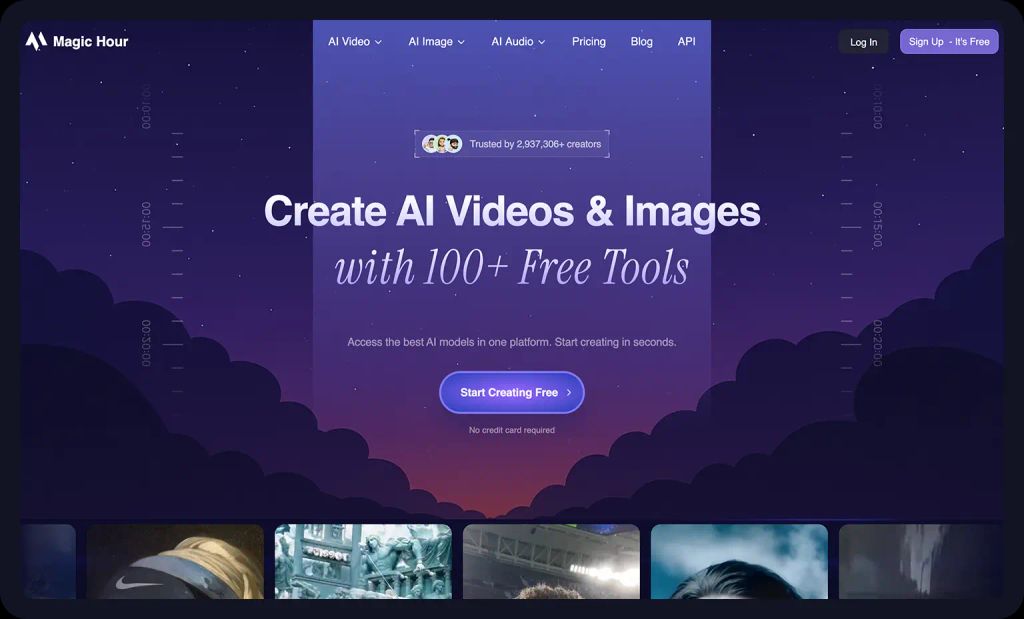

Magic Hour

Magic Hour focuses on making AI video creation accessible while supporting multiple generation workflows, including text-to-video, image-to-video, and video-to-video transformations. These workflows allow creators to use reference images or clips to guide the generation process.

One advantage of Magic Hour is its flexible pipeline. Users can combine prompts with reference visuals to create scenes that remain visually aligned with the original input. This makes the tool useful for creators who want to maintain visual continuity while experimenting with different scene variations.

The platform also integrates several generation modes in a single environment, which reduces the need to switch between different AI tools during production.

Pros

- Supports multiple AI video workflows

- Reference images can guide scene generation

- Accessible interface for creators and teams

Cons

- As with most AI video systems, complex details such as hands and text may still vary

- Longer sequences may require careful prompt tuning

Best for

Creators and teams looking for a flexible platform that combines several AI video generation approaches.

Not for

Workflows that require extremely precise frame-by-frame control.

Magic Hour Pricing (Annual Billing)

Basic – Free

Creator – $10/month (billed annually at $120/year)

Pro – $30/month (billed annually at $360/year)

Business – $66/month (billed annually at $792/year)
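The annual figures above are simply the monthly-equivalent price multiplied by twelve. The short sketch below reproduces that arithmetic from the listed prices; the dictionary and function names are made up for illustration.

```python
# Monthly-equivalent prices from the plan list above (USD, annual billing).
MONTHLY_PRICE_USD = {"Basic": 0, "Creator": 10, "Pro": 30, "Business": 66}


def annual_cost(plan: str) -> int:
    """Total billed per year for a plan: monthly-equivalent price x 12."""
    return MONTHLY_PRICE_USD[plan] * 12


for plan in MONTHLY_PRICE_USD:
    print(f"{plan}: ${annual_cost(plan)}/year")
# Basic: $0/year
# Creator: $120/year
# Pro: $360/year
# Business: $792/year
```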

Seedance 2.0

Seedance 2.0 introduces a more structured approach to reference-guided video generation. Instead of relying on a single input image, the system can organize multiple references within a workflow.

This approach allows creators to define different attributes separately. For example, one reference image might define a character’s face, while another defines clothing style or environment. By separating these elements, the system can produce outputs that maintain visual alignment across multiple scenes.

Pros

- Structured reference pipelines

- Multi-reference workflows

- Good for design experimentation

Cons

- Slightly more complex to set up

- May require several iterations to achieve stable results

Best for

Creators experimenting with structured visual pipelines.

Not for

Users looking for a simple one-prompt generation workflow.

When to Use Image References vs Video References

Reference workflows can take different forms depending on the type of content being created. Choosing between image references and video references can significantly affect the final output.

Image references work best when the goal is to preserve a specific character or visual design. For example, if a creator wants to generate several scenes featuring the same character, providing a clear portrait image gives the AI model a strong anchor. The system will attempt to replicate facial features, clothing colors, and overall identity across generated shots.

Video references are often better suited for capturing motion or composition. A reference clip can guide the model to reproduce camera movement, pacing, or choreography. This technique is commonly used when creators want to replicate the feel of a specific shot but change the subject or environment.

Some advanced workflows combine both approaches. A creator might use an image reference to define character identity while using a short video clip to guide movement. This hybrid method can produce more coherent sequences but requires tools that support multiple reference inputs.
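The choice described in this section can be summarized as a small decision helper. This is a simplified sketch of the reasoning, not a feature of any tool; the function name and its return labels are hypothetical.

```python
def choose_references(preserve_identity: bool, copy_motion: bool) -> list[str]:
    """Map project goals to the reference types described in this section."""
    refs = []
    if preserve_identity:
        # A still image anchors facial features, clothing colors, and identity.
        refs.append("image")
    if copy_motion:
        # A clip guides camera movement, pacing, or choreography.
        refs.append("video")
    # With neither goal, no reference input is needed.
    return refs or ["text prompt only"]


print(choose_references(preserve_identity=True, copy_motion=False))  # ['image']
print(choose_references(preserve_identity=True, copy_motion=True))   # ['image', 'video']
```

The second call corresponds to the hybrid workflow, which only works in tools that accept more than one reference input.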

Example Use Cases for Reference Image-to-Video Tools

Reference workflows appear in many modern AI video projects. A few common examples illustrate how these tools are typically used.

Character storytelling for social media is one of the most common use cases. Creators generate short episodic videos where the same character appears in different situations. Reference images help keep the character recognizable across episodes.

Brand campaigns also benefit from reference workflows. Marketing teams often want a character or mascot to appear consistently across promotional videos. Using reference images allows AI tools to generate new scenes without redesigning the character each time.

Educational content creators sometimes use reference videos to replicate camera motion or presentation styles. By guiding the AI model with a reference clip, they can produce visually consistent lesson segments.

Game designers and filmmakers are also experimenting with reference workflows during pre-visualization. Instead of building full 3D scenes, they generate concept shots using AI video models guided by visual references.

Common Failure Cases in Reference Video Generation

Although reference workflows greatly improve consistency, they do not eliminate all generation issues.

Identity drift can still occur when sequences become too long or when prompts introduce conflicting information. For example, if a prompt requests a new costume or hairstyle, the model may alter the character more than expected.

Hands remain one of the most difficult elements for generative models. Even when a reference image clearly shows hand details, the model may struggle to reproduce them accurately during motion.

Text elements such as signs or logos also present challenges. Because generative models treat letters as visual patterns rather than semantic content, text can appear distorted or inconsistent across frames.

These limitations highlight why reference workflows should be seen as guidance rather than strict constraints.
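One way to act on this is to check generated shots against the reference and flag the ones that have drifted. The sketch below assumes each image or shot has already been converted into an embedding vector by some image or face embedding model, which is not specified here. It compares vectors with cosine similarity. The function names, the toy vectors, and the 0.8 threshold are illustrative assumptions, not values from any tool.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def flag_drifting_shots(reference_vec, shot_vecs, threshold=0.8):
    """Return indices of shots whose similarity to the reference is below threshold."""
    return [
        i for i, vec in enumerate(shot_vecs)
        if cosine_similarity(reference_vec, vec) < threshold
    ]


# Toy embeddings: the first shot stays close to the reference, the second does not.
reference = [1.0, 0.0, 0.0]
shots = [[0.9, 0.1, 0.0], [0.2, 0.9, 0.1]]
print(flag_drifting_shots(reference, shots))  # [1]
```

Flagged shots can then be regenerated or fixed in editing. A suitable threshold depends on the embedding model and would need to be tuned on real footage.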

How We Chose These Tools

This list focuses on AI video tools that support reference-guided generation and are widely used in creative workflows during 2025–2026.

The evaluation considered several factors, including output quality, stability across frames, ease of use, and adoption within the creative community. Tools were also selected based on whether they support practical workflows such as image-to-video generation, scene extension, or motion transfer.

Platforms that exist mainly as research demos or experimental prototypes were excluded in favor of tools that creators can realistically use today.

FAQ

What is reference image-to-video generation?

Reference image-to-video generation is a technique where an AI video model uses an existing image as a visual guide while generating new frames. The goal is to maintain visual consistency in elements such as character identity, clothing, or environment.

Why do AI video characters change between scenes?

Generative models create each scene independently unless guided by references. Without a visual anchor, the model may reinterpret a character every time it generates new frames.

Are reference workflows perfect?

No. Even advanced models may struggle with details such as hands, accessories, or text. Reference inputs improve consistency but do not guarantee identical outputs across long sequences.

What is the difference between image-to-video and video-to-video?

Image-to-video systems animate a still image into motion, while video-to-video systems transform an existing video clip into a different style or scene.

Can reference tools replace traditional video editing?

Not entirely. Many creators still combine AI video generation with traditional editing software to finalize timing, color grading, and transitions.