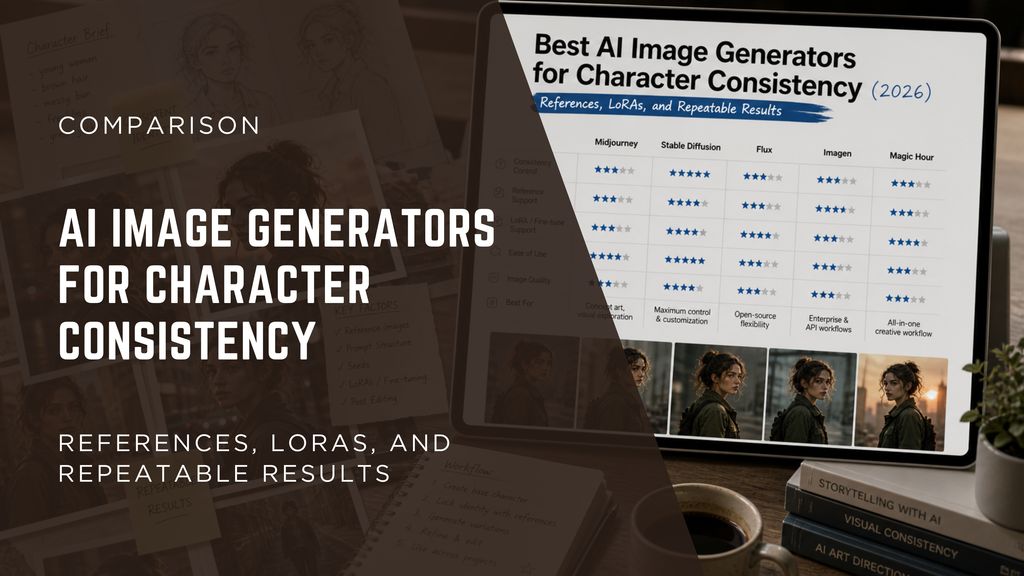

Best AI Image Generators for Character Consistency (2026): References, LoRAs, and Repeatable Results

TL;DR

- For fast, practical character consistency without technical setup, use reference images + editing workflows (e.g., Magic Hour)

- For maximum control and true repeatable AI characters at scale, use Stable Diffusion with LoRA and ControlNet

- For high-quality visuals with lighter consistency needs, tools like Midjourney work well with structured prompts and references

Why character consistency is still hard in AI image generation

Most AI image generators are great at creating a single beautiful image. The problem shows up when you try to generate the same character again in a different pose, lighting condition, or scene. Faces drift. Outfits change. Details disappear.

For creators building stories, games, ads, or social content, this is a real bottleneck. Whether you're making a talking photo, a meme generator pipeline, or a full image to video workflow, inconsistent characters break continuity fast.

What you actually need is not just “good images,” but repeatable systems:

- Same face across angles

- Same clothing across scenes

- Same style across batches

This guide focuses on tools and workflows that make that possible.

Best AI Image Generators for Character Consistency (At a Glance)

| Tool | Best For | Consistency Methods | Platforms | Free Plan | Starting Price |
| --- | --- | --- | --- | --- | --- |
| Magic Hour | All-in-one workflows | Reference + editing | Web | Yes | Free / Paid tiers |
| Stable Diffusion | Maximum control | Seeds, LoRA, ControlNet | Local/Web | Yes | Free (compute cost) |
| Midjourney | Visual quality | Style + prompt anchoring | Discord/Web | No | ~$10/mo |
| Flux | Open model workflows | Fine-tuning, seeds | Local/Web | Yes | Free |
| Imagen | Dev pipelines | API + structured prompts | Cloud | Limited | Usage-based |

What actually drives character consistency

Before diving into tools, it’s worth understanding the five layers that matter in practice: three generation-side techniques (references, seeds, and LoRA), plus editing and workflow design.

1. Reference image generation

Reference images are the most practical way to anchor character identity. Instead of relying purely on text, you give the model a visual example to follow. This significantly reduces variation in facial structure, proportions, and key features across generations.

The quality of your base image matters more than anything else. A clean, neutral, well-lit character image will produce much better downstream consistency than a stylized or noisy one. Most creators spend extra time refining this initial image because every future output depends on it.

Reference workflows are especially effective in fast production environments. Whether you're building emoji-style content, quick meme generator outputs, or lightweight face swap visuals, this method gives you stable results without technical setup. However, it does not fully lock identity, so small variations will still appear over time.

2. Seeds: stabilizing outputs, not preserving identity

Seeds control randomness in the generation process, but they do not define the character itself. Using the same seed can produce similar compositions, but it will not guarantee that the same face or identity appears.

Where seeds are useful is in controlled experimentation. By fixing the seed, you can test prompt changes without introducing new randomness. This helps refine a base character more efficiently.

However, seeds become less reliable as complexity increases. Changes in pose, lighting, or composition often override seed influence. They are best used as a supporting tool, not a primary method for consistency.
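To make the distinction concrete, here is a toy sketch rather than a real diffusion model. It assumes that a seed only determines the starting noise, and it uses Python's standard `random` module as a stand-in for a latent-noise sampler. The helper name `initial_noise` is hypothetical.

```python
import random

def initial_noise(seed: int, size: int = 8) -> list[float]:
    """Return reproducible Gaussian samples for a given seed.

    Stand-in for the starting latent noise of an image model (assumption).
    """
    rng = random.Random(seed)  # private generator, leaves global state alone
    return [rng.gauss(0.0, 1.0) for _ in range(size)]

# Same seed: identical starting noise, so prompt changes can be compared fairly.
same_a = initial_noise(seed=1234)
same_b = initial_noise(seed=1234)

# Different seed: different starting noise, even if the prompt is unchanged.
other = initial_noise(seed=1235)

print(same_a == same_b)  # True
print(same_a == other)   # False
```

Nothing in the seed describes the character. It only fixes the random starting point, which is why it helps with controlled experiments but cannot carry identity on its own.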

3. LoRA and fine-tuning: true identity control

LoRA and fine-tuning methods are the most reliable way to achieve consistent AI characters at scale. Instead of guiding the model indirectly, you train it to recognize and reproduce a specific character.

Once trained, a LoRA model allows you to generate the same character across different scenes with high accuracy. This is essential for production use cases where identity must remain stable over time.

The trade-off is complexity. Training requires data, setup, and iteration. It is not ideal for quick projects, but it becomes necessary for advanced use cases like precise face swap, clothes swapper systems, or long-form storytelling.
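A short sketch of why LoRA counts as lightweight fine-tuning: the original weight matrix W (d x k) stays frozen, and training only learns two small matrices B (d x r) and A (r x k), so the effective weight is W + (alpha / r) * B A. The layer size of 768 and rank of 8 below are illustrative assumptions, not values taken from any specific model, and the helper names are hypothetical.

```python
def lora_param_counts(d: int, k: int, r: int) -> tuple[int, int]:
    """Return (full fine-tune params, LoRA params) for one d x k weight matrix."""
    full = d * k
    lora = r * (d + k)  # B is d x r, A is r x k
    return full, lora

def matmul(x, y):
    """Plain-Python matrix product, fine for tiny illustrative matrices."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*y)] for row in x]

def apply_lora(w, b, a, alpha: float, r: int):
    """Return W + (alpha / r) * (B @ A) without modifying W."""
    delta = matmul(b, a)
    scale = alpha / r
    return [[w_ij + scale * d_ij for w_ij, d_ij in zip(w_row, d_row)]
            for w_row, d_row in zip(w, delta)]

full, lora = lora_param_counts(d=768, k=768, r=8)
print(full, lora)  # 589824 12288

# Tiny rank-1 example: the base weights stay untouched, the update is added on top.
w = [[1.0, 0.0], [0.0, 1.0]]
print(apply_lora(w, b=[[1.0], [2.0]], a=[[3.0, 4.0]], alpha=1.0, r=1))
# [[4.0, 4.0], [6.0, 9.0]]
```

Because the character lives in the small B and A matrices, the trained result can be stored and reused separately from the base model. That reuse is what makes identity repeatable across scenes.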

4. Editing and post-processing: fixing what generation cannot

Even the best generation workflows produce small inconsistencies. Editing is what turns “almost consistent” into usable output. Instead of regenerating repeatedly, you correct issues directly.

This is especially important in pipelines involving gif generator outputs or animation. Small differences that are invisible in single images become obvious when viewed in sequence. Editing helps smooth these transitions.

Tools that combine generation and editing have a clear advantage here. They allow you to fix identity issues quickly without breaking your workflow, which is often more efficient than trying to perfect the initial generation.
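One way to decide which frames need editing is to score each output against the base character. The sketch below assumes you already have a numeric face embedding per image from some face-recognition model, which is not shown here. The three-dimensional vectors, the `flag_drift` helper, and the 0.9 threshold are all illustrative assumptions.

```python
import math

def cosine_similarity(u: list[float], v: list[float]) -> float:
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def flag_drift(reference: list[float], frames: list[list[float]],
               threshold: float = 0.9) -> list[int]:
    """Return indices of frames whose similarity to the reference is below threshold."""
    return [i for i, frame in enumerate(frames)
            if cosine_similarity(reference, frame) < threshold]

reference = [1.0, 0.0, 0.0]   # embedding of the base character (made-up values)
frames = [
    [1.0, 0.0, 0.0],          # identical to the reference
    [0.9, 0.1, 0.0],          # slight drift, still close
    [0.0, 1.0, 0.0],          # a different face entirely
]
print(flag_drift(reference, frames))  # [2]
```

Only the flagged frames go back for editing or regeneration. The rest of the sequence is left alone.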

5. Workflow design: the real source of repeatability

Consistency does not come from any single technique. It comes from how you combine them into a repeatable workflow. The most effective systems follow a structured process: create a strong base, reuse references, keep prompts stable, and refine outputs.

Each layer compensates for the limitations of the others. Prompts guide structure, references anchor identity, seeds control variation, and editing fixes errors. Together, they create a system that can scale.

This becomes critical in complex pipelines like image to video or talking photo generation. Consistency is no longer judged per image but across sequences, making even small deviations more noticeable. A structured workflow is what keeps everything aligned.

Magic Hour

What it is

Magic Hour is an all-in-one AI platform designed to combine image generation and editing into a single, continuous workflow. Instead of treating generation as a standalone step, it focuses on what happens after the image is created: refining, adjusting, and maintaining visual consistency across outputs. This becomes especially important when working with recurring characters across multiple scenes.

Unlike more technical tools, Magic Hour is built for usability first. It allows creators to generate images and immediately refine them using built-in editing tools, reducing the need to export assets into external software. This makes it particularly effective for workflows where iteration speed matters more than model-level control.

The platform is also designed to support multi-step creative pipelines. For example, a user might generate a base character, adjust facial features, then reuse that output in an image to video or lipsync workflow. Maintaining identity across these steps is where Magic Hour provides practical advantages over tools that focus purely on generation.

Another key aspect is accessibility. Magic Hour does not require users to understand seeds, training methods, or model tuning. This lowers the barrier significantly for creators building content like talking photo videos, meme generator assets, or lightweight face swap outputs where consistency matters but does not need to be technically perfect.

Pros

- All-in-one workflow (generation + editing)

- Easy to use with minimal setup

- Strong for multi-step content pipelines

- Fast iteration and correction

Cons

- Limited deep model control

- No native LoRA or fine-tuning system

- Less flexible than open-source setups

Deep evaluation

Magic Hour approaches character consistency from a workflow perspective rather than a modeling perspective. This is a critical distinction. Instead of trying to solve identity preservation entirely at the generation stage, it assumes that some inconsistency will happen and gives users tools to correct it quickly. In real-world content production, this approach is often more efficient.

Compared to Stable Diffusion, Magic Hour sacrifices low-level control in exchange for speed and usability. You cannot fine-tune a model or build a reusable character embedding, but you can achieve consistent-enough outputs much faster. For many creators, especially those producing social content or short-form media, this trade-off is worthwhile.

Another important advantage is how well it integrates into broader pipelines. If your workflow includes steps like online face replacement in video, GIF generation, or emoji-based assets, having editing built into the same platform reduces friction significantly. You spend less time switching tools and more time refining results.

However, the limitations become clear in long-form or production-grade scenarios. Without LoRA or model-level memory, consistency must be maintained manually or through repeated adjustments. This can become inefficient when scaling across dozens or hundreds of images.

Overall, Magic Hour is best understood as a practical system for managing consistency, not enforcing it at a technical level. It excels in speed, accessibility, and workflow cohesion, but is not designed for maximum precision.

Price

- Free plan

- Creator: $10/month (billed annually)

- Pro: $30/month

- Business: $66/month

Best for

Creators, marketers, and teams who need fast, consistent outputs without technical complexity.

Stable Diffusion

What it is

Stable Diffusion is an open-source image generation ecosystem that offers the highest level of control currently available. Rather than being a single product, it consists of models, user interfaces, and extensions that allow deep customization of the generation process.

It is widely used for building consistent-character systems because it supports advanced techniques like LoRA (Low-Rank Adaptation), ControlNet, and seed control. These tools allow users to define and reuse character identity across different scenes and contexts.

Unlike hosted platforms, Stable Diffusion can be run locally or in the cloud. This gives users full control over their data, models, and outputs. However, it also introduces complexity, as users must manage setup, hardware, and configuration.

Another key feature is extensibility. The ecosystem includes tools for tasks like image upscaler workflows, face swap pipelines, and structured generation, making it highly adaptable for different use cases.

Pros

- Maximum control over outputs

- Supports LoRA and fine-tuning

- Highly extensible ecosystem

- No platform lock-in

Cons

- Steep learning curve

- Requires setup and configuration

- Hardware or cloud costs

Deep evaluation

Stable Diffusion is the only option in this list that can deliver true, repeatable character consistency at scale. By training a LoRA model on a specific character, you effectively create a reusable identity that can be applied across unlimited generations. This is essential for production environments such as games, animation, or long-form storytelling.

The addition of ControlNet further enhances this capability by allowing precise control over pose, structure, and composition. This means you can maintain character identity while changing position, expression, or environment, something that prompt-based tools struggle with.

However, the cost of this control is complexity. Setting up a stable pipeline requires understanding multiple components, including model selection, training data, and parameter tuning. For many users, this becomes a barrier that outweighs the benefits, especially for smaller projects.

Compared to Midjourney, Stable Diffusion is less impressive out of the box but far more powerful when customized. Compared to Magic Hour, it requires significantly more effort but delivers stronger long-term consistency.

It is also the preferred choice for technically demanding tasks like clothes-swapping systems or high-accuracy face-swap GIF generation, where even small deviations in identity can break the result. In these cases, simpler tools are not sufficient.

Price

- Free (open-source)

- Additional compute costs depending on usage

Best for

Developers, studios, and advanced creators who need full control and scalable consistency.

Midjourney

What it is

Midjourney is a high-quality AI image generator known for its strong artistic output and ease of use. It operates primarily through a prompt-based interface and is widely used for creative exploration and visual storytelling.

The platform focuses on generating visually appealing images with consistent style rather than strict identity preservation. This makes it popular among designers, illustrators, and marketers who prioritize aesthetics.

While Midjourney has introduced improvements in handling reference images, it still lacks native tools for fine-tuning or persistent character modeling. As a result, maintaining the exact same character across multiple generations remains challenging.

Despite these limitations, Midjourney is often used in early stages of creative workflows, where speed and visual quality are more important than repeatability.

Pros

- High-quality image output

- Strong style consistency

- Easy to use

Cons

- Limited identity consistency

- No LoRA or fine-tuning

- Less control over outputs

Deep evaluation

Midjourney excels in producing visually striking images with minimal effort. For many users, this makes it the fastest way to generate compelling visuals. However, this strength comes at the cost of control. The model prioritizes artistic interpretation over strict adherence to input constraints.

When it comes to character consistency, Midjourney relies heavily on prompt engineering and reference images. While this can produce similar-looking characters, it does not guarantee identity preservation. Small variations in prompts or generation conditions can lead to noticeable differences.

Compared to Stable Diffusion, Midjourney is significantly easier to use but far less precise. Compared to Magic Hour, it produces higher-quality images initially but offers fewer tools for correcting inconsistencies afterward.

This makes it best suited for concept development, mood boards, or short-form content where consistency is not critical. It can also be used alongside free image generators for experimentation or early-stage visual ideation.

In summary, Midjourney is a powerful creative tool but not a reliable system for maintaining consistent characters across multiple outputs.

Price

- Starts at approximately $10/month

Best for

Designers and creators who prioritize visual quality over strict consistency.

Flux

What it is

Flux is a newer generation of open-weight AI image models that aims to balance quality and flexibility. It is part of a broader trend toward accessible, high-performance models that can be run locally or customized.

Unlike older open-source models, Flux focuses on improving output quality while maintaining flexibility. This makes it appealing to users who want more control than hosted tools but less complexity than full Stable Diffusion setups.

Flux can be integrated into various workflows, including experimental pipelines for text to video or hybrid generation systems. Its flexibility makes it suitable for users exploring new creative approaches.

However, the ecosystem around Flux is still developing, and tools for fine-tuning and consistency are not as mature as those in Stable Diffusion.

Pros

- Open-weight flexibility

- High-quality outputs

- Growing ecosystem

Cons

- Less mature tooling

- Limited documentation

- Not yet standardized

Deep evaluation

Flux represents a middle ground between closed platforms and fully customizable systems. It offers more flexibility than tools like Midjourney while avoiding some of the complexity of Stable Diffusion. This makes it attractive for users who want control without committing to a full technical stack.

In terms of character consistency, Flux shows promise but is not yet a complete solution. While it can produce stable outputs with careful prompting, it lacks the robust fine-tuning infrastructure needed for long-term identity preservation.

Compared to Stable Diffusion, Flux is easier to experiment with but less powerful for production use. Compared to Magic Hour, it offers more control but requires more setup and lacks integrated editing tools.

Flux is particularly useful for experimental workflows, including free image-generator setups or hybrid pipelines. However, it is not yet reliable enough for projects that require strict consistency across many outputs.

Overall, Flux is worth watching but should be considered an evolving tool rather than a finished solution.

Price

- Free (open-weight models)

Best for

Advanced users experimenting with new models and flexible workflows.

Imagen

What it is

Imagen is a cloud-based AI image generation system developed by Google, designed primarily for developers and enterprise use cases. It is accessed through APIs and integrated into larger software systems rather than used as a standalone tool.

The platform emphasizes reliability, scalability, and structured generation. It is often used in applications where consistency is enforced through backend logic rather than manual control.

Imagen does not provide the same level of customization as open-source models, but it offers strong baseline performance and integration capabilities.

It is commonly used in production systems such as automated headshot generator tools or content pipelines that require predictable outputs.

Pros

- Scalable and reliable

- Strong API integration

- High baseline quality

Cons

- Limited customization

- Not beginner-friendly

- Requires development resources

Deep evaluation

Imagen is best understood as an infrastructure tool rather than a creative tool. Its strength lies in its ability to generate consistent outputs within structured systems. Instead of relying on manual adjustments, developers can enforce consistency through controlled inputs and logic.
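As a rough illustration of enforcing consistency through controlled inputs, the sketch below only builds a request from values in a fixed vocabulary and rejects anything else. It is not the Imagen API. The `build_request` function, the allowed value sets, and the dictionary fields are hypothetical and exist only to show the idea.

```python
# Fixed vocabularies: backend code can only ask for combinations that were vetted.
ALLOWED_POSES = {"front", "three-quarter", "profile"}
ALLOWED_LIGHTING = {"studio", "daylight", "evening"}

def build_request(character_id: str, pose: str, lighting: str) -> dict:
    """Validate inputs and return a generic request payload (hypothetical shape)."""
    if pose not in ALLOWED_POSES:
        raise ValueError(f"unsupported pose: {pose!r}")
    if lighting not in ALLOWED_LIGHTING:
        raise ValueError(f"unsupported lighting: {lighting!r}")
    return {
        "character_id": character_id,
        "prompt": f"character {character_id}, {pose} view, {lighting} lighting",
    }

print(build_request("hero-01", "profile", "studio")["prompt"])
# character hero-01, profile view, studio lighting
```

Rejecting free-form values at this layer means every request that reaches the image service has the same structure, which is what makes large batches easier to standardize.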

Compared to Stable Diffusion, Imagen offers less flexibility but more stability. Compared to Midjourney, it is less visually expressive but more predictable. Compared to Magic Hour, it lacks editing capabilities but integrates better into automated pipelines.

This makes Imagen ideal for large-scale applications where consistency must be maintained across thousands of outputs. However, it is less suitable for individual creators or small teams.

In workflows involving automation, such as large-scale content generation or structured pipelines, Imagen can outperform more flexible tools simply because it is easier to standardize.

However, for tasks that require fine-grained control over character identity, such as advanced face swap or detailed customization, Imagen may not provide sufficient flexibility.

Price

- Usage-based (API pricing)

Best for

Startups and enterprises building scalable AI-powered products.

Example workflow: how to get consistent characters in practice

A simple, practical workflow that works across most tools:

- Generate a base character (high quality, neutral pose)

- Save it and reuse it as a reference image

- Lock prompt structure (don’t change phrasing too much)

- Use editing tools to fix small inconsistencies

- If needed, move to LoRA training for full control

This workflow scales from:

- simple meme generator use cases

- to full talking photo pipelines

- to multi-scene storytelling
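The "lock prompt structure" step in the workflow above can be sketched as a small, immutable character sheet. This is one possible layout under stated assumptions, not a feature of any tool in this guide. The `CharacterSheet` class, its fields, the example character, and the file name `base_character.png` are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)  # frozen: the character definition cannot be edited by accident
class CharacterSheet:
    identity: str         # face, hair, build: never changes between scenes
    outfit: str
    style: str
    reference_image: str  # path to the saved base image (hypothetical)

    def prompt(self, scene: str) -> str:
        """Build a prompt in which only the scene varies."""
        return f"{self.identity}, wearing {self.outfit}, {scene}, {self.style}"

sheet = CharacterSheet(
    identity="young woman with short silver hair and green eyes",
    outfit="a red flight jacket",
    style="clean digital illustration",
    reference_image="base_character.png",
)

for scene in ["standing in a rainy street", "sitting in a cockpit"]:
    print(sheet.prompt(scene))
```

Every prompt starts with the same identity text and ends with the same style text, so the only thing that varies from one generation to the next is the scene.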

How we chose these tools

We evaluated tools based on practical consistency, not just image quality.

Criteria included:

- Identity stability across multiple generations

- Support for reference image workflows

- Ability to control outputs (seeds, LoRA, editing)

- Speed and usability

- Integration into real workflows (image to video, lipsync, etc.)

We prioritized tools that creators can actually use in production, not just demos.

Market trends: where character consistency is going

There are three clear trends shaping this space.

First, tools are moving from pure generation to full workflows. Platforms now combine image editor, generation, and transformation in one place.

Second, multi-modal pipelines are becoming standard. Character consistency now matters across:

- images

- video

- gif generator outputs

Third, lightweight fine-tuning (like LoRA) is becoming more accessible. What used to require deep technical setup is slowly becoming productized.

Which tool is best for you?

If you are a solo creator

Start with Magic Hour or Midjourney. You’ll get fast results without complexity.

If you are building a startup or product

Use Stable Diffusion or Imagen. You need control and scalability.

If you are experimenting

Try Flux. It gives flexibility without long-term commitment.

If your workflow includes things like face swap, image upscaling, or face replacement in video, prioritize platforms that support editing alongside generation.

FAQs

What is a character consistency AI image generator?

It’s a tool or system designed to generate the same character across multiple images with minimal variation. This usually involves reference images, seeds, or fine-tuning methods.

Which tool is best for consistent AI characters?

Stable Diffusion is the most powerful for control. Magic Hour is the easiest for practical workflows.

Can AI maintain the same character across images?

Yes, but it depends on the method used. Reference images and LoRA training are the most reliable approaches.

Is LoRA necessary for consistency?

Not always. For simple use cases, reference workflows are enough. For production-level consistency, LoRA becomes important.

Are free tools good enough?

Some free image generators are good enough for basic consistency, but advanced control usually requires more setup or paid tools.

How does this connect to video workflows?

Consistent images are the foundation for text to video, talking photo, and lipsync systems. If your base images are inconsistent, the video output will be too.