8 Best AI Image-to-Video Generators in 2026 (Tested: Free and Paid)

Quick answer: The best AI image-to-video generator in 2026 depends on what you are animating. For your own real photos with no watermark on a free plan, Magic Hour. For cinematic quality from prompts or reference images, Kling 3.0 or Runway Gen 4. For product photography, Luma Dream Machine. For video with synchronized audio from a single image, Veo 3 or Seedance 2.0. For budget social content, Pika 2.5 at $8/mo.

Image-to-video AI has crossed a meaningful threshold in 2026. What once produced jittery, artifact-heavy clips that were recognizably AI now generates footage that holds up under real scrutiny — smooth motion, preserved identity across frames, and in some cases, synchronized audio generated alongside the video in a single pass.

The tools are not interchangeable. Some are built for animating your own uploaded photos. Others are built for generating cinematic footage from a reference image using AI motion models. A third category generates audio and video simultaneously from a single source image. Picking the wrong category is the most common reason creators are disappointed with their results.

This guide covers the 8 tools that actually matter for image-to-video in 2026, with verified pricing, honest limitations, and a use-case routing table so you can get to the right tool in under a minute.

All 8 Tools at a Glance

Key specs for every tool on this list, verified March 2026.

| Tool | Best For | Free Plan | Paid From | Max Length | Native Audio |
| --- | --- | --- | --- | --- | --- |
| Magic Hour | Your photos into video + full workflow | 400 credits, no watermark | $10/mo | Credit-based | No |
| Kling 3.0 | Cinematic realism, human motion | 66 credits/day, watermarked | $10/mo | 2 minutes | Yes (Kling 2.6) |
| Runway Gen 4 | Camera control, character consistency | 125 one-time credits | $12/mo | 16 seconds | No |
| Luma Dream Machine | Fast motion, product photography | 30 credits/mo, watermarked | $9.99/mo | 5 minutes | No |
| Pika 2.5 | Social effects, budget entry point | 80 credits/mo, no watermark | $8/mo | 5 seconds | No |
| Veo 3 / Flow | Native audio-video, reference accuracy | Limited via Flow | $19.99/mo | 8 seconds | Yes |
| Seedance 2.0 | Budget native audio, multi-modal inputs | Daily credits via Dreamina | ~$9.60/mo | 15 seconds | Yes |
| PixVerse | Stylized motion, keyframe swaps | 90+60 daily credits, watermarked | $10/mo | 5 seconds | No |

Pricing verified from official sources, March 2026.

Find the Right Tool for Your Use Case

Use this table to go directly to the right section based on what you are making.

| Use Case | Best Tool | Why |
| --- | --- | --- |
| Animating your own real photos | Magic Hour | Preserves your image exactly as uploaded, watermark-free free plan, full creator workflow alongside |
| Cinematic B-roll and human motion | Kling 3.0 | Highest benchmark scores for photorealistic human characters, up to 2-minute output, generous free tier |
| Character consistency across shots | Runway Gen 4 | Reference image system keeps identity locked across separate generations better than any other tool |
| Product photography animation | Luma Dream Machine | Reliable motion on product and environmental content, fast generation, up to 5-minute sequencing |
| Fast social content on a tight budget | Pika 2.5 | Cheapest paid plan at $8/mo, no-watermark free tier with credit rollover, fastest iteration cycle |
| Video with synchronized audio from a photo | Veo 3 / Flow | Native audio-video joint generation from a single image input, best audio integration available |
| Budget native audio on a global platform | Seedance 2.0 | Same native audio capability as Veo 3 at roughly half the cost, free daily credits via Dreamina |
| Stylized and effects-driven social clips | PixVerse | Keyframe-controlled object and background swaps alongside face swap, strongest for stylized output |

What Separates Good Image-to-Video Tools from Bad Ones?

The quality gap between tools is wider than most comparison articles let on. These are the factors that determine whether output is actually publishable.

Identity preservation. The tool should produce a video where the subject in your source image looks the same throughout every frame. Tools that warp faces, morph skin tones, or gradually alter the subject's features mid-clip are unusable for anything where the source image actually matters.

Motion naturalness. Does the AI-generated movement look physically plausible? Hair, fabric, liquid, and human limbs are the hardest to render convincingly. Cheap models produce robotic or jittery movement that collapses the illusion immediately.

Prompt adherence for reference inputs. When you provide a reference image and a motion prompt, does the model actually follow both? Some tools are strong on one but weak on the other, producing generic movement that ignores the specific instruction.

Free tier reality. Most free plans either watermark outputs, cap to 480p, or give you so few credits that meaningful testing is impossible. Pika's 80-credit no-watermark rollover plan and Magic Hour's 400-credit no-watermark plan are the exceptions on this list.

Native audio. In 2026, generating synchronized audio alongside video from a single image is possible with Veo 3 and Seedance 2.0, and Kling offers it on its 2.6 model at a much higher credit cost. If your use case requires audio, these are the tools on this list that handle it natively.

8 Best AI Image-to-Video Generators in 2026

Each tool below was evaluated on output quality against real uploaded images, free tier usefulness, pricing accuracy, and honest failure modes. No tool is perfect for every use case — the sections below are specific about where each one works and where it does not.

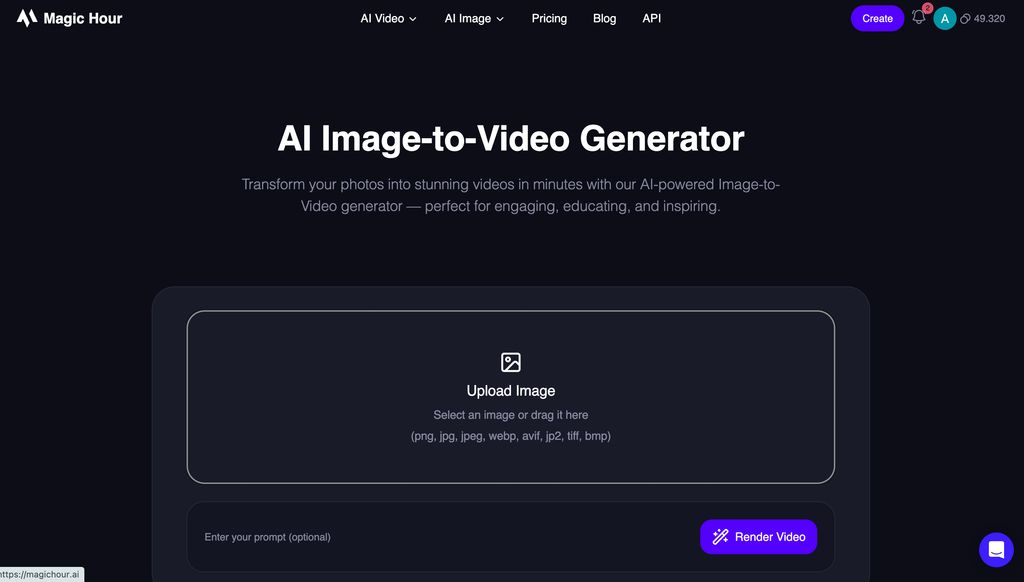

1. Magic Hour — Best for Animating Your Own Real Photos

Magic Hour is the strongest starting point for creators who want to animate their own uploaded photos rather than generate footage from scratch with a text prompt. You upload an image, choose a motion style, and the AI generates smooth, stylized video that preserves the identity of your source material. The face swap, lip sync, and talking photo tools work in the same workflow, which means a product photo, a headshot, or a brand asset can go from still image to animated video to lip-synced presentation without switching platforms.

The free plan is the most useful on this list for testing with real assets: 400 credits, no watermark, no credit card required, and credits that never expire. That is more than enough to evaluate output quality against your specific images before paying anything.

Magic Hour is not a standalone text-to-video prompt generator for creating footage from scratch. Its strength is animating content you already have. For prompt-to-video generation, it pairs naturally with Kling or Runway.

Strengths

- Animates your own uploaded photos with strong identity preservation

- Face swap, lip sync, and talking photo in the same workflow as image-to-video

- 400 free credits, no watermark, no credit card, credits never expire

- Works on any device from a browser, including mobile

- Trusted by teams at Meta, NBA, and L'Oreal

Limitations

- Not a prompt-to-video tool for generating footage from scratch

- Video length per render is credit-based — longer clips require more credits

Pricing

- Free: 400 credits, no watermark, 576px, no credit card required

- Creator: $10/mo annual — 120,000 credits/yr, 1024px, commercial use

- Pro: $30/mo annual — 360,000 credits/yr, 1472px

- Business: $66/mo annual — 840,000 credits/yr, 4K, full API

Pro tip: After animating your image in Magic Hour, use the talking photo or lip sync tool to layer audio onto the same output. One source image can produce a complete speaking video without any additional filming.

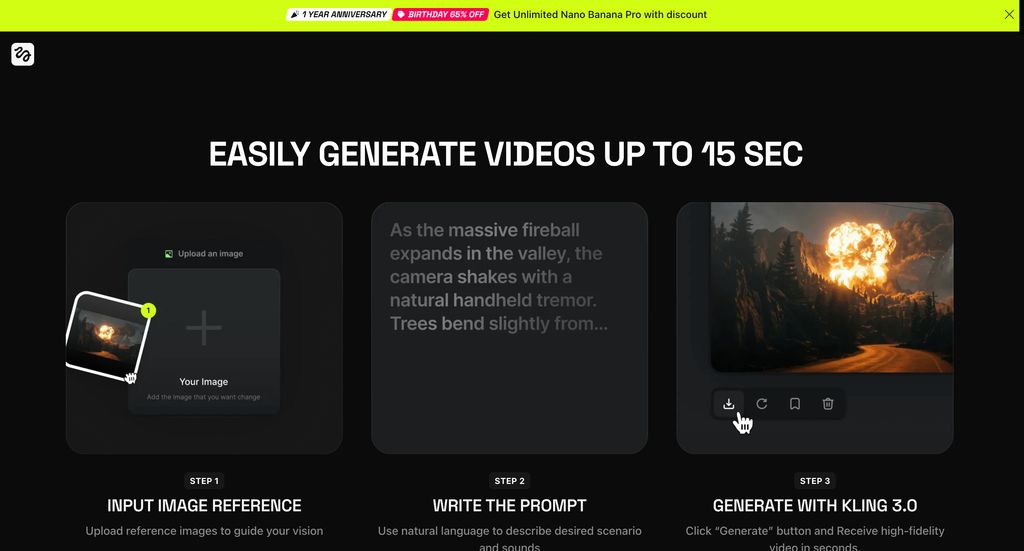

2. Kling 3.0 — Best for Cinematic Realism and Human Motion

Kling 3.0 is the current benchmark leader for photorealistic image-to-video generation. Upload a reference image, add a motion prompt, and the model produces footage with natural physics, realistic skin rendering, and smooth character movement that holds up at HD resolution. For product photography, lifestyle imagery, and any content requiring believable human motion, Kling 3.0 consistently outperforms other models in independent testing.

The free tier is genuinely usable: 66 credits per day that refresh daily, enough for 2-3 short clips per day to evaluate quality against your source images. The Standard plan at $10/mo unlocks watermark-free output and commercial rights.

Two limitations are worth knowing before committing. First, paid credits expire at the end of their validity period if unused, unlike Magic Hour's never-expiring credits. Second, native audio, while available on the Kling 2.6 model, consumes roughly 5 times more credits than silent video. Budget based on your actual quality settings, not the headline credit count.

Strengths

- Top benchmark scores for photorealistic human characters and motion in 2026

- Up to 2-minute output, second on this list only to Luma's 5-minute chained sequences

- Motion Brush for animating specific regions of your image independently

- 66 daily free credits — the most generous ongoing free tier in this category

- Native audio available on Kling 2.6 model for synchronized sound generation

Limitations

- Paid credits expire within their validity period — no rollover

- Professional mode and native audio drive real per-video cost significantly above headline pricing

- No refunds on failed generations, even when the platform is at fault

Pricing

- Free: 66 credits/day, refreshes daily, watermarked, personal use only

- Standard: $10/mo — 660 credits, watermark-free, 1080p, commercial use

- Pro: $30/mo — 3,000 credits, priority rendering

- Premier: $75/mo — 8,000 credits, all models, maximum output

Pro tip: Start in Standard mode to evaluate whether your source image animates well before switching to Professional mode. Standard uses 3.5 times fewer credits per clip and is good enough to validate the motion direction and identity preservation before you commit to higher-credit Professional generations.

3. Runway Gen 4 — Best for Camera Control and Character Consistency

Runway Gen 4 is the production standard for creators who need precise control over how their image animates. Rather than describing motion in a prompt and hoping for the best, Runway lets you specify camera movement direction, zoom, panning, and pacing with dedicated controls. The reference image system locks character identity, clothing, and facial features across separate generations — critical for any content where the same person or product needs to appear consistently across multiple clips.

The Aleph model in Runway goes further: you can direct exactly what to change in an existing image or clip using natural language, without regenerating from scratch. For product imagery where one element needs to animate differently, or for character animations where the background should change but the subject should stay constant, this is the strongest precision tool available.

The main trade-off is output length. At 16 seconds maximum per generation, Runway requires stitching clips for longer content. And the free plan — 125 one-time credits — is genuinely limited, enough to test a prompt or two but not to evaluate the tool properly.

Strengths

- Precise camera controls for specifying movement, zoom, and pacing direction

- Reference image system keeps character identity consistent across separate generations

- Aleph model for directed editing — describe what to change without regenerating

- Act-Two for driving character motion from a reference video performance

- ProRes export on Pro plan for professional post-production workflows

Limitations

- Maximum 16 seconds per generation, so longer content requires stitching clips

- Credits do not roll over — unused credits expire at the end of each billing cycle

- Free plan (125 one-time credits) is evaluation-only, not a usable ongoing free tier

- No native audio generation

Pricing

- Free: 125 one-time credits, watermarked — evaluation only

- Standard: $12/mo annual ($15 monthly) — 625 credits/mo, watermark-free, 1080p

- Pro: $28/mo annual ($35 monthly) — 2,250 credits/mo, 4K, ProRes, custom voices

- Unlimited: $76/mo annual ($95 monthly) — 2,250 credits + Explore Mode unlimited relaxed-rate generation

Pro tip: Use Gen-4 Turbo for iterating and testing your motion prompts: it costs 5 credits per second compared to 12 for standard Gen-4. Once you have confirmed the motion direction and identity are correct on the Turbo generation, switch to full Gen-4 for the final deliverable. This makes your credit allocation go roughly 2.4 times further.
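The arithmetic behind that 2.4x figure can be sketched in a few lines of Python. This is an illustrative calculation only: it assumes the per-second credit costs quoted in the tip (5 for Gen-4 Turbo, 12 for Gen-4) and the Standard plan's 625 monthly credits, and `seconds_of_footage` is a hypothetical helper, not part of any Runway API.

```python
# Illustrative only: credit costs are the figures quoted in this article,
# and seconds_of_footage is a hypothetical helper, not a Runway API call.
CREDITS_PER_SECOND = {"gen4_turbo": 5, "gen4": 12}


def seconds_of_footage(credits: int, model: str) -> float:
    """Return how many seconds of video a credit balance covers."""
    return credits / CREDITS_PER_SECOND[model]


standard_plan_credits = 625  # Standard plan monthly allowance from the pricing list
turbo = seconds_of_footage(standard_plan_credits, "gen4_turbo")
full = seconds_of_footage(standard_plan_credits, "gen4")

# Compare how far the same balance stretches on each model.
print(f"Gen-4 Turbo: {turbo:.0f} s, Gen-4: {full:.0f} s, ratio: {turbo / full:.1f}x")
```

On these numbers, a month of Standard credits covers about 125 seconds of Turbo drafts or about 52 seconds of full Gen-4 output, which is why drafting in Turbo and finishing in Gen-4 tends to be the cheaper workflow.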

4. Luma Dream Machine — Best for Product Photography and Long Sequences

Luma Dream Machine generates fast, visually polished motion from a reference image, with particular strength on product photography, environmental scenes, and object animation. Upload a product shot and add a motion prompt, and Luma produces natural lighting transitions, depth-of-field movement, and camera pan or zoom that makes the image feel filmed rather than generated. For e-commerce and brand content where the product needs to look real rather than stylized, Luma is the most consistent tool tested.

The standout capability over every other tool on this list is the extension feature. Rather than being capped at 5-16 seconds, Luma can chain clips into sequences up to 5 minutes long. For any image-to-video workflow that needs more than a social clip — product demos, background loops, ambient sequences, or longer narrative content built from a starting image — this removes the hard limit that every other tool imposes.

The free tier is limited at 30 credits per month with watermarked output. The Standard plan at $9.99/mo is the right entry point, giving 120 credits and watermark-free commercial use.

Strengths

- Extension feature allows chaining clips to sequences up to 5 minutes — unique on this list

- Strong motion quality on product photography, environmental content, and object animation

- Fast generation times, typically under 2 minutes for a 5-second clip

- Ray3 model adds HDR output, 4K upscaling, and keyframe editing

- Web and iOS access with a clean, approachable interface

Limitations

- Free plan limited to 30 credits per month with watermarks — not suitable for ongoing free use

- Character consistency across separate generations is inconsistent

- Human face and body motion is weaker than Kling or Runway

- No native audio generation

Pricing

- Free: 30 credits/mo, watermarked, personal use only

- Standard: $9.99/mo — 120 credits, watermark-free, commercial use

- Pro: $49.99/mo — 400 credits + Unlimited queued mode, priority generation

- Unlimited: $94.99/mo — relaxed-rate unlimited generation

Pro tip: For product animation, shoot your source image on a clean neutral background. Luma handles background motion and lighting transitions best when the original image is well-lit and uncluttered. Complex backgrounds with competing elements often produce inconsistent motion in non-subject areas.

5. Pika 2.5 — Best Budget Option for Social Content and Creative Effects

Pika 2.5 is the best entry point for creators who want image-to-video capability without a meaningful financial commitment. The free plan gives 80 credits per month with no watermark and credits that roll over — a combination no other major tool on this list offers. The Standard plan at $8/mo is the lowest-cost paid tier available.

Beyond pricing, Pika's creative effects suite is genuinely distinctive. Pikaffects, Pikaswaps, and Pikascenes allow stylized transformations and scene replacements that no other tool matches for fast, experimental social content. For a creator who wants to animate a selfie into a trending format, add a stylized motion effect to a product photo, or produce a batch of quick social clips from a library of still images, Pika delivers faster results than any other tool at this price point.

The limitation is output quality ceiling. At 5 seconds maximum per generation and a focus on stylized rather than photorealistic motion, Pika is the wrong choice when the goal is production-grade cinematic footage or content requiring realistic human movement. It is the right choice when speed, effects, and cost matter more than fidelity.

Strengths

- Free plan: 80 credits/mo, no watermark, rollover — best free tier on this list for casual creators

- Standard plan at $8/mo — lowest paid entry point of any major tool

- Pikaffects, Pikaswaps, and Pikascenes provide creative effects no other tool matches

- Fastest iteration cycle for short-form social clips from images

- Credits roll over month to month on paid plans

Limitations

- Maximum 5 seconds per generation, tied with PixVerse for the shortest on this list

- Output is stylized, not photorealistic — not suitable for production-grade realism

- No native audio generation

Pricing

- Free: 80 credits/mo, no watermark, rollover, 480p resolution

- Standard: $8/mo annual ($10 monthly) — 700 credits, all resolutions, fast generation

- Pro: $28/mo annual ($35 monthly) — 2,300 credits, faster generation

- Fancy: $76/mo annual ($95 monthly) — 6,000 credits, maximum capacity

Pro tip: Pika's free plan is the best way to test whether AI image-to-video fits your workflow before spending anything. The 80 monthly credits with no watermark and rollover give you enough to generate a realistic set of social clips from your image library and evaluate the output quality for your specific content type.

6. Veo 3 / Google Flow — Best for Native Audio from a Single Source Image

Veo 3 and its latest version Veo 3.1 are Google DeepMind's flagship video generation models. For image-to-video use cases specifically, the defining capability is native audio-video joint generation: you upload a reference image, write a prompt, and receive a video with synchronized dialogue, sound effects, and ambient audio generated alongside the visual content in a single pass.

This matters for a specific and growing set of workflows. Product videos that need ambient sound. Character animations that need dialogue. Environmental scenes that need realistic acoustic texture. Most other tools on this list require separate audio post-production for these results. Veo 3 generates them natively from your source image.

Access is primarily through Google Flow, bundled with the Google AI Pro plan at $19.99/mo. That plan gives access to Veo 3.1 Fast, which produces strong results for most creator use cases. Full Veo 3.1 quality requires the Ultra plan at $249.99/mo — a significant jump that makes sense for agencies and high-volume production teams, but is expensive for individuals.

Strengths

- Native audio-video joint generation from a single source image, which on this list only Seedance 2.0 and Kling 2.6 also offer

- Supports up to 3 reference images for precise identity and environment anchoring

- Strong prompt adherence and visual realism at top benchmark levels

- Google Flow provides scene building and editing alongside generation

- API access via Vertex AI for developer workflows at $0.15-0.40/sec

Limitations

- Maximum 8 seconds per generation — requires clip chaining for longer content

- Full Veo 3.1 quality requires AI Ultra at $249.99/mo — steep for individual creators

- Veo 3.1 Fast (on AI Pro) is meaningfully lower quality than full Veo 3.1

- Limited to Google's ecosystem and credit structure in the web interface

Pricing

- Free: Limited monthly AI credits via Flow in some regions

- AI Pro: $19.99/mo — Veo 3.1 Fast via Flow, Gemini Advanced, 2TB storage

- AI Ultra: $249.99/mo — full Veo 3.1, highest output limits, 30TB storage

- API (Vertex AI): $0.40/sec standard, $0.15/sec Fast (both with audio)
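To put the per-second API rates in per-clip terms, here is a minimal sketch. It assumes only the figures quoted in this article ($0.40/sec standard, $0.15/sec Fast, 8-second cap per generation); `clip_cost` is a hypothetical helper and not part of the Vertex AI SDK.

```python
# Per-second API rates quoted in the pricing list above (USD, with audio).
# clip_cost is a hypothetical helper, not part of the Vertex AI SDK.
RATE_PER_SECOND = {"standard": 0.40, "fast": 0.15}
MAX_CLIP_SECONDS = 8  # Veo 3 per-generation cap cited in this article


def clip_cost(seconds: float, tier: str) -> float:
    """Return the estimated USD cost of one generated clip."""
    if seconds > MAX_CLIP_SECONDS:
        raise ValueError("longer content needs several chained clips")
    return round(seconds * RATE_PER_SECOND[tier], 2)


for tier in RATE_PER_SECOND:
    print(f"{tier}: ${clip_cost(MAX_CLIP_SECONDS, tier):.2f} per 8-second clip")
```

At these rates a full-length 8-second clip works out to $3.20 on the standard model and $1.20 on Fast, a useful baseline when weighing API usage against the flat subscription plans.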

Pro tip: For image-to-video work, provide a detailed reference image rather than relying on a text description of the subject. Veo 3 respects the visual specifics of your uploaded image more reliably when the image is clear and well-lit. Combine with a precise motion prompt for the most controlled results.

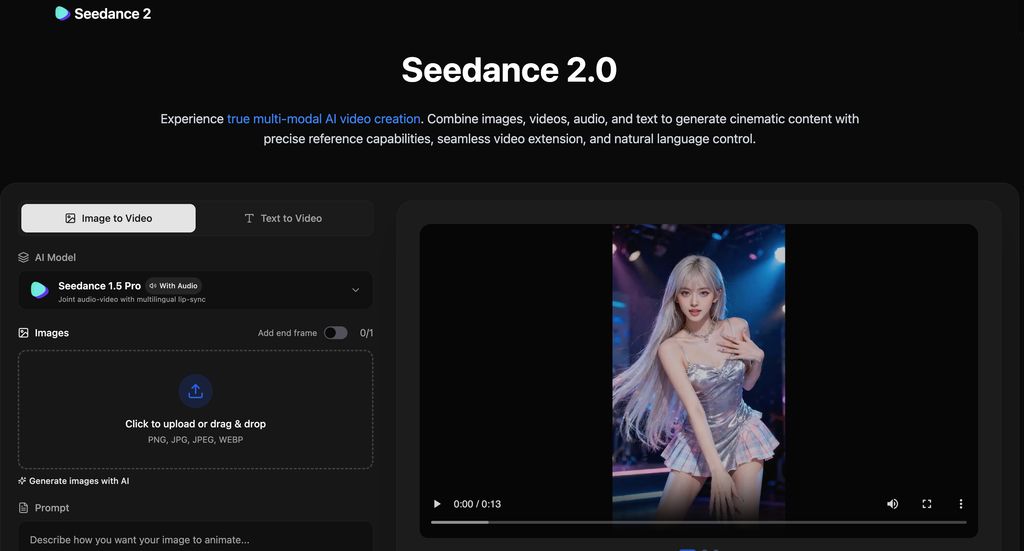

7. Seedance 2.0 — Best Budget-Conscious Native Audio Image-to-Video

Seedance 2.0 is ByteDance's multimodal video generation model, released February 2026. For image-to-video workflows, it accepts up to 12 reference inputs simultaneously — text, images, audio, and video in any combination — and generates video with natively synchronized audio as a single output. This is the same capability as Veo 3, at roughly half the price.

The key differentiator over Veo 3 is cost and input flexibility. At approximately $9.60/mo for the Dreamina Basic subscription versus $19.99/mo for Google AI Pro, Seedance delivers native audio image-to-video at a meaningfully more accessible price point. The 12-reference-input capability also enables workflows that Veo 3 cannot match — anchoring a character image, a motion reference video, an audio track, and an environment image simultaneously in a single generation.

International access is still rolling out as of March 2026. Seedance 2.0 is available via Dreamina internationally with free daily credits, and is beginning to integrate into CapCut in select markets. US availability is more limited than established tools, which is worth factoring into workflow planning.

Strengths

- Native audio-video joint generation at approximately half the cost of Veo 3

- Up to 12 reference inputs simultaneously — text, image, audio, and video combined

- Strong multi-shot character consistency across separate generations

- 90%+ generation success rate, reducing wasted credits on failed attempts

- Free daily credits available via Dreamina with no credit card required

Limitations

- International access still rolling out — US availability more limited than other tools

- Dreamina interface is less polished than Runway or Kling platforms

- Maximum 15 seconds per generation

- Payment primarily through ByteDance platforms — international payment flow less streamlined

Pricing

- Free: Daily credits via Dreamina (approx. 2-3 short videos per day), no credit card

- Dreamina Basic: ~$9.60/mo (69 RMB/mo) — commercial use, watermark removal

- International plans: From ~$18-41/mo via third-party platforms

- API: ~$0.14/sec via ByteDance Volcengine, from $0.022/sec via third-party providers

Pro tip: If you are outside China and want to evaluate Seedance 2.0 before committing to a plan, the Dreamina free tier via dreamina.capcut.com gives daily credits with no credit card required. Generate 2-3 test clips from your reference images to confirm the output quality matches your use case before subscribing.

8. PixVerse — Best for Stylized Motion and Keyframe-Controlled Effects

PixVerse approaches image-to-video differently from the other tools on this list. Its Swap feature allows keyframe-controlled replacement of faces, objects, and backgrounds within an animated clip — not just generating motion from a source image, but surgically swapping specific elements at specific moments. For creative social content where the visual effect or the transition is the point, this level of control is unique.

The platform generates video at up to 1080p and supports multiple styles in a single generation — producing the same image animated in cinematic, anime, and sketch styles simultaneously for comparison. For brand and marketing teams testing which visual treatment resonates, this eliminates the need to re-generate separately for each style direction.

The free tier gives 90 initial credits plus 60 daily, which is generous for evaluation. Output is watermarked on the free tier. The Standard plan at $10/mo removes watermarks and gives 1,200 monthly credits — a competitive entry point given the feature set.

Strengths

- Keyframe-controlled object, face, and background replacement within animated clips

- Multi-style generation from the same image for instant A/B comparison

- Strong for stylized and creative motion that prioritizes visual effect over photorealism

- API available via fal.ai for developer and production workflow integration

- Generous free tier: 90 initial + 60 daily credits

Limitations

- Maximum 5 seconds per generation on the Swap feature — costs double for longer clips

- Free plan outputs are watermarked

- Character consistency across multiple generations is limited

- Photorealism is weaker than Kling or Runway for human motion and detail

- No native audio generation

Pricing

- Free: 90 initial + 60 daily credits, watermarked

- Standard: $10/mo — 1,200 credits/mo, watermark-free, HD

- Pro: $30/mo — more credits, 1080p, priority queue

- Premium: $60/mo — highest credit allocation

Pro tip: Use PixVerse's multi-style generation to test visual direction before committing to a full production run. Generate the same source image in cinematic, anime, and standard styles at once, share the outputs with stakeholders for feedback, and then run full production in the confirmed style. This cuts approval cycles significantly.

How to Choose the Right Image-to-Video Tool

The most common mistake is choosing a tool based on brand recognition rather than use case. Here is the shortest path to the right decision.

- You want to animate your own real photo with no setup cost: Start with Magic Hour free plan. 400 credits, no watermark, no credit card.

- You need the most photorealistic output and can manage a credit system: Kling 3.0. Top benchmark scores, 2-minute output, daily free credits.

- You need the same character to look identical across multiple separate clips: Runway Gen 4. The reference image system is the strongest for cross-generation identity lock.

- You are animating product photography and need output longer than 16 seconds: Luma Dream Machine. Up to 5-minute sequencing, strong product motion quality.

- You want the cheapest path to social-ready clips with no watermark: Pika 2.5 free plan. 80 credits/mo, no watermark, rollover.

- Your output needs synchronized audio generated from the image: Veo 3 via Google AI Pro ($19.99/mo). Seedance 2.0 if you want native audio at lower cost (~$9.60/mo).

- You need stylized motion or keyframe-controlled element replacement: PixVerse. No other tool on this list offers keyframe-level swap control for social content.
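For teams that want to bake this routing into an internal checklist or script, the list above could be expressed as a simple lookup. This is a sketch only: the keys are labels invented for this example, and the values are the recommendations from this article.

```python
# Hypothetical lookup table: the keys are labels invented for this sketch,
# the values are the recommendations from the list above.
RECOMMENDATIONS = {
    "own_photo": "Magic Hour",
    "photorealism": "Kling 3.0",
    "character_consistency": "Runway Gen 4",
    "long_product_video": "Luma Dream Machine",
    "cheap_social": "Pika 2.5",
    "native_audio": "Veo 3 / Flow",
    "native_audio_budget": "Seedance 2.0",
    "stylized_swaps": "PixVerse",
}


def recommend(need: str) -> str:
    """Return the suggested tool for a need, or a fallback message."""
    return RECOMMENDATIONS.get(need, "No match: re-read the list above")


print(recommend("native_audio_budget"))
```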

Getting Better Results: What Makes a Good Source Image

The quality of your source image determines the quality ceiling of your output regardless of which tool you use. These principles apply across every platform on this list.

Resolution and sharpness. All models produce better motion from high-resolution, sharp input images. A blurry or low-resolution source will produce blurry, inconsistent motion. If your source image is low quality, upscale it first — Magic Hour's AI image upscaler or any dedicated upscaling tool improves output quality noticeably.

Clean backgrounds for isolated subjects. If you want motion applied primarily to your subject rather than the whole frame, a clean or simple background gives the model less to animate incorrectly. Complex backgrounds with competing elements frequently produce artifacts or unnatural motion in areas you did not intend to animate.

Subject size in frame. A subject that fills a significant portion of the frame is animated more accurately than one that occupies a small portion. If your source image has a small subject within a large scene, crop to increase the subject's relative size before generating.

Front-facing for human subjects. Human face animation is most accurate on front-facing or near-front-facing images. Side profiles and extreme angles produce noticeably worse identity preservation and motion quality across every tool on this list.
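The cropping advice under "Subject size in frame" can be sketched as a small helper. This is a rough illustration under stated assumptions: the subject's bounding box is supplied by you (for example, from eyeballing the image in an editor), the 25% margin is an arbitrary starting point rather than a value any of these tools recommends, and both function names are hypothetical. The returned tuple uses the (left, top, right, bottom) order that Pillow's `Image.crop` expects, though the sketch itself needs no libraries.

```python
# Sketch only: the 25% margin is an arbitrary default, and the subject box
# must be supplied by the caller. Boxes are (left, top, right, bottom) in pixels.
def crop_box_around_subject(image_size, subject_box, margin=0.25):
    """Return a crop box around the subject, padded and clamped to the image."""
    width, height = image_size
    left, top, right, bottom = subject_box
    pad_x = int((right - left) * margin)
    pad_y = int((bottom - top) * margin)
    return (
        max(0, left - pad_x),
        max(0, top - pad_y),
        min(width, right + pad_x),
        min(height, bottom + pad_y),
    )


def subject_share(subject_box, frame_box):
    """Return the fraction of the frame's area that the subject occupies."""
    def area(box):
        return (box[2] - box[0]) * (box[3] - box[1])
    return area(subject_box) / area(frame_box)


image_size = (4000, 3000)
subject = (1800, 1200, 2200, 1800)
crop = crop_box_around_subject(image_size, subject)

print("crop box:", crop)
print(f"share before: {subject_share(subject, (0, 0, *image_size)):.0%}")
print(f"share after:  {subject_share(subject, crop):.0%}")
```

In this example the subject goes from about 2% of the frame to about 44% after cropping, which gives the model far more subject detail to work with.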

Final Thoughts

Image-to-video generators have opened up video creation to basically anyone with a photo library. Whether you’re a marketer, designer, or just trying to make your Instagram posts pop, these tools help you move fast.

If you want quick, shareable videos without digging into complex software, Magic Hour is still my go-to.

Try Magic Hour Image-to-Video Free

Upload any photo and animate it into a video in minutes. 400 free credits, no watermark, no credit card. Trusted by teams at Meta, NBA, and L'Oreal.

Frequently Asked Questions About AI Image-to-Video Generation

What is the best free AI image-to-video generator with no watermark?

Magic Hour offers the strongest free plan for real-photo animation: 400 credits, no watermark, no credit card, credits that never expire. Pika 2.5 also offers a no-watermark free plan with 80 credits per month and rollover — the best free option for social content and effects-driven clips. Kling 3.0 gives 66 credits per day on its free tier but all outputs are watermarked.

Which image-to-video tool is best for product photography?

Luma Dream Machine produces the most reliable results for product and object animation, with smooth lighting transitions and natural depth-of-field movement. It also supports sequences up to 5 minutes, which no other tool on this list can match. For products that need synchronized ambient sound alongside the animation, Veo 3 is the better choice.

Can AI image-to-video tools generate audio as well?

Yes, but only a few tools on this list do it natively. Veo 3 / Google Flow generates synchronized dialogue, sound effects, and ambient audio alongside the video in a single pass, and Seedance 2.0 offers the same capability at roughly half the cost. Kling also offers native audio on its 2.6 model, at roughly 5 times the credit cost of silent video. The other tools on this list require separate audio post-production.

How long can AI-generated image-to-video clips be in 2026?

It varies significantly by tool. Pika and PixVerse cap at 5 seconds per generation. Runway caps at 16 seconds. Veo 3 caps at 8 seconds. Kling 3.0 supports up to 2 minutes. Seedance 2.0 supports up to 15 seconds. Luma Dream Machine is the only tool on this list that allows sequences up to 5 minutes through its clip extension feature.
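These caps translate directly into how many generations you would need to stitch together for a longer video. The sketch below uses only the maximum lengths quoted in this article; `clips_needed` is a hypothetical helper, and Luma's 300-second figure reflects its clip extension feature rather than a single render.

```python
import math

# Maximum seconds per generation, as quoted in this article.
# Luma's figure is reached through its clip extension feature.
MAX_SECONDS = {
    "Pika 2.5": 5,
    "PixVerse": 5,
    "Veo 3": 8,
    "Seedance 2.0": 15,
    "Runway Gen 4": 16,
    "Kling 3.0": 120,
    "Luma Dream Machine": 300,
}


def clips_needed(target_seconds: int, tool: str) -> int:
    """Return how many generations are needed to cover the target length."""
    return math.ceil(target_seconds / MAX_SECONDS[tool])


# How many generations does a 60-second video take on each tool?
for tool in MAX_SECONDS:
    print(f"{tool}: {clips_needed(60, tool)}")
```

For a 60-second piece, that is 12 generations on Pika or PixVerse, 8 on Veo 3, 4 on Seedance 2.0 or Runway, and 1 on Kling or Luma, before accounting for retries.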

Do I need to use multiple tools for image-to-video in 2026?

For most creators, one well-chosen tool handles the majority of use cases. Magic Hour covers animation of your own photos across multiple output types. Kling 3.0 covers cinematic prompt-to-video from a reference image. The main reason to use two tools is when one handles generation (Kling, Runway) and another handles transformation and workflow (Magic Hour). That combination covers the widest range of image-to-video scenarios without needing separate subscriptions for every edge case.

What happened to tools like Kaiber and Animoto?

Kaiber peaked in 2023 as an audio-reactive tool and is no longer a meaningful player in the 2026 image-to-video landscape. Animoto is a slideshow and template tool, not an AI image-to-video generator — it does not use generative AI motion. Neither belongs in a 2026 comparison of tools that generate AI-driven video from static images.
