Seedance 2.0 Reference Guide (2026): Image, Video, and Audio Tagging Patterns That Work

TL;DR

- Use structured reference tags in Seedance 2.0 to lock identity, motion, and style across shots.

- Combine image, video, and audio references to control continuity instead of relying on prompts alone.

- For production-ready workflows, many creators generate clips in Seedance and refine or extend them using tools like Magic Hour’s AI video workflows.

Why Reference Tagging Matters in Seedance 2.0

Seedance 2.0 is designed to generate video from structured prompts that include references. Instead of relying only on text prompts, creators can attach images, video clips, or audio to guide the model.

This is important for creators working on storytelling, short films, product ads, or social media clips. Without reference control, characters drift between shots, camera movement resets, and pacing becomes inconsistent. The result is often visually interesting but unusable for narrative sequences.

Reference tagging solves that problem. By attaching structured references to prompts, creators can maintain identity, reuse motion patterns, or synchronize visual events with audio cues.

In practice, many creators use Seedance alongside other tools. A common workflow generates base motion in Seedance and then extends or refines the result in a tool such as Magic Hour.

This guide focuses specifically on the tagging patterns that actually work in Seedance 2.0.

What Reference Tags Do in Seedance

Reference tags tell the model which elements of the prompt should remain stable across generations. In most workflows they control four major aspects of a video:

- Character identity

- Visual style

- Camera motion

- Audio synchronization

Instead of hoping the model understands the prompt, creators use the tags as anchors that guide the generation process.

For example, a reference image can lock the appearance of a character across multiple shots. A reference video can copy camera motion or pacing. An audio track can influence the timing of cuts or movements.

When used together, these tags allow creators to produce short sequences that feel more like edited footage than disconnected clips.

Reference Workflow Overview

Reference workflows in Seedance 2.0 are built around a simple idea: instead of relying entirely on descriptive prompts, you guide the model with structured visual and audio anchors. These anchors help the system understand exactly what should remain consistent across frames or scenes.

Many beginners treat AI video prompting like creative writing. They describe the scene in detail and hope the model interprets it correctly. In practice, this rarely works for sequences longer than a few seconds. Characters may change appearance, the camera may reset between shots, and the pacing can drift away from what the creator intended.

Reference workflows solve that by splitting the generation process into several controlled stages. Each stage introduces a different type of reference that stabilizes part of the output. Over time, creators develop repeatable patterns that combine image references, motion references, and audio cues.

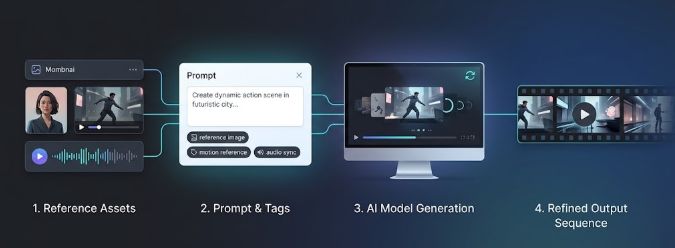

A typical Seedance reference workflow follows four stages.

Stage 1: Prepare Reference Assets

The first step is collecting the assets that will guide the model. These references act as constraints, telling the model what it should preserve during generation.

Most creators prepare three main types of references:

- Image references. These are used to control identity, style, or composition. For example, a portrait of a character can ensure the face stays consistent across multiple clips.

- Video references. Short motion clips can guide camera movement or subject motion. A dolly shot, a walking animation, or a dance clip can be used as the motion template.

- Audio references. Audio tracks can influence timing and rhythm. Beat-driven clips, dialogue recordings, or sound effects can help synchronize visual events.

The quality of these assets matters more than most people expect. High-resolution images with clear lighting usually produce more stable results. Video references should be short and clean, without sudden camera jumps or complex edits.
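Before tagging, it can help to sort the prepared files into the three reference types described above. The following is a minimal sketch, not part of Seedance: the extension-to-type mapping and the `group_assets` helper are assumptions for illustration, so adjust them to the formats your setup actually accepts.

```python
from pathlib import Path

# Assumed mapping from file extension to reference type (illustrative only).
KIND_BY_EXTENSION = {
    ".png": "image", ".jpg": "image", ".jpeg": "image",
    ".mp4": "video", ".mov": "video",
    ".wav": "audio", ".mp3": "audio",
}


def group_assets(paths):
    """Group file names by reference type; unrecognized files go to 'unknown'."""
    groups = {"image": [], "video": [], "audio": [], "unknown": []}
    for path in paths:
        kind = KIND_BY_EXTENSION.get(Path(path).suffix.lower(), "unknown")
        groups[kind].append(path)
    return groups


groups = group_assets(
    ["character.png", "dolly_pan.mp4", "beat_track.wav", "notes.txt"]
)
for kind, files in groups.items():
    print(kind, files)
```

Anything that lands in `unknown` is worth checking before it is attached to a prompt.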

When the references are strong, the prompt itself can remain relatively simple.

Stage 2: Attach Structured Tags to the Prompt

Once the references are prepared, they need to be tagged correctly inside the prompt.

Seedance uses reference tags to define how each asset should influence the generated video. Instead of just uploading files, the creator explicitly tells the system how to use them.

For example, an image reference might be tagged to lock character identity. A video clip might be tagged to copy camera motion. An audio track might trigger beat synchronization.

A simplified prompt structure might look like this:

[reference_image: character.png]

[identity_lock]

cinematic close-up of the same character standing in a rainy street

These tags act like instructions. They tell the model which elements are flexible and which elements must stay stable.
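If you assemble many prompts, the structure above can be generated rather than typed by hand. The sketch below renders reference lines, then tag lines, then the scene description, using the bracket syntax shown in this guide. The `Reference` class and `build_prompt` function are hypothetical helpers, and the tag syntax is reproduced from the example above, not taken from an official Seedance specification.

```python
from dataclasses import dataclass

ALLOWED_KINDS = {"image", "video", "audio"}


@dataclass(frozen=True)
class Reference:
    kind: str  # "image", "video", or "audio"
    path: str  # file name of the attached asset


def build_prompt(references, tags, description):
    """Render reference lines, then tag lines, then the scene description."""
    lines = []
    for ref in references:
        if ref.kind not in ALLOWED_KINDS:
            raise ValueError(f"unsupported reference kind: {ref.kind}")
        lines.append(f"[reference_{ref.kind}: {ref.path}]")
    lines.extend(f"[{tag}]" for tag in tags)
    lines.append(description)
    # Elements are separated by blank lines, as in the examples in this guide.
    return "\n\n".join(lines)


prompt = build_prompt(
    [Reference("image", "character.png")],
    ["identity_lock"],
    "cinematic close-up of the same character standing in a rainy street",
)
print(prompt)
```

Keeping references, tags, and description as separate arguments makes it easy to change one of them between generations while holding the others fixed.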

This is one of the biggest differences between experimental prompting and structured AI video workflows.

Stage 3: Generate the Base Clip

Once the references and tags are in place, the model generates a short video clip based on the prompt.

This base clip usually serves as the foundation for the rest of the workflow. The goal at this stage is not perfection. Instead, creators are looking for a clip that captures the correct identity, motion style, and pacing.

Most clips generated in this stage are between a few seconds and roughly ten seconds long. That length is often enough to establish a shot that can later be extended or edited.

Experienced creators typically generate several variations of the same prompt. They then choose the clip that best preserves the references and discard the others.

This iteration step is important because AI video models can produce slightly different interpretations each time they run.
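Choosing among variations is easier to repeat if each take is rated on the same criteria. The sketch below assumes a reviewer scores every candidate from 1 to 5 on identity, motion, and pacing, then picks the highest weighted total. The `Candidate` class, the weights, and the ratings are all illustrative assumptions; Seedance does not produce these scores.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    identity: int  # reviewer rating, 1-5: how well the reference identity held
    motion: int    # reviewer rating, 1-5: how well the motion matches intent
    pacing: int    # reviewer rating, 1-5: how well the timing matches intent


def pick_best(candidates, weights=(0.5, 0.3, 0.2)):
    """Return the candidate with the highest weighted rating."""
    w_identity, w_motion, w_pacing = weights

    def score(c):
        return w_identity * c.identity + w_motion * c.motion + w_pacing * c.pacing

    return max(candidates, key=score)


takes = [
    Candidate("take_a", identity=5, motion=3, pacing=3),
    Candidate("take_b", identity=3, motion=5, pacing=4),
    Candidate("take_c", identity=4, motion=3, pacing=3),
]
best = pick_best(takes)
print(best.name)
```

The default weights favor identity because a clip with a drifting character is usually the hardest to salvage later; change them if motion or pacing matters more for your shot.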

Stage 4: Refine or Extend the Video

After the base clip is generated, the next step is refining or extending the footage.

Seedance can generate short clips effectively, but longer sequences often require additional tools. Many creators export the generated clip and continue editing it elsewhere.

This is where tools like Magic Hour are commonly used. For example, a creator might:

- Generate a character-driven shot in Seedance

- Export the clip

- Extend the scene with image-to-video or video transformation workflows

These hybrid pipelines allow creators to combine the strengths of multiple systems. Seedance handles reference-driven generation, while other platforms help scale the footage into longer or more polished sequences.

Some workflows also introduce additional AI models such as Kling 3.0 for specific visual styles or motion patterns.

The result is a modular production pipeline where each tool focuses on the part of the process it handles best.

Why This Workflow Works

Reference workflows work because they reduce ambiguity.

AI models are very good at generating visually interesting frames, but they struggle with consistency across time. By providing explicit references, the creator limits how much the model can reinterpret the scene.

Instead of guessing what the character should look like, the model follows the identity reference. Instead of inventing a new camera movement, it follows the motion reference.

The result is a clip that feels much closer to a real camera shot than to a random sequence of generated frames.

Once creators understand this workflow, prompting becomes less about writing elaborate descriptions and more about designing the right reference structure.

That shift is what turns Seedance from a novelty tool into something that can support real creative projects.

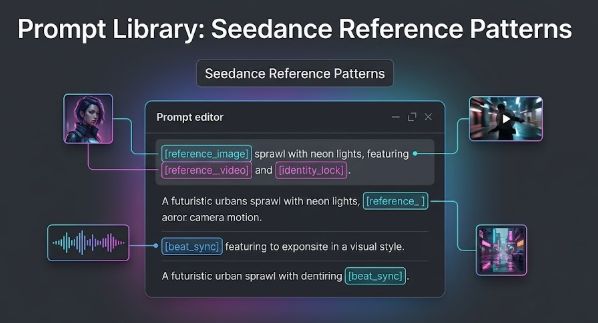

Prompt Library: Seedance Reference Patterns

Below is a practical library of reference patterns used by creators. Each pattern shows a common structure and explains when to use it.

The goal is not to copy these prompts exactly but to understand how reference tags influence the output.
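Most patterns in this library pair a tag with one type of reference asset, and that pairing can be written down as a lookup table and checked before generating. The table below is derived only from the examples in this guide; the `check_pairing` helper is hypothetical, and the tag names are not verified against official Seedance documentation.

```python
# Tag -> reference types it is paired with in this guide's examples.
TAG_REFERENCE_KINDS = {
    "first_frame_lock": {"image"},
    "identity_lock": {"image"},
    "camera_copy": {"video"},
    "motion_transfer": {"video"},
    "style_transfer": {"image", "video"},
    "composition_lock": {"image"},
    "beat_sync": {"audio"},
    "lip_sync": {"audio"},
    "lighting_copy": {"image"},
    "perspective_lock": {"image"},
    "object_lock": {"image"},
    "sequence_extend": {"video"},
}


def check_pairing(tag, reference_kind):
    """Return None if the pairing matches the table, else a short message."""
    allowed = TAG_REFERENCE_KINDS.get(tag)
    if allowed is None:
        return f"unknown tag: {tag}"
    if reference_kind not in allowed:
        expected = " or ".join(sorted(allowed))
        return f"{tag} expects {expected}, got {reference_kind}"
    return None


print(check_pairing("identity_lock", "image"))
print(check_pairing("beat_sync", "image"))
```

A check like this catches simple mistakes, such as attaching an image to a beat-sync prompt, before a generation is spent on it.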

Pattern 1: First Frame Lock

Use this when you want the first frame of the video to match a reference image exactly.

Prompt structure example:

[reference_image: character_portrait.png]

[first_frame_lock]

cinematic close-up of the same character looking toward the camera, soft studio lighting, slow breathing motion

Explanation:

The reference image becomes the visual anchor. The model starts the clip with that frame and then animates it. This pattern is useful for talking-head clips, portrait animations, or product hero shots.

Pattern 2: Identity Lock

Identity lock ensures a character remains consistent across different scenes.

Prompt structure example:

[reference_image: hero_character.png]

[identity_lock]

the character walks through a neon city street at night, reflections on wet pavement

Explanation:

Without identity locking, the character’s face or clothing often changes between frames. This tag tells the model to prioritize identity consistency.

Pattern 3: Multi-Shot Character Consistency

This pattern works well when generating multiple clips for the same scene.

Prompt structure example:

[reference_image: character.png]

[identity_lock]

shot 1: character standing on rooftop looking at skyline

shot 2: character turning toward camera

shot 3: character walking forward slowly

Explanation:

Instead of generating separate prompts, the shots are described together while sharing the same reference. This increases visual continuity.
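A shot list like the one above can be rendered from a plain list, which keeps the numbering consistent when shots are added or reordered. This is a minimal sketch using the tag syntax from this pattern; `build_multi_shot_prompt` is a hypothetical helper, not a Seedance function.

```python
def build_multi_shot_prompt(reference_image, shots):
    """Render one identity-locked prompt that describes several numbered shots."""
    if not shots:
        raise ValueError("at least one shot description is required")
    lines = [f"[reference_image: {reference_image}]", "[identity_lock]"]
    lines.extend(
        f"shot {number}: {text}" for number, text in enumerate(shots, start=1)
    )
    return "\n\n".join(lines)


multi_shot = build_multi_shot_prompt(
    "character.png",
    [
        "character standing on rooftop looking at skyline",
        "character turning toward camera",
        "character walking forward slowly",
    ],
)
print(multi_shot)
```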

Pattern 4: Camera Motion Copy

Reference video clips can copy camera movement.

Prompt structure example:

[reference_video: dolly_pan.mp4]

[camera_copy]

a futuristic laboratory interior with glowing screens

Explanation:

The reference clip defines how the camera moves. The generated scene replaces the content but keeps the motion.

Pattern 5: Motion Transfer

This pattern transfers subject motion from one video to another.

Prompt structure example:

[reference_video: dancer_motion.mp4]

[motion_transfer]

robot performing the same dance on a cyberpunk stage

Explanation:

Instead of copying the camera movement, the model copies the subject motion.

Pattern 6: Style Transfer

Style transfer applies the visual aesthetic of a reference image or video.

Prompt structure example:

[reference_image: watercolor_style.png]

[style_transfer]

city skyline at sunrise

Explanation:

This pattern works well for turning realistic scenes into animated or illustrated styles.

Pattern 7: Scene Composition Lock

Use this when composition matters more than motion.

Prompt structure example:

[reference_image: composition_layout.png]

[composition_lock]

dramatic cinematic lighting, foggy atmosphere

Explanation:

The layout of the scene stays similar to the reference while the lighting or environment changes.

Pattern 8: Beat Sync with Audio

Audio references can influence timing.

Prompt structure example:

[reference_audio: beat_track.wav]

[beat_sync]

dancer performing movements synchronized with the beat

Explanation:

Visual events align with the rhythm of the audio.

Pattern 9: Dialogue Lip Sync

Some creators experiment with audio-driven motion.

Prompt structure example:

[reference_audio: dialogue.wav]

[lip_sync]

character speaking directly to the camera

Explanation:

The model attempts to align mouth movement with speech.

Pattern 10: Lighting Reference

Lighting style can also be anchored.

Prompt structure example:

[reference_image: lighting_reference.png]

[lighting_copy]

portrait of a musician holding a guitar

Explanation:

The scene adopts the lighting direction and contrast of the reference.

Pattern 11: Environment Reference

Sometimes creators want to replicate a location style.

Prompt structure example:

[reference_image: forest_environment.png]

a fantasy creature walking through the same forest

Explanation:

The model adapts the environment rather than copying the exact frame.

Pattern 12: Camera Perspective Lock

This pattern ensures the camera perspective remains stable.

Prompt structure example:

[reference_image: camera_angle.png]

[perspective_lock]

dramatic character reveal

Explanation:

This pattern is useful when continuity between shots is critical.

Pattern 13: Style + Motion Combination

Multiple references can work together.

Prompt structure example:

[reference_image: anime_style.png]

[reference_video: camera_pan.mp4]

anime-style city with cinematic camera movement

Explanation:

The image controls style while the video controls motion.

Pattern 14: Object Reference Lock

Products or props can also be stabilized.

Prompt structure example:

[reference_image: product.png]

[object_lock]

product rotating slowly on a glossy pedestal

Explanation:

This pattern is useful for product marketing videos.

Pattern 15: Sequence Expansion

Creators often generate a base clip and then extend it.

Prompt structure example:

[reference_video: previous_clip.mp4]

[sequence_extend]

continue the scene with the character walking forward

Explanation:

The model attempts to continue the previous scene.
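When a scene is extended several times, each step uses the previous output as its reference video. The sketch below plans that chain as data, so the prompts can be reviewed before anything is generated. The `plan_extensions` helper and the output file naming are assumptions for illustration; the code only builds prompt text and does not call Seedance.

```python
def plan_extensions(base_clip, continuations, name_template="extended_{:02d}.mp4"):
    """Plan a chain of extension steps, each referencing the previous clip."""
    steps = []
    previous = base_clip
    for number, description in enumerate(continuations, start=1):
        output = name_template.format(number)  # hypothetical output file name
        prompt = "\n\n".join(
            [f"[reference_video: {previous}]", "[sequence_extend]", description]
        )
        steps.append({"input": previous, "output": output, "prompt": prompt})
        previous = output
    return steps


steps = plan_extensions(
    "previous_clip.mp4",
    [
        "continue the scene with the character walking forward",
        "the character stops and looks up at the sky",
    ],
)
for step in steps:
    print(step["input"], "->", step["output"])
```

Because each step depends on the one before it, drift can accumulate across the chain, so it is worth reviewing every intermediate clip before extending again.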

When to Use Alternatives

While Seedance 2.0 is powerful for reference-driven prompts, it is not the best tool for every stage of an AI video workflow. Most creators combine several systems because each model tends to perform better in a specific part of the production process.

Seedance is particularly useful when you need structured reference control. It works well for locking character identity, copying camera motion, or synchronizing visuals with audio. This makes it a strong choice during the early phase of a project when you are testing references and building a consistent visual setup.

However, once the base clip is created, many creators switch to alternative tools for speed, style exploration, or longer sequences.

When You Need Faster Iteration or Different Styles

Reference-heavy prompts can slow experimentation because each generation depends on multiple inputs. If the goal is to quickly explore different visual directions or cinematic motion, some creators use alternative models.

For example, Kling 3.0 is often used for stylized scenes or dynamic camera movement without needing a complex reference structure. This makes it useful for rapid experimentation before committing to a specific workflow.

When to Use Magic Hour

Another common step is exporting the Seedance clip and refining it in Magic Hour.

Creators often generate the initial reference-driven shot in Seedance and then use Magic Hour to extend or transform that footage into a longer sequence. This approach works well because it separates the workflow into stages: Seedance handles reference control, while Magic Hour helps scale the clip into a more complete video.

Magic Hour supports several AI video pipelines that fit naturally into this process, including text-to-video, image-to-video, and video-to-video generation.

For many creators, this hybrid workflow makes AI video production more flexible and easier to manage.

How to Avoid Common Seedance Reference Problems

Several issues appear frequently when reference tags are used incorrectly.

Character Drift

Character drift happens when a character’s face, clothing, or body proportions change during the clip or between shots. This usually occurs when the prompt relies too heavily on text descriptions without a strong identity reference.

To avoid this, always provide a clear reference image and use an identity lock tag if the workflow supports it. The reference image should show the character clearly, preferably in neutral lighting with minimal motion blur. If the image is low resolution or partially obscured, the model may reinterpret details and generate inconsistent frames.

Another useful approach is to reuse the same reference image across multiple prompts instead of generating new references for each shot. This helps maintain continuity throughout the sequence.

Motion Distortion

Motion transfer can sometimes produce unnatural movement. For example, limbs may stretch, camera motion may appear unstable, or the subject may move in unexpected ways.

This usually happens when the reference video contains complex motion such as fast cuts, rapid camera changes, or multiple moving subjects. The model struggles to translate these patterns into a new scene.

The simplest fix is to choose cleaner motion references. Short clips with a single camera movement, such as a slow pan or a simple walk cycle, usually transfer much more reliably than complicated action footage.

Audio Desynchronization

When using audio references for beat sync or dialogue timing, the generated motion does not always match the rhythm perfectly. This is especially noticeable with complex music tracks or layered audio.

To improve synchronization, use audio clips with clear and predictable beats. Simple drum loops, claps, or spoken dialogue tend to work better than full music tracks with many instruments. Shorter audio segments also make it easier for the model to align visual events with the sound.
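The preference for clear, predictable beats can be made concrete with a little arithmetic. Assuming a constant tempo, beats fall every 60 / BPM seconds, so listing those timestamps gives you a reference for checking where visual events land in the generated clip. The `beat_times` function and its parameters are illustrative and not part of Seedance.

```python
def beat_times(bpm, duration_s, offset_s=0.0):
    """List beat timestamps in seconds for a constant-tempo track."""
    if bpm <= 0:
        raise ValueError("bpm must be positive")
    interval = 60.0 / bpm  # seconds between consecutive beats
    times = []
    beat = 0
    while offset_s + beat * interval < duration_s:
        times.append(round(offset_s + beat * interval, 3))
        beat += 1
    return times


# A 3-second clip at 120 BPM has a beat every 0.5 seconds.
print(beat_times(120, 3))  # [0.0, 0.5, 1.0, 1.5, 2.0, 2.5]
```

Comparing these timestamps against the frames where cuts or movements occur is a quick way to judge whether a take is close enough or should be regenerated.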

Reference Conflicts

One of the most common mistakes is using too many references in the same prompt. For example, combining multiple image references, a motion clip, and an audio track can confuse the model about which reference should take priority.

When this happens, the generated video may partially follow several references instead of fully committing to one. The result often looks inconsistent.

A better strategy is to start with one reference type, generate the base clip, and then add additional references in later iterations. This step-by-step approach makes it easier to identify which reference is causing problems.
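The step-by-step approach can be sketched as a series of prompts in which each iteration adds exactly one reference to the previous set. The `incremental_prompts` helper is hypothetical, and the file names are placeholders taken from the earlier examples in this guide; tags are left out to keep the sketch short.

```python
def incremental_prompts(references, description):
    """Build one prompt per iteration, each adding one more reference.

    `references` is an ordered list of (kind, path) pairs, strongest first.
    """
    prompts = []
    for count in range(1, len(references) + 1):
        lines = [f"[reference_{kind}: {path}]" for kind, path in references[:count]]
        lines.append(description)
        prompts.append("\n\n".join(lines))
    return prompts


prompts = incremental_prompts(
    [
        ("image", "character.png"),
        ("video", "camera_pan.mp4"),
        ("audio", "beat_track.wav"),
    ],
    "the character walks through a neon city street at night",
)
for number, text in enumerate(prompts, start=1):
    print(f"iteration {number}: {text.count('[reference_')} reference(s)")
```

If the output degrades at a particular iteration, the reference added in that iteration is the first one to investigate.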

Start Simple, Then Add Control

Most stable Seedance workflows begin with a minimal prompt and one strong reference. Once the base motion and identity look correct, additional tags can be introduced to refine the result.

This incremental approach reduces conflicts between references and makes the generation process easier to troubleshoot. Over time, creators develop a small set of reliable reference patterns that they reuse across projects instead of rebuilding prompts from scratch.

Final Thoughts

Reference tagging is the core skill that separates experimental AI video from production-ready workflows.

Creators who use Seedance effectively treat prompts more like structured instructions than creative writing. The references define the constraints, and the prompt describes the scene.

Once you understand how to combine identity locks, camera motion references, and audio synchronization, it becomes possible to produce short sequences that feel consistent.

Many production workflows then combine these reference-driven clips with editing and extension pipelines in tools like Magic Hour to finish the video.

FAQs

What is a Seedance 2.0 reference guide?

A Seedance 2.0 reference guide explains how to use images, videos, and audio references to control AI video generation. Instead of relying only on text prompts, creators attach reference assets to stabilize identity, motion, and visual style.

What are Seedance reference tags?

Reference tags are instructions that tell Seedance 2.0 how to use the assets attached to a prompt. For example, an image can lock character identity while a video clip can guide motion or camera movement.

Can Seedance keep the same character across multiple scenes?

Yes, but it usually requires a strong image reference. Reusing the same reference image across prompts helps maintain character consistency between shots.

What types of references work best in Seedance?

High-quality assets with simple visual structure work best. Clear portrait images help with identity, short motion clips work well for movement, and audio with clear beats improves synchronization.

Is Seedance enough to produce full AI videos?

Seedance is often used to generate short clips rather than full-length videos. Many creators extend or refine those clips using other tools like Magic Hour.

What is the difference between Seedance and Kling 3.0?

Seedance 2.0 focuses on reference-driven prompting and structured tagging. Kling 3.0 is often used for cinematic motion or faster visual experimentation.

Why do Seedance prompts sometimes produce inconsistent results?

Inconsistency usually happens when references conflict or when the input assets are unclear. Starting with one strong reference and adding control gradually often produces more stable results.