How to Use Seedance 2.0 (2026): A Practical Guide to Reference Images, Video, and Audio Without Drift

TL;DR

- Use structured references with the @ mention system to anchor identity, style, and motion clearly from the start.

- Lock priorities (identity first, style second) and iterate with short clips before expanding into full scenes.

- Seedance 2.0 offers stronger multi-scene consistency than most tools, but it requires deliberate setup and precise prompting.

Intro

If you’re searching for how to use Seedance 2.0 properly, you’re probably trying to solve one problem: keeping characters, motion, and style consistent across AI-generated video.

Seedance 2.0 is not a simple text-to-video generator. It is a structured multimodal system. You can attach reference images, reference videos, and reference audio, then explicitly tell the model how each asset should influence the output. Used correctly, this reduces character drift and improves continuity. Used casually, it produces unpredictable results.

This guide explains:

- What Seedance 2.0 actually is

- What the @ mention structure means (in plain English)

- How to structure references step by step

- How to lock identity and style

- How to iterate without breaking continuity

- Where it sits compared to Kling 3.0, Veo 3, Sora, Runway, and Magic Hour

If you’re building serialized content, branded video systems, or narrative shorts, this tutorial is written for you.

What Is Seedance 2.0?

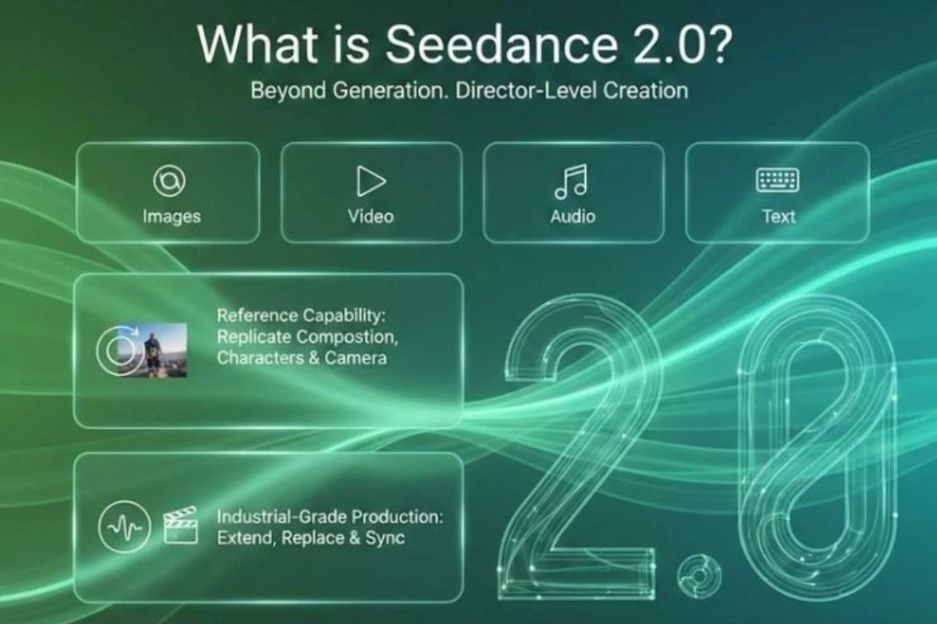

Seedance 2.0 is a multimodal AI video generation model built around explicit reference control. Unlike basic prompt-only systems, it allows you to upload images, video clips, and audio files, then specify exactly how each one should influence the generated output.

At its core, Seedance 2.0 is a conditioning engine. Your text prompt defines the scene, but your references anchor identity, motion, camera behavior, and rhythm. The system blends these inputs into a coherent video. The key word is blends. It does not automatically “understand” which attribute matters most unless you tell it.

What makes Seedance 2.0 different from earlier models is its structured reference binding. Instead of loosely attaching an image and hoping the model follows it, you explicitly call references inside your prompt using an @ mention syntax. This gives you more control, but also introduces responsibility.

Compared to cinematic-first models like Kling 3.0 or Veo 3, Seedance is more modular. It is less about one-shot spectacle and more about compositional assembly. Compared to creator-focused platforms like Magic Hour, it offers deeper manual control but requires more setup. Compared to Runway, it emphasizes generation control more than post-production refinement.

In short, Seedance 2.0 is best understood as a system builder’s tool rather than a plug-and-play generator.

Pros

- Explicit multimodal reference control

- Strong character preservation when references are clean

- Can replicate camera motion from video references

- Supports audio-conditioned generation and rhythm alignment

- Suitable for serialized storytelling

Cons

- Requires structured prompt discipline

- Drift increases with conflicting references

- Slower iteration compared to lightweight platforms

- Learning curve for beginners

- Not optimized for casual one-click creation

Deep Evaluation

Seedance 2.0’s greatest strength is its ability to separate concerns inside a generation pipeline. You can assign facial identity to one image, motion behavior to a short video clip, and pacing to an audio track. This separation allows you to build repeatable workflows. In production contexts, that is powerful. It enables you to treat AI generation as a system rather than a single prompt experiment.

However, this flexibility also creates fragility. If you upload five inconsistent character images with different lighting conditions and hair variations, the model will attempt to average them. That averaging often leads to subtle face morphing across frames. The model does not automatically decide which reference is the “true” identity anchor unless you specify it clearly.

Compared with Kling 3.0, Seedance requires more explicit control but offers stronger identity anchoring over multiple scenes. Kling often delivers smoother cinematic output on the first attempt, but it does not always expose the same level of granular reference isolation. If you are producing episodic content with the same character, Seedance provides better long-term structural stability.

Against Veo 3 and Sora, Seedance feels more tactical. Those models focus heavily on cinematic realism and narrative scale. Seedance focuses on compositional modularity. It is less about generating a sweeping fantasy world in one prompt and more about carefully constructing scenes with predictable reference behavior.

Compared to Runway, Seedance excels at initial multimodal conditioning. Runway, by contrast, is often stronger in editing, scene manipulation, and post-generation refinement. Many creators use Seedance to generate base footage and then move to Runway for finishing touches.

Finally, compared to Magic Hour, Seedance trades simplicity for control. Magic Hour emphasizes guided workflows for text-to-video, image-to-video, and video-to-video creation. It reduces friction for marketers and content teams. Seedance, in contrast, gives you more direct control over how references influence output, but requires careful planning. If your priority is speed and accessibility, simpler platforms may be more practical. If your priority is modular conditioning, Seedance has advantages.

This distinction is critical: Seedance is not “better” universally. It is better for structured workflows.

Price

Pricing is typically credit-based, with higher tiers unlocking longer durations and higher resolutions. Because plans may vary by access level and region, always verify the latest pricing directly on the official platform before committing to production volume.

Best For

- Serialized character storytelling

- Branded video systems

- Indie filmmakers experimenting with AI actors

- Creators who value control over speed

What Is the @ Mention Structure?

Before using Seedance 2.0 effectively, you must understand the @ mention structure.

The @ mention structure is a way of referencing uploaded assets inside your text prompt. After uploading images, videos, or audio files, each file receives an identifier such as @Image1, @Video1, or @Audio1. You then call that identifier in your prompt to specify how the file should influence generation.

Think of it as assigning roles to each asset.

Instead of writing:

“Use the image I uploaded.”

You write:

“Reference @Image1 for facial structure and skin tone only.”

This changes everything. The model is no longer guessing. It is receiving explicit instructions.

There are typically two main modes:

- First/Last Frame Mode: used when you want a specific image as the starting or ending frame.

- Universal Reference Mode: used when combining multiple assets (images + videos + audio + text).

Example basic usage:

@Image1 as the first frame.

Reference @Video1 for camera movement.

Use @Audio1 for background music pacing.

The key principle is specificity. Always state what attribute should be extracted from which file. Motion? Identity? Camera? Rhythm? Lighting? If you do not specify, the model may merge attributes unpredictably.
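The "one asset, one attribute" rule can be sketched as a small prompt-building helper. This is only an illustration that assembles text: `RoleAssignment` and `build_reference_lines` are hypothetical names, not part of any Seedance interface, and the "Reference ... for ... only." wording simply mirrors the examples above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RoleAssignment:
    """One uploaded asset and the single attribute it should contribute."""
    tag: str        # identifier shown after upload, e.g. "@Image1"
    attribute: str  # what to extract, e.g. "camera movement"


def build_reference_lines(assignments: list[RoleAssignment]) -> str:
    """Render one explicit instruction per asset, rejecting vague entries."""
    lines = []
    for a in assignments:
        if not a.tag.startswith("@"):
            raise ValueError(f"tag must start with '@': {a.tag!r}")
        if not a.attribute.strip():
            raise ValueError(f"{a.tag} has no attribute assigned")
        lines.append(f"Reference {a.tag} for {a.attribute} only.")
    return "\n".join(lines)


# Hypothetical usage with the identifiers from the examples above.
prompt_refs = build_reference_lines([
    RoleAssignment("@Image1", "facial structure and skin tone"),
    RoleAssignment("@Video1", "camera movement"),
    RoleAssignment("@Audio1", "background music pacing"),
])
print(prompt_refs)
```

Because the helper refuses an asset with no stated attribute, a vague reference gets caught before it reaches the prompt.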

Understanding this structure is the foundation of using Seedance 2.0 correctly.

Step-by-Step Workflow: How to Use Seedance 2.0 Without Drift

Below is a detailed Seedance workflow designed for maximum clarity and consistency.

Step 1: Choose Strong References (Do Not Skip This)

The most common mistake beginners make is uploading weak or inconsistent references. Seedance can only anchor what you give it. If your reference image is low resolution, heavily filtered, or inconsistent in lighting, the generated video will inherit those weaknesses.

When selecting reference images:

Choose high-resolution images with clear facial details. Avoid extreme shadows that hide key identity markers like jawline, nose shape, and eye spacing. Make sure the clothing and hairstyle match your intended final look. If you plan to generate multiple scenes, keep wardrobe and lighting consistent from the start.

For reference video:

Select clips that demonstrate clean camera motion. If you want cinematic stability, avoid handheld chaos. If you want a slow push-in shot, provide a clip that clearly demonstrates that movement. The model will abstract motion patterns from the video.

For audio references:

If using voice or rhythm, choose clean audio. Background noise can distort tone interpretation. If you’re using audio for pacing rather than voice, pick clips with clear tempo and minimal distortion.

Strong references reduce iteration cycles dramatically.

Step 2: Tag References Properly

After uploading references, give each one a clear, descriptive tag to use with the @ mention structure. Default identifiers like @image1 and vague labels like @ref say nothing about an asset's role. Where you can name references yourself, use semantic names:

@lead_character

@urban_night_style

@slow_dolly_motion

@dramatic_voice

These tags should reflect functional roles. Think of them as modular building blocks.

Once tagged, you must refer to them clearly in your prompt. Do not assume the system will infer connections automatically. Always explicitly state what should be preserved:

“Maintain facial identity from @lead_character.”

“Use lighting contrast and color grading from @urban_night_style.”

“Follow camera motion arc from @slow_dolly_motion.”

Clarity reduces drift.
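A quick local check before submitting a prompt can catch misspelled or forgotten tags. The sketch below is an assumption-heavy illustration: `check_tags` is a hypothetical helper, and the tag pattern ("@" followed by letters, digits, or underscores) is a guess at the syntax rather than a documented rule.

```python
import re

# Assumed tag syntax: "@" followed by letters, digits, or underscores.
TAG_PATTERN = re.compile(r"@[A-Za-z_][A-Za-z0-9_]*")


def check_tags(prompt: str, uploaded_tags: set[str]) -> dict[str, set[str]]:
    """Compare tags mentioned in the prompt with the tags actually uploaded."""
    mentioned = set(TAG_PATTERN.findall(prompt))
    return {
        "undefined": mentioned - uploaded_tags,  # used in prompt, never uploaded
        "unused": uploaded_tags - mentioned,     # uploaded, never given a role
    }


uploaded = {"@lead_character", "@urban_night_style", "@slow_dolly_motion"}
prompt = (
    "Maintain facial identity from @lead_character. "
    "Use lighting contrast and color grading from @urban_night_style. "
    "Follow camera motion arc from @slow_doly_motion."  # deliberate typo
)
report = check_tags(prompt, uploaded)
print(report)
```

Here the misspelled tag shows up as undefined and the intended one as unused, which is exactly the kind of silent mismatch that otherwise leaves a reference without a role.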

Step 3: Lock Identity and Style

In Seedance 2.0, identity locking is about prioritization. The model may blend references unless you explicitly rank importance.

A strong identity-locking prompt structure might look like this:

“Primary identity anchor: @lead_character. Do not alter facial proportions, eye shape, or hairstyle. Maintain wardrobe consistency. Secondary style reference: @urban_night_style for lighting and color grading only.”

By separating primary and secondary anchors, you reduce cross-contamination between style and identity.

You should also reinforce constraints:

“No face distortion.”

“No wardrobe changes.”

“No color palette shift.”

Constraints are not guarantees, but they reduce randomness.
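The ordering described in this step (identity anchor first, style reference second, constraints last) can be kept consistent with a small template function. This is a minimal sketch that only builds a string: `build_locked_prompt` and `join_with_or` are hypothetical helpers, and the scene description is invented for illustration.

```python
def join_with_or(items: list[str]) -> str:
    """Join items as 'a', 'a or b', or 'a, b, or c'."""
    if not items:
        raise ValueError("at least one item is required")
    if len(items) <= 2:
        return " or ".join(items)
    return ", ".join(items[:-1]) + f", or {items[-1]}"


def build_locked_prompt(
    scene: str,
    primary_tag: str,
    locked_traits: list[str],
    style_tag: str,
    style_scope: str,
    constraints: list[str],
) -> str:
    """Put the identity anchor first, the style reference second, then constraints."""
    parts = [
        scene,
        f"Primary identity anchor: {primary_tag}.",
        f"Do not alter {join_with_or(locked_traits)}.",
        f"Secondary style reference: {style_tag} for {style_scope} only.",
    ]
    parts.extend(constraints)
    return " ".join(parts)


locked = build_locked_prompt(
    scene="Night street scene, medium shot.",  # illustrative scene text
    primary_tag="@lead_character",
    locked_traits=["facial proportions", "eye shape", "hairstyle"],
    style_tag="@urban_night_style",
    style_scope="lighting and color grading",
    constraints=["No face distortion.", "No wardrobe changes.", "No color palette shift."],
)
print(locked)
```

Generating every scene prompt from the same template keeps the priority order identical across clips, so a change in output is more likely to come from the references than from reworded instructions.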

Step 4: Generate Short Clips First

Do not immediately generate a 20-second sequence. Start with 3–5 second clips.

Short generations allow you to test:

- Facial stability

- Lighting retention

- Motion adherence

- Background consistency

If drift occurs, refine before scaling.

Common fixes include:

- Increasing identity emphasis language

- Reducing conflicting style references

- Simplifying motion instructions

Iteration is expected. Even with good references, you may need 2–4 cycles.
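One way to keep the short-clip loop honest is to record a pass/fail review for each test clip and only scale up once the latest clip passes all four checks listed above. The sketch below assumes you review clips by eye and enter the results yourself; `failed_checks` and `ready_to_scale` are hypothetical names.

```python
# The four checks listed in this step.
CHECKS = (
    "facial stability",
    "lighting retention",
    "motion adherence",
    "background consistency",
)


def failed_checks(review: dict[str, bool]) -> list[str]:
    """Return the checks a clip failed; insist that every check was reviewed."""
    missing = [name for name in CHECKS if name not in review]
    if missing:
        raise ValueError(f"review is missing checks: {missing}")
    return [name for name in CHECKS if not review[name]]


def ready_to_scale(reviews: list[dict[str, bool]]) -> bool:
    """Scale up only when the most recent short clip passed every check."""
    return bool(reviews) and not failed_checks(reviews[-1])


# Hypothetical review log: first attempt drifted, second attempt was stable.
reviews = [
    {"facial stability": False, "lighting retention": True,
     "motion adherence": True, "background consistency": True},
    {"facial stability": True, "lighting retention": True,
     "motion adherence": True, "background consistency": True},
]
print(failed_checks(reviews[0]))
print(ready_to_scale(reviews))
```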

Step 5: Expand Scene-by-Scene

Once a 3–5 second clip is stable, expand scene-by-scene rather than regenerating from scratch.

You can reattach the previous output as a new reference and tag it:

@scene1_locked

Then instruct:

“Continue from @scene1_locked. Preserve identity and lighting.”

This chaining approach reduces abrupt transitions.
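The chaining pattern can be planned ahead as a list of tag and prompt pairs, where each prompt after the first continues from the previous locked scene. This is a sketch of the bookkeeping only: `chain_prompts` is a hypothetical helper, the scene descriptions are invented, and the `@sceneN_locked` naming simply extends the tag used above.

```python
def chain_prompts(scene_descriptions: list[str]) -> list[tuple[str, str]]:
    """Return (output_tag, prompt) pairs; later prompts continue from the prior tag."""
    plan = []
    previous_tag = None
    for index, description in enumerate(scene_descriptions, start=1):
        tag = f"@scene{index}_locked"
        if previous_tag is None:
            prompt = description  # first scene has nothing to continue from
        else:
            prompt = (
                f"Continue from {previous_tag}. "
                f"Preserve identity and lighting. {description}"
            )
        plan.append((tag, prompt))
        previous_tag = tag
    return plan


plan = chain_prompts([
    "Character walks toward the camera.",   # illustrative scene text
    "Character stops and looks up.",
    "Slow push-in on the character's face.",
])
for tag, prompt in plan:
    print(tag, "->", prompt)
```

Each output is tagged and reattached only after it has passed review, so the chain never builds on a clip that already drifted.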

Example Prompts

Image Reference Prompt

@lead_character = portrait of woman in red jacket

@studio_light = cinematic soft side lighting

Prompt:

“Generate a 5-second cinematic close-up using @lead_character as the primary identity anchor. Maintain facial proportions and red jacket wardrobe. Apply lighting style from @studio_light. Slow push-in camera motion. Neutral background.”

Video Reference Prompt

@lead_character = portrait image

@slow_push_motion = uploaded dolly-in shot

Prompt:

“Create a dramatic monologue scene. Preserve identity from @lead_character. Follow camera movement pattern from @slow_push_motion. Maintain realistic skin texture and subtle micro-expressions.”

Audio Reference Prompt

@lead_character = portrait image

@calm_voice = uploaded audio sample

Prompt:

“Generate a close-up speaking scene. Preserve identity from @lead_character. Match vocal tone and pacing from @calm_voice. Maintain steady lighting and no camera shake.”

Common Failure Modes and Fixes

If the face slowly morphs across frames, reduce your reference images to the three most consistent ones and strengthen identity lock language.

If wardrobe changes unexpectedly, explicitly state “Do not alter clothing category or primary color.”

If motion feels disconnected from the character, shorten your motion reference and isolate its role more clearly.

If lip-sync becomes unstable, simplify the scene. Complex motion plus heavy emotional audio reduces sync accuracy.
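The four failure modes above can be kept as a small lookup so the same fix is applied every time a symptom appears. The symptom keys are labels chosen for this sketch, and `suggest_fixes` is a hypothetical helper; the fix text restates the advice above.

```python
# Symptom labels are assumptions for this sketch; fixes restate the advice above.
FIXES = {
    "face morphing": (
        "Reduce reference images to the three most consistent ones "
        "and strengthen identity lock language."
    ),
    "wardrobe change": 'Add: "Do not alter clothing category or primary color."',
    "disconnected motion": (
        "Shorten the motion reference and isolate its role more clearly."
    ),
    "unstable lip-sync": (
        "Simplify the scene; complex motion plus heavy emotional audio "
        "reduces sync accuracy."
    ),
}


def suggest_fixes(symptoms: list[str]) -> list[str]:
    """Return the documented fix for each observed symptom, in order."""
    unknown = [s for s in symptoms if s not in FIXES]
    if unknown:
        raise KeyError(f"no documented fix for: {unknown}")
    return [FIXES[s] for s in symptoms]


for fix in suggest_fixes(["face morphing", "unstable lip-sync"]):
    print("-", fix)
```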

Comparing Seedance 2.0 to Other Tools

Below is a small positioning table to clarify where Seedance stands today:

| Tool | Strength | Weakness | Best For |
| --- | --- | --- | --- |
| Seedance 2.0 | Structured reference locking | Requires careful setup | Consistent multi-scene storytelling |
|  | Visual realism | Less structured reference control | Cinematic one-off clips |
|  | High-end motion physics | Limited public access | Advanced cinematic sequences |
|  | Strong scene understanding | Still evolving workflows | Concept storytelling |
|  | Accessible UI | More drift across scenes | Social content |
|  | Easy multimodal tools | Less granular tagging | Fast creator workflows |

Seedance’s strength is structured identity control. Its weakness is complexity. It rewards careful users.

How Seedance 2.0 Fits in the 2026 Market

In 2026, AI video tools are splitting into two categories.

The first category focuses on high-fidelity cinematic realism. Tools like Kling 3.0, Veo 3, and Sora emphasize visual quality and narrative scale. They often produce impressive first-pass outputs with less structured input.

The second category emphasizes workflow simplicity. Platforms like Magic Hour guide users through structured interfaces for text-to-video, image-to-video, and video-to-video creation. These tools reduce friction for marketing teams and creators who prioritize speed.

Seedance 2.0 occupies a middle ground. It offers more manual control than guided platforms, but less automatic cinematic polish than some large-scale models. Its strength lies in modular conditioning.

If you need deep reference binding and repeatable character systems, Seedance is a strong choice. If you need fast ad production with minimal setup, streamlined platforms may be more practical.

Choosing the right tool depends less on model hype and more on workflow fit.

Final Advice

Seedance 2.0 does not eliminate drift. It reduces drift when you approach it methodically.

Your success depends on:

- Clean, consistent references

- Clear @ mention role assignment

- Explicit identity locking

- Incremental iteration

Treat it like a structured production pipeline, not a prompt experiment.

If you build that discipline, Seedance 2.0 becomes a powerful system for generating consistent AI video across images, motion references, and audio conditioning.
