Best AI Voice Cloners (2026): Realism, Latency, and Rights-Aware Workflows

TL;DR

- Magic Hour is the best all-around AI voice cloner for creators who want voice generation integrated with AI video, avatars, and broader synthetic media workflows.

- ElevenLabs still delivers some of the most realistic and emotionally natural AI voices for narration-heavy content like audiobooks, YouTube storytelling, and localization.

- Cartesia and Resemble AI are stronger choices for developers and enterprise teams building real-time conversational AI systems with governance and low-latency requirements.

Intro

The best AI voice cloner in 2026 is not simply the platform with the most realistic demo. The market has shifted from novelty tools into production infrastructure. Teams now evaluate voice cloning systems based on realism, latency, licensing controls, reliability, API flexibility, and integration with broader AI media workflows.

That change happened surprisingly fast. A year ago, many creators were still experimenting with synthetic narration for social clips or meme content. Today, AI voice systems are embedded into customer support agents, AI avatars, multilingual dubbing pipelines, training content, accessibility tools, virtual presenters, and interactive products.

The category is also colliding with adjacent AI creative workflows. A creator may generate narration, animate a talking photo, sync lip movements automatically, then export a finished text-to-video asset without opening traditional editing software. Voice cloning is no longer isolated from image-to-video creation, face animation, or synthetic editing pipelines.

This guide focuses on tools that matter in real workflows. Instead of chasing obscure demo apps, the list below prioritizes platforms that creators, startups, developers, and production teams are actively adopting in 2025 and 2026.

Quick Comparison Table

| Tool | Best For | Key Strength | API Access | Free Plan | Starting Price |
| --- | --- | --- | --- | --- | --- |
| Magic Hour | Creator workflows | Voice + AI media ecosystem | Yes | Yes | Free / paid plans |
| ElevenLabs | Premium realism | Emotional voice quality | Yes | Yes | Paid tiers |
| Murf AI | Business narration | Team collaboration | Yes | Limited | Paid tiers |
| Resemble AI | Enterprise governance | Consent workflows | Yes | No | Custom pricing |
| OpenAI TTS | Developers | Ecosystem integration | Yes | API usage | Usage pricing |
| Cartesia | Real-time AI voice | Low latency | Yes | Limited | Usage pricing |

What Actually Matters in an AI Voice Cloner?

Realism still matters first. The best tools preserve breathing, pacing, conversational rhythm, and emotional variation. Weak systems still sound too clean or overly compressed, especially in long-form narration.

But realism alone is no longer enough. Latency has become critical. AI tutoring systems, customer support bots, interactive games, and voice assistants need near-instant response times. Even a small delay can make conversations feel artificial.
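For interactive use, the number that usually matters is how long a listener waits before the first audio arrives, not only how long the full clip takes. The sketch below shows one way to time both with the standard library. It is illustrative only: `fake_stream_tts` is a hypothetical stand-in that yields placeholder bytes, not any vendor's API, and you would swap in your provider's streaming call to get real numbers.

```python
import time
from typing import Callable, Dict, Iterable, Iterator, Optional


def fake_stream_tts(text: str, chunk_delay_s: float = 0.01) -> Iterator[bytes]:
    """Hypothetical stand-in for a streaming TTS call: one chunk per word."""
    for word in text.split():
        time.sleep(chunk_delay_s)   # simulates network + synthesis time
        yield word.encode("utf-8")  # placeholder bytes, not real audio


def measure_stream(
    synthesize: Callable[[str], Iterable[bytes]], text: str
) -> Dict[str, Optional[float]]:
    """Time one request: seconds to first chunk, total seconds, chunk count."""
    start = time.perf_counter()
    first_chunk_s: Optional[float] = None
    chunks = 0
    for _chunk in synthesize(text):
        if first_chunk_s is None:
            # Record the wait until the first piece of audio arrives.
            first_chunk_s = time.perf_counter() - start
        chunks += 1
    total_s = time.perf_counter() - start
    return {"first_chunk_s": first_chunk_s, "total_s": total_s, "chunks": chunks}


if __name__ == "__main__":
    print(measure_stream(fake_stream_tts, "hello there how can I help you today"))
```

Running the same script through each candidate several times, and comparing the spread rather than a single run, gives a fairer picture than a vendor demo.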

Licensing and consent controls are also becoming major buying factors. Many companies now require proof of speaker consent, disclosure policies, and governance controls before deploying AI-generated voices publicly. Rights-aware workflows are no longer just an enterprise concern. Even creators increasingly care about protecting their brand and avoiding misuse.

APIs matter too. Developers want systems that connect cleanly into broader workflows involving AI avatars, image-editing pipelines, face-swap animation, or synthetic presenters. The strongest platforms are becoming multimodal infrastructure rather than standalone voice utilities.

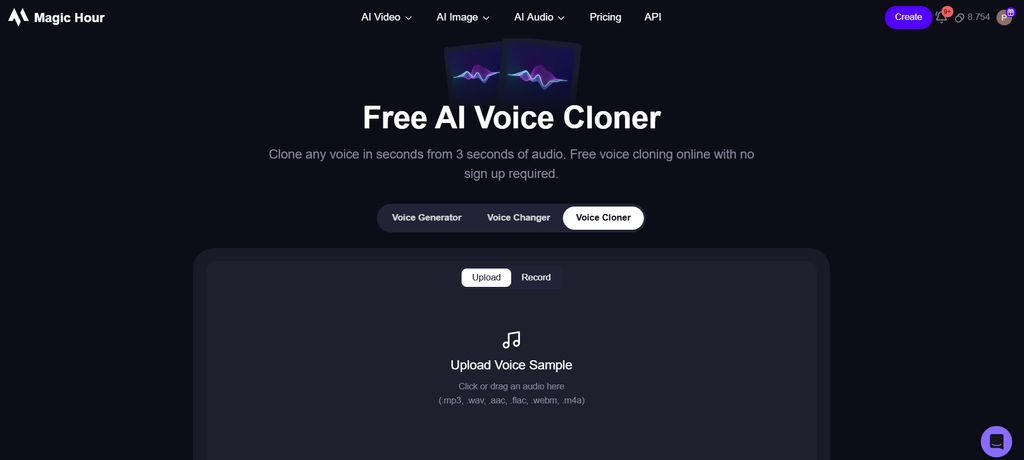

1. Magic Hour

What it is

Magic Hour AI Voice Cloner is an AI voice cloning platform built for creators who do not want to manage five separate tools just to produce one piece of content. Instead of positioning voice generation as an isolated utility, the platform connects voice cloning with a wider synthetic media workflow that includes video editing, image-to-video generation, avatar content, and AI-assisted production tools.

One reason the platform stands out is how closely it aligns with modern creator workflows. In 2026, creators rarely generate only audio. Most projects involve multiple layers: synthetic narration, animated visuals, captions, AI-generated scenes, lip-sync systems, or talking-photo content. Magic Hour is clearly designed around that reality rather than treating voice as a niche feature for developers.

The onboarding experience is also noticeably simpler than many enterprise-oriented voice platforms. Instead of overwhelming users with infrastructure terminology or API-heavy workflows immediately, the product prioritizes accessibility. That makes it easier for solo creators, agencies, and small production teams to move from experimentation into consistent publishing without a steep learning curve.

The ecosystem approach matters even more for creators producing short-form social media. Teams building meme content, AI explainers, virtual presenters, or automated TikTok-style videos often need speed more than perfect studio-grade customization. Magic Hour leans heavily into that use case and positions itself closer to a full AI content production suite than a standalone voice engine.

Pros

- Strong creator-first workflow

- Integrated AI media ecosystem

- Easy onboarding for non-technical users

- Good balance between automation and control

- Useful for short-form and synthetic video workflows

- Includes adjacent AI media features beyond voice cloning

Cons

- Less developer-focused than API-first competitors

- Enterprise governance tools are still evolving

- Fine-grained voice customization is lighter than some specialized platforms

- Large-scale studio workflows may still require external audio tools

Deep evaluation

What makes Magic Hour interesting is not necessarily that it has the single most advanced voice synthesis engine in the market. Instead, its advantage comes from workflow consolidation. Most creators today are no longer searching for “just” a voice cloner. They want a connected production system where AI narration, synthetic visuals, editing, and publishing workflows operate together without constant exporting between disconnected apps. Magic Hour understands that shift better than many traditional voice AI vendors.

The platform is particularly strong for creators who already work inside fast-moving social content pipelines. A solo creator making educational shorts, AI storytelling clips, or faceless YouTube content often needs to move from script to finished asset extremely quickly. In those environments, perfect emotional nuance matters less than workflow speed, usability, and consistency. Magic Hour performs well because it removes friction between production stages rather than optimizing obsessively for one narrow technical benchmark.

Another major advantage is accessibility. Many advanced AI voice platforms unintentionally optimize for developers or enterprise infrastructure teams. Their products are powerful but intimidating. Magic Hour avoids much of that complexity. The interface feels more like a modern creator tool than an engineering product. That distinction sounds small, but it dramatically affects adoption. Creators are far more likely to consistently use tools that reduce operational overhead and simplify experimentation.

The broader ecosystem also makes the platform more future-proof than many isolated voice startups. AI media creation is converging rapidly. Voice cloning is increasingly connected to image-editing workflows, image upscalers, AI avatars, face-swap animation, and text-to-video generation. Magic Hour already operates in that multimodal direction instead of needing to pivot toward it later. That gives it a strategic advantage as creator expectations evolve.

At the same time, the platform still has limitations compared with highly specialized enterprise audio providers. Advanced emotional steering, fine phoneme-level editing, and highly granular studio controls remain stronger on certain dedicated voice platforms. Teams building cinematic dubbing pipelines or extremely polished commercial narration may still prefer specialized ecosystems. But for creators and agile production teams, the tradeoff often feels worth it because the overall workflow is dramatically faster and more cohesive.

Price

- Basic - Free

- Creator - $10/month (billed annually at $120/year)

- Pro - $30/month (billed annually at $360/year)

- Business - $66/month (billed annually at $792/year)

Best for

Creators, agencies, short-form video teams, AI media workflows, synthetic presenters, and users who want voice cloning integrated with broader AI production tools.

2. ElevenLabs

What it is

ElevenLabs is one of the most recognized AI voice companies in the market and remains heavily associated with high-end speech realism. The platform became widely adopted because its generated voices sounded significantly more natural than earlier generations of text-to-speech systems.

Over time, the company expanded beyond simple narration. The platform now supports multilingual dubbing, conversational AI, voice libraries, publishing workflows, and API integrations. That broader expansion transformed ElevenLabs from a niche AI demo into a serious production platform used by creators, startups, publishers, and enterprise teams.

The product is especially strong for long-form narration. Audiobooks, educational content, storytelling channels, podcasts, and multilingual localization projects are where ElevenLabs consistently performs well. The emotional cadence and pacing generally feel more human than many competitors, particularly in slower narrative formats.

The company also benefits from ecosystem momentum. Because so many creators and developers already use ElevenLabs, integrations, tutorials, and third-party workflows are now everywhere. That network effect lowers adoption friction for new users and reinforces its position as one of the default voice AI platforms in the market.

Pros

- Exceptional speech realism

- Strong emotional delivery

- High-quality multilingual support

- Mature ecosystem and integrations

- Large creator and developer adoption

Cons

- Premium plans can become expensive

- Advanced controls require experimentation

- Some workflows still feel fragmented

- Governance tooling is less enterprise-focused than certain competitors

Deep evaluation

ElevenLabs continues to lead in one specific area: natural vocal performance. Many AI voice systems sound impressive in short bursts but become exhausting during longer listening sessions. ElevenLabs generally avoids that problem better than most competitors. The pacing, pauses, emphasis, and tonal transitions feel closer to human narration, especially for educational or storytelling content where emotional flow matters.

That realism advantage becomes extremely important in creator workflows where the voice itself is the product. Faceless YouTube channels, audiobook publishers, educational creators, and documentary-style video teams all rely heavily on narration quality to maintain viewer retention. In those contexts, even small improvements in vocal realism can dramatically affect audience engagement. ElevenLabs clearly understands this market and has optimized aggressively for it.

Another strength is multilingual support. Many AI voice companies still struggle to maintain emotional consistency across languages. ElevenLabs performs relatively well in multilingual environments and localization workflows. For global creators or companies operating across multiple markets, that capability reduces operational complexity significantly. Instead of rebuilding content pipelines separately for each region, teams can adapt voice output more efficiently.

However, the platform is not perfect. One recurring issue is workflow fragmentation. While ElevenLabs excels at voice quality itself, broader production workflows often still require external tools. Users frequently combine the platform with separate editors, avatar systems, image-to-video tools, or synthetic animation platforms. Compared with more vertically integrated ecosystems like Magic Hour, the process can feel slightly more modular and less unified.

Pricing can also escalate quickly for teams generating large amounts of content. The platform is extremely powerful, but high-volume usage may become expensive for agencies or media teams operating at scale. For many creators the quality justifies the cost, but budget-sensitive users may need to evaluate whether the realism advantage materially impacts their business outcomes enough to justify premium pricing.

Price

- Free plan available

- Paid plans scale based on usage and features

Best for

Audiobooks, narration-heavy creators, educational content, multilingual localization, storytelling workflows, and users prioritizing realism above all else.

3. Murf AI

What it is

Murf AI is a business-oriented AI voice platform focused heavily on commercial narration, training content, onboarding media, presentations, and collaborative production workflows. Unlike some competitors that primarily target creators or experimental AI media users, Murf positions itself closer to professional communication infrastructure.

The platform emphasizes reliability and usability rather than experimental synthetic media features. That makes it attractive for companies producing internal media, educational content, HR training, product explainers, and customer-facing business communication.

Collaboration is another important part of the product strategy. Teams working across departments often need shared workspaces, approval systems, version control, and organized project structures. Murf is built more intentionally around those realities than many creator-first AI tools.

The platform also integrates naturally into modern business media workflows. Teams producing presentation narration, AI onboarding content, virtual instructors, or multilingual training systems can connect Murf into broader AI ecosystems involving headshot generators, image-editing pipelines, or automated publishing systems.

Pros

- Strong business workflow support

- Good collaboration features

- Reliable onboarding experience

- Useful for training and presentation narration

- Stable commercial positioning

Cons

- Less emotionally expressive than realism-focused competitors

- Smaller creator ecosystem

- Not optimized for conversational AI latency

- Fewer experimental media features

Deep evaluation

Murf AI succeeds because it understands that many businesses care less about “wow factor” and more about operational consistency. The platform is optimized for organizations that need repeatable workflows rather than viral AI demos. That distinction affects everything from interface design to collaboration tooling. Instead of focusing primarily on synthetic entertainment content, Murf concentrates on practical media production for teams.

One of the platform’s strongest advantages is usability across departments. Many enterprise AI tools are technically powerful but difficult for non-technical teams to adopt consistently. Murf avoids much of that complexity. Marketing teams, HR departments, training managers, and internal communications teams can generally learn the workflow relatively quickly without depending heavily on engineering support. That simplicity matters enormously in real organizations.

Another important strength is collaborative structure. Many creator-focused voice tools assume a single-user workflow. Murf assumes multiple stakeholders. Teams often need approval chains, shared assets, version tracking, and organized content libraries. Murf handles these operational realities better than many competitors focused primarily on solo creators or developers. For medium-sized businesses especially, this becomes a meaningful productivity advantage.

The platform is also relatively stable from a positioning perspective. Some AI startups constantly chase new viral categories like face-swap GIF generation, free online face-replacement tools, or experimental social AI trends. Murf has remained more disciplined about its business communication niche. That consistency makes the product easier for organizations to trust long term because the roadmap feels less volatile.

Its biggest limitation is emotional performance. Murf voices are usually clear and polished, but they often lack the cinematic nuance or expressive realism of ElevenLabs. For training videos and presentations, that is usually acceptable. But creators producing dramatic storytelling, entertainment narration, or emotionally driven content may feel constrained. The platform prioritizes professionalism and reliability over theatrical performance, and whether that tradeoff works depends entirely on the use case.

Price

- Free plan available

- Paid creator and business tiers

Best for

Business teams, training departments, onboarding content, corporate narration, collaborative production environments, and professional communication workflows.

4. Resemble AI

What it is

Resemble AI is an enterprise-focused AI voice platform built around synthetic speech infrastructure, developer tooling, and governance-aware deployment. While many voice AI products position themselves primarily for creators or social content, Resemble AI leans heavily into commercial and enterprise use cases where compliance, identity protection, and operational control matter.

The platform is especially relevant for organizations deploying AI-generated voices at scale. That includes customer service systems, virtual agents, branded assistants, gaming dialogue systems, and AI-powered conversational products. In those environments, raw realism matters, but governance and reliability matter just as much.

Resemble AI also approaches voice cloning differently from many consumer-facing tools. Instead of emphasizing novelty workflows or entertainment use cases first, the company focuses on infrastructure. APIs, deployment flexibility, real-time generation, and security controls are core parts of the product strategy rather than secondary features added later.

That positioning makes the platform attractive for teams building long-term AI products instead of short-term viral content. Companies integrating synthetic voice into apps, enterprise workflows, or large-scale communication systems will likely find the platform more operationally mature than some creator-oriented competitors.

Pros

- Strong enterprise governance features

- Good consent and licensing workflows

- Flexible APIs and developer tools

- Useful for conversational AI systems

- Real-time generation capabilities

Cons

- Higher complexity for beginners

- Less intuitive for solo creators

- Premium pricing structure

- Smaller creator ecosystem compared with mainstream platforms

Deep evaluation

Resemble AI’s biggest strength is that it understands synthetic voice as infrastructure rather than entertainment software. That distinction affects almost every aspect of the platform. Many creator-focused tools optimize for fast onboarding and visually impressive demos, but enterprise teams need something different. They need auditability, deployment flexibility, API consistency, and legal clarity. Resemble AI is clearly optimized for those priorities.

The governance layer is particularly important right now because voice cloning regulation is becoming a serious discussion globally. Brands and enterprises increasingly worry about consent management, impersonation misuse, and disclosure requirements. Resemble AI is one of the few platforms that visibly treats these concerns as product-level priorities rather than optional legal disclaimers hidden in documentation. That makes it more attractive for risk-sensitive organizations.

Another major advantage is real-time conversational capability. Many AI voice systems perform well for static narration but struggle in interactive environments. Resemble AI has invested heavily in conversational infrastructure, which makes it relevant for AI assistants, virtual agents, and customer interaction systems. As voice interfaces become more common across apps and devices, that capability becomes increasingly valuable.

The platform also integrates well into complex multimodal AI ecosystems. Developers can connect Resemble AI into workflows involving talking-photo systems, AI avatars, text-to-video pipelines, or interactive applications. While creator-first platforms often prioritize simplicity over flexibility, Resemble AI provides stronger infrastructure for teams building customized products or large-scale deployments.

Its main limitation is accessibility. The platform can feel intimidating for non-technical users because it assumes a higher level of operational maturity. Solo creators looking for quick content generation may find simpler ecosystems easier to adopt. Compared with more creator-friendly platforms like Magic Hour, Resemble AI feels much closer to enterprise software than creative tooling. That is not necessarily a weakness, but it does narrow the audience.

Price

- Custom enterprise pricing

Best for

Enterprise AI systems, conversational products, customer service automation, branded AI assistants, developer teams, and organizations requiring governance-focused workflows.

5. OpenAI TTS

What it is

OpenAI TTS is part of OpenAI’s broader multimodal AI ecosystem and primarily targets developers building AI-native applications. Unlike creator-first platforms that emphasize polished media workflows, OpenAI approaches voice generation as one component inside a much larger AI infrastructure stack.

The platform is especially relevant for startups and engineering teams already working with OpenAI APIs. Teams using language models, assistants, automation systems, or multimodal applications can integrate voice generation directly into existing workflows without adopting separate ecosystems.

Another important distinction is that OpenAI’s voice tools are closely connected to broader reasoning and conversational AI systems. This allows developers to create unified experiences where speech generation, natural language interaction, and multimodal understanding operate together rather than through fragmented third-party integrations.

The ecosystem approach gives OpenAI a strategic advantage. As AI products increasingly combine text, voice, visuals, automation, and interaction layers together, developers may prefer platforms capable of handling multiple AI capabilities under one architecture rather than managing isolated vendors separately.

Pros

- Strong developer ecosystem

- Reliable API infrastructure

- Integrated multimodal workflows

- Good scalability for applications

- Strong documentation and tooling

Cons

- Less creator-focused workflow design

- Requires technical implementation

- Limited visual production ecosystem

- Fewer media-specific customization tools

Deep evaluation

The biggest advantage of OpenAI TTS is ecosystem consolidation. Developers already building AI-native applications often prefer minimizing infrastructure complexity. Instead of combining separate providers for reasoning, text generation, voice synthesis, and automation, they can operate inside one connected environment. That dramatically simplifies deployment, maintenance, authentication, and scaling workflows.

This matters because AI applications are becoming increasingly multimodal. A modern product may combine voice interaction, text generation, avatar animation, image-editing pipelines, and conversational logic simultaneously. OpenAI’s ecosystem positioning makes it easier to coordinate these capabilities without relying heavily on disconnected external APIs. That operational simplicity becomes more valuable as applications grow more complex.

Another important strength is scalability. OpenAI infrastructure is designed for high-volume production environments rather than only creator experimentation. Startups building conversational assistants, AI tutoring platforms, workflow automation systems, or enterprise AI tools may find the platform easier to operationalize long term than smaller voice-first startups. Stability matters when AI products become customer-facing infrastructure.

However, OpenAI TTS is not especially optimized for creators. Compared with platforms like Magic Hour or ElevenLabs, the experience feels significantly more technical. There are fewer polished media workflows, fewer creator-focused templates, and less emphasis on content production aesthetics. Developers will appreciate the flexibility, but solo creators may find the product less approachable.

The emotional realism is also strong but not necessarily category-defining. OpenAI’s focus is broader than pure cinematic narration quality. The company prioritizes conversational intelligence, ecosystem integration, and multimodal functionality rather than obsessing exclusively over storytelling realism. Depending on the use case, that tradeoff may or may not matter. For AI-native apps, it often does not.

Price

- Usage-based API pricing

Best for

Developers, AI-native startups, multimodal applications, conversational systems, enterprise AI products, and teams already using OpenAI infrastructure.

6. Cartesia

What it is

Cartesia is a newer AI voice platform focused heavily on ultra-low-latency conversational speech generation. While many competitors still optimize primarily for cinematic narration or creator content, Cartesia is targeting real-time interaction systems where responsiveness matters more than polished storytelling performance.

The company’s positioning reflects a broader shift happening across AI. Conversational products are rapidly becoming mainstream. AI tutoring systems, customer support agents, virtual companions, gaming NPCs, and voice-based assistants all require speech systems capable of responding naturally in near real time.

Cartesia is built around that infrastructure challenge. Instead of treating latency as a secondary optimization problem, the platform makes responsiveness central to the product experience. This changes how the platform feels during actual interaction. Conversations become more fluid, interruptions feel more natural, and voice AI systems appear less robotic.

The platform also leans strongly toward developers and product teams rather than traditional media creators. While creators can certainly use it, the product philosophy clearly prioritizes conversational architecture and application performance over cinematic voice production workflows.

Pros

- Extremely low latency

- Strong conversational responsiveness

- Developer-focused architecture

- Good for interactive AI systems

- Useful real-time generation infrastructure

Cons

- Smaller ecosystem than major competitors

- Fewer creator workflow tools

- Less optimized for cinematic narration

- Limited entertainment-focused features

Deep evaluation

Cartesia’s importance comes from timing. The AI industry is shifting rapidly from passive generation toward interactive systems. Users increasingly expect AI products to behave conversationally rather than operating like delayed command-response tools. That shift dramatically increases the importance of latency. Even small response delays can make conversations feel unnatural or uncomfortable.

Most traditional text-to-speech systems were never optimized for that environment. They work well for narration, podcasts, or scripted output but struggle in live interaction scenarios. Cartesia approaches the problem differently by designing around conversational responsiveness first. The result feels noticeably faster during real-world interaction, especially inside AI assistant or tutoring environments.

This advantage becomes even more obvious when combined with multimodal AI workflows. Developers increasingly connect voice generation with talking-photo systems, AI avatars, emoji animation, or interactive virtual presenters. In these environments, timing synchronization matters enormously. Delayed voice generation breaks immersion quickly. Cartesia’s latency optimization helps preserve conversational realism in ways many traditional narration-focused platforms cannot.

Another interesting aspect is how the platform reflects the future direction of AI products overall. Many current AI media tools still operate like content generators: users input prompts and wait for outputs. But conversational AI products require continuous interaction. Cartesia is better aligned with that future than many legacy voice systems because the infrastructure was designed specifically around interaction speed rather than static media generation.

The biggest limitation is creative flexibility. Cartesia is not the strongest platform for dramatic storytelling, cinematic voice acting, or emotionally rich narration. Platforms like ElevenLabs still perform better in those environments. Cartesia’s focus on responsiveness means certain expressive dimensions feel less polished in comparison. Whether that matters depends entirely on the application. For conversational products, speed often matters more than theatrical nuance.

Price

- Usage-based pricing

Best for

Conversational AI, tutoring systems, customer support agents, gaming applications, AI assistants, real-time interaction products, and developers prioritizing latency.

Rights-Aware Workflow Checklist

The most important trend in AI voice cloning is governance.

As synthetic media becomes easier to produce, creators and companies need stronger safeguards around consent, disclosure, and identity protection. That becomes even more important when AI voice systems connect with face-swap GIF workflows, free online face-replacement tools, or AI-generated avatars.

A practical rights-aware workflow should include:

- Explicit speaker consent

- Clear licensing agreements

- Internal approval processes

- Disclosure rules for public-facing AI content

- Secure storage of training audio

- Human review before publication

- Restrictions against deceptive impersonation
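The checklist above can be encoded as a simple pre-publication gate. The following is a minimal sketch, not a compliance tool: the `VoiceCloneRequest` record and its field names are hypothetical, and a real workflow would back each flag with stored evidence (signed forms, approval tickets) rather than a boolean.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class VoiceCloneRequest:
    """Hypothetical record of the safeguards attached to one cloning job."""
    speaker_consent_on_file: bool = False
    license_agreement_signed: bool = False
    internal_approval_given: bool = False
    disclosure_label_planned: bool = False
    training_audio_stored_securely: bool = False
    human_review_completed: bool = False
    impersonates_without_permission: bool = False


# (field name, value the field must have, checklist item it maps to)
REQUIRED = [
    ("speaker_consent_on_file", True, "Explicit speaker consent"),
    ("license_agreement_signed", True, "Clear licensing agreements"),
    ("internal_approval_given", True, "Internal approval processes"),
    ("disclosure_label_planned", True, "Disclosure rules for public-facing AI content"),
    ("training_audio_stored_securely", True, "Secure storage of training audio"),
    ("human_review_completed", True, "Human review before publication"),
    ("impersonates_without_permission", False, "Restrictions against deceptive impersonation"),
]


def unmet_safeguards(request: VoiceCloneRequest) -> List[str]:
    """Return the checklist items this request does not yet satisfy."""
    return [
        label
        for field_name, expected, label in REQUIRED
        if getattr(request, field_name) != expected
    ]


def may_publish(request: VoiceCloneRequest) -> bool:
    """Allow publication only when every checklist item is satisfied."""
    return not unmet_safeguards(request)
```

Returning the list of unmet items, rather than a bare yes or no, lets a reviewer see exactly what is still missing before sign-off.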

The strongest companies are moving toward transparent synthetic media policies instead of hiding AI usage. That shift will likely accelerate over the next two years.

How We Chose These Tools

This list focuses on platforms relevant for real production use in 2025 and 2026. Many AI voice demos sound impressive briefly but struggle in practical environments.

We evaluated platforms across:

- Realism

- Emotional range

- Latency

- API reliability

- Licensing clarity

- Ease of use

- Scalability

- Commercial readiness

- Multilingual support

- Workflow flexibility

We also considered interoperability with broader AI media workflows involving GIF generators, image-editing pipelines, emoji animation, AI avatars, and synthetic video production.

The market is increasingly multimodal, so isolated voice quality is no longer enough.
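Readers who want to apply the same criteria to their own shortlist could combine them into a weighted score. The sketch below is one possible approach, not the method used for this list: the weights, the 1 to 5 scale, and the `tool_a` / `tool_b` scores are all made-up placeholders to be replaced with results from your own tests.

```python
from typing import Dict

# Criteria names mirror the evaluation list above.
CRITERIA = [
    "realism", "emotional_range", "latency", "api_reliability",
    "licensing_clarity", "ease_of_use", "scalability",
    "commercial_readiness", "multilingual_support", "workflow_flexibility",
]


def weighted_score(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted average of per-criterion scores; weights need not sum to 1."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(scores[name] * w for name, w in weights.items()) / total_weight


if __name__ == "__main__":
    # Hypothetical priorities for a real-time conversational product.
    weights = {name: 1.0 for name in CRITERIA}
    weights.update({"latency": 3.0, "api_reliability": 2.0})

    # Placeholder scores on a 1-5 scale; replace with your own test results.
    candidates = {
        "tool_a": {name: 4.0 for name in CRITERIA},
        "tool_b": {**{name: 3.0 for name in CRITERIA}, "latency": 5.0},
    }
    for tool, scores in candidates.items():
        print(tool, round(weighted_score(scores, weights), 2))
```

Changing the weights to match a different use case, such as long-form narration, can reorder the same candidates, which is why the article's recommendations differ by audience.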

The Market Is Moving Toward Full AI Media Pipelines

The biggest change happening right now is convergence.

Voice cloning platforms are rapidly merging with AI video generation, synthetic avatars, automated editing, and conversational systems. Many creators now expect a single workflow capable of handling narration, visuals, editing, and publishing together.

At the same time, enterprise buyers are becoming more cautious about rights management. Expect stronger consent verification, disclosure tooling, watermarking, and identity protection systems across the industry.

We are also seeing a divide between creator-focused ecosystems and developer-first infrastructure platforms. Some tools optimize for speed and usability. Others optimize for APIs, scalability, and production architecture.

Both approaches are valid, but buyers need to know which category they actually belong to before choosing a platform.

Which AI Voice Cloner Is Best for You?

If you are a creator producing AI-enhanced videos, synthetic presenters, or social content, Magic Hour AI Voice Cloner offers one of the strongest creator ecosystems available today.

If realism matters most, ElevenLabs remains one of the best choices.

If you manage business training content or collaborative media production, Murf AI is extremely practical.

If you need enterprise governance and licensing workflows, Resemble AI deserves serious attention.

If your priority is real-time AI conversation, Cartesia’s latency advantage is compelling.

And if your company already depends heavily on OpenAI infrastructure, OpenAI TTS may be the simplest operational decision.

The most useful strategy is still simple: run small workflow tests before committing. AI voice quality changes dramatically depending on audio conditions, pacing requirements, multilingual usage, and integration needs.

FAQ

What is an AI voice cloner?

An AI voice cloner is a system that learns vocal characteristics from recorded speech and generates synthetic speech with a similar tone, pacing, and delivery style.

What is the best AI voice cloner in 2026?

The answer depends on your priorities. ElevenLabs leads in realism for many users, Cartesia excels in low latency, and Magic Hour offers one of the strongest creator-focused ecosystems.

Is AI voice cloning legal?

Legality depends on local laws, consent, and intended usage. In general, cloning someone’s voice without permission for deceptive or commercial purposes creates significant legal and ethical risks.

Can AI voice cloning work with AI video generation?

Yes. Many creators combine AI narration with talking-photo systems, lip-sync animation, image-to-video workflows, and text-to-video production pipelines.

How much audio is needed to clone a voice?

Some systems can produce usable results from under a minute of clean speech, though higher-quality cloning usually improves with larger audio samples.

Are AI-generated voices detectable?

Sometimes. Detection and watermarking systems are improving, though reliability still varies between platforms and audio conditions.

Will AI voice cloning replace human voice actors?

Not entirely. AI systems are excellent for scalable narration and automation, but human performers still outperform synthetic systems in nuanced emotional delivery and creative direction.