How to Add Voiceover to a Video with AI (2026): Free Workflow + Best Tools

TL;DR (3 steps)

- Write a clean script → generate an AI voice with Magic Hour Voice Generator or a similar tool

- Adjust pacing, pronunciation, and tone → export audio

- Sync the audio to your video (standard voiceover or avatar lipsync) → export the final video

Introduction

If you want to add a voiceover to a video with AI in 2026, the workflow is much simpler than it used to be. You no longer need a microphone, a recording setup, or voice talent. Instead, you can generate realistic narration from text, edit its timing, and sync it to your visuals in a few steps.

The challenge is not generating a voice anymore. It is getting a voiceover that actually fits your video. That means correct pacing, natural pronunciation, and proper alignment with visuals. Many creators get stuck here, especially when combining formats like image to video, talking photo clips, or short-form content.

In this guide, I will walk through a complete workflow I’ve tested across multiple tools and formats. You will learn how to go from script to final export, including how to handle edge cases like numbers, acronyms, and timing issues. I will also show when to use standard narration versus lipsync avatars depending on your content.

What You Need

Before you start, make sure you have the following inputs ready. This is where most quality issues actually begin.

A script that is written for audio, not just copied from text content. Voiceovers need shorter sentences, clearer pauses, and conversational phrasing. If you are using text to video tools, you may need to rewrite parts of your script to sound natural when spoken.

A visual asset. This can be a full video, a sequence built with a free image generator, or even a static image if you plan to turn it into a talking photo. Many creators now start with image to video pipelines and add the voice later.

An AI voice tool. You can use Magic Hour Voice Generator or an alternative like ElevenLabs, depending on your needs. The main differences between tools are how much control you get over tone and pacing, and how flexible the export options are.

Optional but useful tools include:

- A lipsync system for avatar-based videos

- An image editor to adjust frames before syncing

- A gif generator if you plan to repurpose clips into short loops

Step-by-Step: Add Voiceover to Video with AI

Step 1: Write a Voice-Ready Script (Foundation Layer)

Everything downstream depends on this step. Most “bad AI voice” problems are actually script problems.

When writing a script for AI voiceover, think in terms of spoken rhythm, not written clarity. Your goal is to make the text easy to read aloud in one pass.

Start by breaking long sentences into shorter units. Each sentence should ideally express one idea. Then add intentional pauses using punctuation, line breaks, or short transition phrases like “so,” “now,” or “here’s the key.”

For example, if you’re creating content from a text to video workflow, your original script might feel dense because it was written for visual reading. Convert it into something that flows naturally when spoken.

Also consider emphasis. AI voices do not always infer importance correctly, so structure helps:

- Put key words at the end of sentences

- Use repetition for clarity

- Avoid nested clauses

If you are planning to combine this with formats like talking photo or lipsync, your script must be even tighter. Long sentences create unnatural mouth movement.

A good rule: if you cannot read the sentence in one breath, rewrite it.
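The one-breath rule is easy to check mechanically before you generate any audio. The sketch below is a hypothetical Python helper, not a feature of any tool mentioned here. It assumes about 20 words is a comfortable single-breath sentence at normal narration speed, which you should tune to your own voice, and its simple sentence splitter will also break on abbreviations like “e.g.”.

```python
import re

# Assumption: roughly 20 words fits in one breath at a normal narration
# speed. Adjust this to match your chosen voice and pacing.
MAX_WORDS_PER_SENTENCE = 20


def split_sentences(script: str) -> list[str]:
    """Split a script into sentences at ., ! or ? followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", script.strip())
    return [p for p in parts if p]


def long_sentences(script: str, max_words: int = MAX_WORDS_PER_SENTENCE) -> list[tuple[int, str]]:
    """Return (word_count, sentence) for each sentence longer than max_words."""
    flagged = []
    for sentence in split_sentences(script):
        count = len(sentence.split())
        if count > max_words:
            flagged.append((count, sentence))
    return flagged


if __name__ == "__main__":
    script = (
        "Here's the key. "
        "Before you generate any audio, read every sentence out loud and check "
        "whether you can finish it comfortably in one breath without rushing "
        "the final words."
    )
    for count, sentence in long_sentences(script):
        print(f"{count} words, consider splitting: {sentence}")
```

Running it flags only the second sentence (26 words). The threshold is a plain constant so you can tighten it for lipsync scripts, where shorter lines matter even more.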

Step 2: Generate the Voiceover (Control Layer)

Now move into voice generation using Magic Hour Voice Generator or similar tools.

Instead of generating the entire script at once, break it into sections. This gives you more control and avoids having to regenerate everything if one part sounds off.
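Generating in sections works best with a little bookkeeping: if each section has a fingerprint, you can see exactly which sections changed after an edit and regenerate only those. The Python sketch below is hypothetical and assumes sections are separated by blank lines; sending each section to your voice tool depends on that tool, so that part is left out.

```python
import hashlib


def split_sections(script: str) -> list[str]:
    """Split a script into sections separated by one or more blank lines."""
    sections: list[str] = []
    current: list[str] = []
    for line in script.splitlines():
        if line.strip():
            current.append(line.strip())
        elif current:
            sections.append(" ".join(current))
            current = []
    if current:
        sections.append(" ".join(current))
    return sections


def section_key(text: str) -> str:
    """Short, stable fingerprint of a section's text, used to detect edits."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()[:12]


def sections_to_regenerate(old_script: str, new_script: str) -> list[int]:
    """Indices of sections in new_script whose text did not exist in old_script."""
    old_keys = {section_key(s) for s in split_sections(old_script)}
    return [
        i for i, section in enumerate(split_sections(new_script))
        if section_key(section) not in old_keys
    ]
```

For example, editing only the middle section of a three-section script makes `sections_to_regenerate` return `[1]`, so only that clip needs a new take.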

There are three key variables to control here:

Voice identity. Choose based on use case:

- Educational → neutral, clear tone

- Marketing → energetic, slightly faster

- Storytelling → expressive but controlled

Pacing. Most tools default to a speed that is slightly too fast for narration. Slowing it down a little usually makes a noticeable difference to clarity.

Structure-aware generation. If your script includes sections, headings, or transitions, generate them separately. This allows you to fine-tune delivery for each part.
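Before you touch the speed setting, it helps to know what a change costs in runtime. The Python sketch below estimates duration from word count; the 150 words-per-minute baseline is an assumption (a common narration rate), not a property of any particular tool, so calibrate it against a clip you have actually generated.

```python
# Assumption: about 150 words per minute at 1.0x is a typical narration
# rate. Replace it with a rate measured from your own generated clips.
BASE_WPM = 150


def estimate_seconds(text: str, speed: float = 1.0, base_wpm: float = BASE_WPM) -> float:
    """Estimate the spoken duration of text at a given speed multiplier."""
    if speed <= 0:
        raise ValueError("speed must be positive")
    words = len(text.split())
    return words / (base_wpm * speed) * 60


def compare_speeds(sections: list[str], speeds: list[float]) -> dict[float, float]:
    """Total estimated duration (seconds) of all sections at each speed."""
    return {
        s: round(sum(estimate_seconds(text, s) for text in sections), 1)
        for s in speeds
    }
```

For a 300-word script this estimates 120 seconds at 1.0x and about 133 seconds at 0.9x, so slowing down by 10% costs roughly 13 extra seconds.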

If you plan to use face swap or free online tools that replace a face in a video, consistency matters: the voice must match the visual identity across every clip.

Step 3: Pronunciation, Numbers, and Edge Cases (Refinement Layer)

This is one of the most overlooked steps, but it has a huge impact on perceived quality.

AI voices often misread:

- Numbers

- Acronyms

- Brand names

- Technical terms

Fixing this requires editing the script, not the audio.

For numbers, write them in the way you want them spoken. For example:

- “1500” → “one thousand five hundred”

- “2026” → “twenty twenty-six”
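If you revise scripts often, a small normalization pass keeps numbers consistent. The Python sketch below is an illustration under stated assumptions, not a feature of any voice tool: it spells out integers below one million, and it assumes any four-digit number between 1900 and 2099 is a year, which is exactly the kind of guess you should review by hand.

```python
import re

ONES = ["zero", "one", "two", "three", "four", "five", "six", "seven", "eight",
        "nine", "ten", "eleven", "twelve", "thirteen", "fourteen", "fifteen",
        "sixteen", "seventeen", "eighteen", "nineteen"]
TENS = ["", "", "twenty", "thirty", "forty", "fifty", "sixty", "seventy",
        "eighty", "ninety"]


def below_100(n: int) -> str:
    """Spell out 0-99, e.g. 26 -> 'twenty-six'."""
    if n < 20:
        return ONES[n]
    tens, ones = divmod(n, 10)
    return TENS[tens] + ("-" + ONES[ones] if ones else "")


def below_1000(n: int) -> str:
    """Spell out 0-999 without 'and', e.g. 500 -> 'five hundred'."""
    hundreds, rest = divmod(n, 100)
    parts = [ONES[hundreds] + " hundred"] if hundreds else []
    if rest or not parts:
        parts.append(below_100(rest))
    return " ".join(parts)


def number_to_words(n: int) -> str:
    """Spell out 0-999,999 as a cardinal, e.g. 1500 -> 'one thousand five hundred'."""
    if not 0 <= n < 1_000_000:
        raise ValueError("only 0-999,999 is supported in this sketch")
    thousands, rest = divmod(n, 1000)
    parts = [below_1000(thousands) + " thousand"] if thousands else []
    if rest or not parts:
        parts.append(below_1000(rest))
    return " ".join(parts)


def year_to_words(year: int) -> str:
    """Read a four-digit year in pairs, e.g. 2026 -> 'twenty twenty-six'."""
    if not 1000 <= year <= 9999:
        raise ValueError("expected a four-digit year")
    high, low = divmod(year, 100)
    if high % 10 == 0 and low < 10:  # 2005 -> 'two thousand five'
        return number_to_words(year)
    if low == 0:                      # 1900 -> 'nineteen hundred'
        return below_100(high) + " hundred"
    if low < 10:                      # 1905 -> 'nineteen oh five'
        return below_100(high) + " oh " + ONES[low]
    return below_100(high) + " " + below_100(low)


def spell_numbers(text: str) -> str:
    """Replace plain integers in text with words.

    Heuristic (an assumption): four-digit numbers from 1900 to 2099 are read
    as years; everything else below one million is read as a cardinal.
    Numbers with separators or decimals, like 1,500 or 2.5, are not handled.
    """
    def replace(match: re.Match) -> str:
        digits = match.group()
        n = int(digits)
        if len(digits) == 4 and 1900 <= n <= 2099:
            return year_to_words(n)
        if n < 1_000_000:
            return number_to_words(n)
        return digits
    return re.sub(r"\b\d+\b", replace, text)
```

For example, `spell_numbers("Sales hit 1500 units in 2026.")` returns “Sales hit one thousand five hundred units in twenty twenty-six.”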

For acronyms, test both formats:

- “AI” vs “A I”

- “SEO” vs “S E O”

For product names or niche terms, use phonetic spelling if needed.
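Keeping these fixes in one lookup table means every script revision gets the same pronunciation. A minimal Python sketch, assuming your voice tool reads plain text and that the respellings below (hypothetical examples) are the ones that worked in your own tests:

```python
import re

# Hypothetical pronunciation table: written form -> form the voice should read.
# These entries are examples only; test each one with your chosen voice.
PRONUNCIATIONS = {
    "SEO": "S E O",
    "AI": "A I",
    "nginx": "engine x",
}


def apply_pronunciations(text: str, table: dict[str, str] = PRONUNCIATIONS) -> str:
    """Replace whole-word, case-sensitive matches using the table."""
    if not table:
        return text
    # Longest keys first, so a multi-word entry wins over a shorter one it contains.
    keys = sorted(table, key=len, reverse=True)
    pattern = re.compile(r"\b(" + "|".join(re.escape(k) for k in keys) + r")\b")
    # A single pass means one entry's replacement is never re-matched by another.
    return pattern.sub(lambda m: table[m.group(1)], text)
```

Matching whole words keeps “AI” inside a word like “SAID” untouched, and the single combined pattern avoids one substitution feeding into the next.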

This step becomes critical if you are producing professional content like tutorials, product demos, or headshot generator explainers. Small pronunciation errors reduce trust quickly.

Step 4: Timing, Pauses, and Audio Cleanup (Alignment Layer)

Once the voice sounds correct, export your audio and move into timing adjustments.

Listen to the full audio without visuals first. Identify:

- Where pauses feel too short

- Where sentences feel rushed

- Where emphasis is missing

Then make adjustments:

- Insert small gaps between sections

- Trim unnecessary silence

- Split long segments into smaller clips
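If your voice tool exports one file per section, the trim-and-gap pass can be scripted with Python's standard `wave` and `array` modules. This is a rough sketch that assumes 16-bit mono WAV files; the silence threshold and gap length are assumptions to tune by ear, and a real editor or a dedicated audio library will give you finer control.

```python
import array
import wave

# Assumption: 16-bit mono PCM WAV. array("h") uses native byte order, which
# matches WAV's little-endian samples on common (x86, ARM) machines.


def read_mono_wav(path: str) -> tuple[array.array, int]:
    """Read a 16-bit mono WAV file into (samples, sample_rate)."""
    with wave.open(path, "rb") as wf:
        if wf.getnchannels() != 1 or wf.getsampwidth() != 2:
            raise ValueError("expected 16-bit mono WAV")
        rate = wf.getframerate()
        samples = array.array("h", wf.readframes(wf.getnframes()))
    return samples, rate


def write_mono_wav(path: str, samples, rate: int) -> None:
    """Write samples as a 16-bit mono WAV file."""
    with wave.open(path, "wb") as wf:
        wf.setnchannels(1)
        wf.setsampwidth(2)
        wf.setframerate(rate)
        wf.writeframes(array.array("h", samples).tobytes())


def trim_silence(samples, threshold: int = 500):
    """Drop leading and trailing samples quieter than threshold.

    500 out of 32767 is roughly -36 dBFS; this is an assumed starting point.
    """
    start = 0
    while start < len(samples) and abs(samples[start]) < threshold:
        start += 1
    end = len(samples)
    while end > start and abs(samples[end - 1]) < threshold:
        end -= 1
    return samples[start:end]


def join_with_gaps(segments, rate: int, gap_seconds: float = 0.3) -> array.array:
    """Concatenate segments with a fixed silent gap between each pair."""
    gap = array.array("h", [0] * int(round(rate * gap_seconds)))
    out = array.array("h")
    for i, segment in enumerate(segments):
        if i:
            out.extend(gap)
        out.extend(segment)
    return out
```

A typical use is to read each exported section, trim it, join the sections with a gap, and write the result back out. A hard threshold can clip soft word onsets, so listen to the output and lower the threshold if a first consonant disappears.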

If your video comes from an image to video pipeline, your visuals may have fixed durations. In that case, adjust audio to fit the visuals, not the other way around.

Also, if you used an image editor or image upscaler earlier in your workflow, make sure visuals are final before syncing. Any change in clip length will break alignment.
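When each visual clip has a fixed length, the decision per clip is mechanical: pad short audio with silence, nudge slightly long audio faster, and send anything much longer back to the script. The Python sketch below is hypothetical; the 10% speed-up ceiling is an assumption consistent with the advice in this guide that speeding up a voice costs clarity.

```python
# Assumption: speeding narration up by more than about 10% starts to hurt
# clarity, so larger overruns are sent back to the script instead.
MAX_SPEEDUP = 1.1


def fit_plan(audio_seconds: list[float], clip_seconds: list[float],
             max_speedup: float = MAX_SPEEDUP) -> list[tuple[str, float]]:
    """Suggest how to make each audio segment fit its fixed-length clip.

    Returns one (action, amount) pair per clip:
      ("pad", s)       add s seconds of silence
      ("speed_up", f)  play the audio at f times its current speed
      ("rewrite", s)   cut roughly s seconds of script
    """
    if len(audio_seconds) != len(clip_seconds):
        raise ValueError("need one audio segment per clip")
    plan = []
    for audio, clip in zip(audio_seconds, clip_seconds):
        if clip <= 0:
            raise ValueError("clip durations must be positive")
        if audio <= clip:
            plan.append(("pad", round(clip - audio, 3)))
        elif audio / clip <= max_speedup:
            plan.append(("speed_up", round(audio / clip, 3)))
        else:
            plan.append(("rewrite", round(audio - clip, 3)))
    return plan
```

Padding is preferred over slowing down because it leaves the delivery you already approved untouched; only overruns trigger a change to the voice itself.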

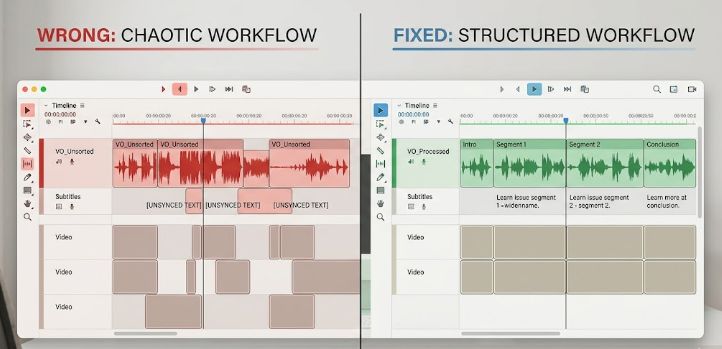

Step 5: Sync Voiceover to Video (Integration Layer)

This is where everything comes together. There are two main approaches, and choosing the right one depends on your content.

Standard voiceover workflow:

You import your audio into a timeline and align it with visuals manually. This works best for:

- Tutorials

- Product walkthroughs

- Text to video outputs

You control timing by adjusting clips, adding cuts, or inserting transitions.
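Even when you align clips by hand, it helps to check where each narration segment lands relative to the cuts. This hypothetical Python helper assumes one segment per visual cut and that you have already read the cut timestamps and segment lengths from your editor; it only reports problems and does not edit anything.

```python
def place_segments(cut_times: list[float], durations: list[float],
                   video_end: float) -> list[tuple[float, float, float]]:
    """Place one narration segment at each visual cut.

    Returns (start, end, overrun) per segment, where overrun is how many
    seconds the segment runs past the next cut (or the end of the video).
    """
    if len(cut_times) != len(durations):
        raise ValueError("need one narration segment per visual cut")
    limits = list(cut_times[1:]) + [video_end]
    placed = []
    for start, duration, limit in zip(cut_times, durations, limits):
        end = start + duration
        placed.append((start, end, max(0.0, round(end - limit, 3))))
    return placed


if __name__ == "__main__":
    cuts = [0.0, 4.0, 9.0]        # where each visual beat starts (seconds)
    narration = [3.5, 5.5, 2.0]   # length of each voiceover segment (seconds)
    for start, end, overrun in place_segments(cuts, narration, video_end=12.0):
        note = f"runs {overrun}s into the next shot" if overrun else "fits"
        print(f"{start:5.1f} to {end:5.1f}s: {note}")
```

With the sample values, only the second segment is flagged: it ends at 9.5 seconds, half a second after the next cut.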

Avatar or lipsync workflow:

Use tools like Magic Hour Lip Sync or Magic Hour Talking Photo to match voice with a face.

This approach is better when:

- You want a human-like presenter

- You are creating social or viral content

- You are using formats like talking photo or face swap gif

One important detail: lipsync works best with shorter phrases. If your script is too dense, the mouth movement will look unnatural.
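Splitting a script into short lipsync lines can be automated as a first pass. The Python sketch below is hypothetical: it breaks text at commas and sentence ends, merges short clauses, and hard-splits anything longer than an assumed 12-word limit, so review the output because hard splits can land mid-phrase.

```python
import re

# Assumption: about 12 words per line keeps mouth movement believable.
MAX_WORDS = 12


def chunk_for_lipsync(script: str, max_words: int = MAX_WORDS) -> list[str]:
    """Break a script into short lines suitable for lipsync or talking photo."""
    # Split after clause or sentence punctuation, keeping the punctuation.
    clauses = [c.strip() for c in re.split(r"(?<=[.!?,;:])\s+", script) if c.strip()]
    chunks: list[str] = []
    current: list[str] = []

    def flush() -> None:
        nonlocal current
        if current:
            chunks.append(" ".join(current))
            current = []

    for clause in clauses:
        words = clause.split()
        # A clause that is too long on its own is hard-split by word count.
        while len(words) > max_words:
            flush()
            chunks.append(" ".join(words[:max_words]))
            words = words[max_words:]
        # Start a new line if this clause would push the current one over.
        if len(current) + len(words) > max_words:
            flush()
        current.extend(words)
        # Always end a line at the end of a sentence.
        if current[-1][-1] in ".!?":
            flush()
    flush()
    return chunks
```

Short clauses are merged rather than emitted one by one, so a line like “So here’s the thing, keep it short, and pause often.” stays whole at the default limit but splits into three lines with `max_words=5`.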

Step 6: Export and Test Variants (Optimization Layer)

Do not assume your first version is the best.

Export multiple variations:

- Slightly slower vs slightly faster pacing

- Different voice tones

- Shortened versions for social
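Testing variants is easier when every combination is generated and named systematically, so you can compare results without guessing which file is which. A small Python sketch; the speed values and voice preset names are hypothetical placeholders, and three speeds times two voices times two lengths already yields 12 exports, so keep each list short.

```python
from itertools import product

# Hypothetical settings: substitute the speed values and voice presets your
# tool actually offers.
SPEEDS = [0.95, 1.0, 1.05]
VOICES = ["calm", "energetic"]
LENGTHS = ["full", "short"]


def variant_names(project: str, speeds=SPEEDS, voices=VOICES, lengths=LENGTHS) -> list[str]:
    """One consistent file name per combination of voice, speed, and length."""
    return [
        f"{project}_{voice}_{speed:.2f}x_{length}"
        for speed, voice, length in product(speeds, voices, lengths)
    ]


if __name__ == "__main__":
    for name in variant_names("launch_video"):
        print(name)
```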

If you are producing meme or GIF-style clips, a timing difference of even one second can noticeably change how a clip performs.

Also test across formats:

- Full video

- Short clips

- Looping content

If you are experimenting with clothes-swap or face swap visuals, test how the voice tone affects perceived realism.

Common Mistakes + Fixes

Most issues with AI voiceover are predictable. Here are the ones that come up most often, along with how to fix them quickly.

1. Voice sounds robotic or unnatural

This usually comes from the script, not the tool. Long sentences, complex phrasing, or too much information per line will force awkward delivery.

Fix: Rewrite the script into shorter sentences with clear pauses. Read it out loud once: if it feels hard to say, it will sound unnatural.

2. Wrong pronunciation (numbers, acronyms, names)

AI often misreads things like “2026,” “AI,” or brand names. This breaks credibility fast, especially in tutorials or product demos.

Fix: Control pronunciation directly in the script. Spell numbers out, split acronyms (e.g. “A I”), or use phonetic spelling for tricky words.

3. Voiceover doesn’t match the video timing

This happens a lot in image to video workflows where visuals are generated first and timing shifts later.

Fix: Lock your visuals before final voice generation, or edit the audio (trim, split, add pauses) to match the final timeline.

4. Pacing feels too fast or rushed

Many creators try to shorten videos by speeding up the voice, but this usually costs clarity and can hurt retention.

Fix: Slightly slow down the voice and add micro-pauses between ideas. Clear delivery performs better than fast delivery.

5. Lipsync or talking photo looks off

When using talking photo or lipsync tools, long sentences create unnatural mouth movement.

Fix: Break dialogue into shorter chunks. Keep each line tight and conversational so it aligns better with facial motion.

6. Tone doesn’t match the content

Using an energetic voice for tutorials or a flat voice for ads creates a mismatch that feels off.

Fix: Choose voice style based on intent. Educational = calm and clear. Marketing = more dynamic. Stay consistent across the video.

7. Over-editing or over-processing

Adding too many effects, speed changes, or emotional tweaks can make the voice feel artificial.

Fix: Keep adjustments minimal. Focus on script quality and timing first, then apply light refinements only where needed.

“Good Result” Checklist

A strong AI voiceover should meet these criteria:

The voice sounds natural at normal listening speed.

There are clear pauses between ideas.

Pronunciation is consistent, including numbers and acronyms.

The audio aligns with visuals without noticeable lag.

The tone matches the content type.

If you are using lipsync or talking photo formats, also check that mouth movement feels believable and not rushed.

Variations

1. Talking Photo Voiceover (Minimal Input, High Engagement)

This is one of the simplest and most effective formats right now.

Instead of creating a full video, you start with a single image and animate it using talking photo tools. The voiceover becomes the primary storytelling layer.

This works well for:

- Educational snippets

- Historical storytelling

- Character-based content

The key challenge here is synchronization. Because the visual is static, all perceived motion comes from the face. That means:

- Sentences must be short

- Pauses must be frequent

- Emphasis must be clear

If you push too much information into one sentence, the result feels unnatural immediately.

2. Short-Form and Meme Content (Timing-Driven Workflow)

For short-form content, voiceover is not just narration. It is part of the pacing system.

When working with meme generator formats, the voice often drives the entire structure. You may need to:

- Add exaggerated pauses

- Delay punchlines intentionally

- Sync voice with visual cuts

Combining voice with elements like emoji overlays or quick transitions can amplify engagement, but only if timing is precise.

In this format, you should generate multiple versions of the same voiceover with slightly different pacing and test them.

3. Product and Feature Demos (Clarity-First Workflow)

For product demos, your goal is clarity and trust.

Voiceover should:

- Match exactly what is shown on screen

- Avoid unnecessary emotion

- Maintain consistent pacing

If you are demonstrating tools such as a clothes swapper, face swap, or an image editor, timing becomes critical. The voice must align with each visual change.

A common mistake is describing something before or after it appears. This creates cognitive friction.

4. Image-to-Video Pipelines (Layered AI Workflow)

Many creators now start with static visuals and build up.

A typical pipeline looks like:

- Generate visuals with a free image generator

- Enhance quality using an image upscaler

- Convert into motion using image to video

- Add narration at the final stage

The key insight here is sequencing. Voiceover should always come after visuals are finalized.

If you generate voice too early, you will need to redo it every time visuals change.
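The ordering argument can be made concrete: in a linear pipeline, changing any stage invalidates every stage after it. This tiny Python sketch uses hypothetical stage names; it shows why putting voiceover last means a voice tweak never forces you to regenerate visuals, while any visual change does force a new voiceover.

```python
# Hypothetical stage names for the pipeline described above, in order.
PIPELINE = ["image", "upscale", "image_to_video", "voiceover"]


def stages_to_redo(changed: str, pipeline: list[str] = PIPELINE) -> list[str]:
    """Every stage after the changed one depends on it and must be redone."""
    if changed not in pipeline:
        raise ValueError(f"unknown stage: {changed}")
    return pipeline[pipeline.index(changed) + 1:]
```

For example, `stages_to_redo("upscale")` returns `["image_to_video", "voiceover"]`, while `stages_to_redo("voiceover")` returns an empty list.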

5. Social Avatar + Face Swap Content (Hybrid Workflow)

This is a more advanced variation that combines multiple AI layers.

You can:

- Generate a voiceover

- Apply it to an avatar using lipsync

- Enhance engagement using face swap or face swap gif formats

Some creators also experiment with free online tools that replace a face in a video to create variations quickly.

The challenge here is consistency. Voice, face, and motion must feel like they belong together. If one element feels off, the entire output breaks.

6. Looping Content for GIFs and Shorts (Micro-Optimization)

If your goal is looping content, such as clips made with a gif generator, voiceover must be structured differently.

You need:

- A clean loop point

- No abrupt endings

- A rhythm that resets naturally

This often means rewriting the script entirely for looping instead of reusing a standard narration.
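For loops, one practical approach is to make the narration exactly one loop long and soften both edges so the wrap-around does not click. The Python sketch below works on raw 16-bit samples (for example, read with the standard `wave` module) and assumes the loop length comes from your visual clip; the 50 ms fade is an assumed default to adjust by ear. It only handles the technical join; the natural rhythm still has to come from the script.

```python
# Assumption: 16-bit mono samples, as in a standard PCM WAV file.


def make_loopable(samples: list[int], rate: int, loop_seconds: float,
                  fade_seconds: float = 0.05) -> list[int]:
    """Pad narration to exactly one loop length and fade its edges.

    The short linear fades at the start and end of the narration avoid an
    audible click where the loop wraps around.
    """
    target = int(round(loop_seconds * rate))
    if len(samples) > target:
        raise ValueError("narration is longer than the loop; shorten the script")
    out = list(samples) + [0] * (target - len(samples))
    fade = min(int(round(fade_seconds * rate)), len(samples) // 2)
    for i in range(fade):
        gain = i / fade
        out[i] = int(out[i] * gain)   # fade in from silence
        j = len(samples) - 1 - i
        out[j] = int(out[j] * gain)   # fade out to silence
    return out
```

Raising an error when the narration is too long is deliberate: squeezing audio into a loop by speeding it up runs into the same clarity problems described earlier.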

A More Advanced Workflow (Optional)

Once you are comfortable, you can combine multiple AI layers.

Start with a free image generator to create visuals.

Enhance frames using an image upscaler.

Convert to motion using image to video.

Add narration using Magic Hour Voice Generator.

Sync with lipsync or talking photo tools.

This creates a full pipeline from static asset to narrated video without traditional production.

FAQs

What is the best way to add voiceover to video with AI?

The simplest method is to write a script, generate audio using an AI voice tool, and sync it to your video. Tools like Magic Hour Voice Generator make this process fast and flexible.

Can I create voiceovers for free?

Yes, many tools offer free tiers. However, free plans may limit voice quality, export length, or usage rights.

Is AI voiceover good enough for professional content?

In many cases, yes. For tutorials, ads, and social content, a well-edited AI voiceover can be hard to distinguish from a human recording.

How do I fix robotic-sounding voiceovers?

Focus on script quality first. Short sentences, natural phrasing, and proper pauses improve output more than changing voice settings.

Should I use voiceover or lipsync avatars?

Use voiceover for clarity and speed. Use lipsync or talking photo formats when you want a more engaging, human-like presentation.

Can I combine voiceover with other AI formats?

Yes. Many creators combine voiceover with image to video, face swap gif, or meme generator workflows to create more dynamic content.

Final Thoughts

Adding voiceover to video with AI is no longer a technical challenge. The real advantage comes from how you structure your workflow and refine your output.

If you only focus on generating audio, your results will feel average. If you control script quality, pacing, and sync, your videos will stand out immediately.

Start simple. Test small variations. Then build a workflow that fits your content style.