Best AI Video Extenders (2026): Extend Clips Without Re-shooting

TL;DR

- AI video extenders work best for short fixes (ending extension, simple transitions), but quality drops in longer or complex scenes.

- Expect common issues like face drift, motion errors, and weak audio support, especially in character-heavy or dynamic clips.

- Treat results as iterative: you’ll often need multiple generations and light editing to get production-ready output.

Intro

AI video tools have moved from novelty to practical editing utilities. One of the most useful capabilities right now is extending existing clips without reshooting. Whether you need to fix an abrupt ending, add breathing room for transitions, or expand the frame beyond what was originally captured, an AI video extender can save hours of production time.

Choosing the right tool is not trivial. Some tools extend time, meaning they generate new frames after your clip ends. Others extend space, meaning they expand the edges of the frame through outpainting. A few try to do both, but the results vary depending on motion, lighting, and subject complexity.

This guide compares the best AI video extender tools in 2026. It focuses on real use cases like ending extension, transition filler, and background outpaint. It also highlights where these tools break, because generative extension is powerful but still imperfect.

Best AI Video Extenders at a Glance

| Tool | Best For | Extension Type | Platforms | Free Plan | Starting Price |
| --- | --- | --- | --- | --- | --- |
| Magic Hour | Overall ease + speed | Time + basic outpaint | Web | Yes | Free / Paid tiers |
| Runway | Cinematic continuity | Time | Web | Limited | Paid plans |
| Pika | Creative generative extend | Time | Web | Yes | Paid tiers |
| Luma | Depth-aware outpaint | Frame/space | Web | Limited | Paid |
| Kling | Long-form continuation | Time | Limited access | No clear free plan | TBD |
| Adobe Premiere Pro | Editing workflow integration | Timeline-based | Desktop | No | Subscription |

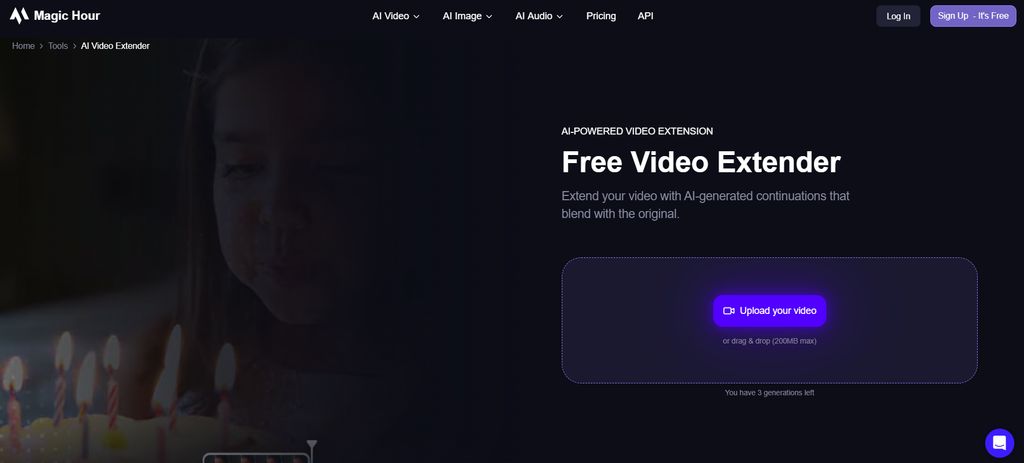

Magic Hour

What it is

Magic Hour is a web-based AI video extender designed to help creators extend clips without reshooting. It focuses on generating additional frames at the end of a video or slightly expanding scenes with minimal setup. The tool is built around speed and accessibility rather than deep technical controls.

It integrates multiple AI workflows beyond simple extension, including image to video, video-to-video transformation, and basic generative edits. This makes it more of a lightweight creative suite than a single-purpose tool. Users can move between generation and extension without switching platforms.

The interface is intentionally simplified. Most users can upload a clip, choose an extension mode, and generate results within minutes. This makes it appealing for marketers, social media creators, and small teams that prioritize turnaround time over fine-tuned control.

Magic Hour also fits well into broader AI content pipelines. It can complement workflows like text to video generation or quick iterations from a free image generator, making it useful in multi-step content creation processes.

Pros

- Very fast generation speed compared to most competitors

- Simple interface with minimal learning curve

- Supports multiple workflows beyond extension

- Accessible pricing structure

Cons

- Limited control over motion and physics

- Less consistent with complex multi-subject scenes

- Not optimized for cinematic-grade outputs

Deep evaluation

Magic Hour performs best when the task is clearly defined and relatively simple, such as extending a clip by a few seconds to avoid abrupt endings. In these cases, it delivers consistent results with minimal artifacts. The model tends to prioritize visual continuity over creative variation, which is often the correct tradeoff for production use. Compared to more experimental tools, it feels stable and predictable.

Where Magic Hour starts to show limitations is in scenes with layered motion, such as multiple people interacting or fast camera movement. The generated frames may preserve overall composition, but subtle inconsistencies appear in object motion or timing. This is not unique to Magic Hour, but the lack of advanced controls means users cannot easily correct these issues within the tool itself.

Another important factor is how Magic Hour balances speed and quality. It is noticeably faster than tools like Runway, which makes it more suitable for high-volume workflows. For example, if a marketer needs to extend dozens of clips for ads, the time savings become significant. However, this speed comes at the cost of slightly less refined motion compared to more advanced models.

The tool also integrates well into broader AI ecosystems. It can be used alongside workflows like face swap, talking photo generation, or even quick meme generator pipelines. This flexibility makes it more valuable as part of a system than as a standalone solution. It is particularly effective when the initial content is generated in another tool and then extended in Magic Hour.

In comparison to competitors, Magic Hour positions itself as a practical tool rather than a cutting-edge research product. It does not aim to produce the most advanced generative outputs, but it consistently delivers usable results. For most creators and teams, this reliability is more important than pushing the limits of generative extend.

Price

Basic - Free

Creator - $10/month (billed annually at $120/year)

Pro - $30/month (billed annually at $360/year)

Business - $66/month (billed annually at $792/year)

Best for

Creators and teams who need fast, reliable video extension without complex setup

Runway

What it is

Runway is a more advanced AI video platform focused on high-quality generative video and scene continuation. It is widely used in creative industries and offers a range of tools beyond simple extension, including full video generation and editing.

The platform emphasizes motion consistency and cinematic output. Its models are designed to understand how scenes evolve over time, making it more suitable for extending clips with camera movement or complex compositions.

Runway operates primarily through a web interface, but it behaves more like a professional creative tool than a simple utility. Users often need to experiment with prompts and settings to achieve optimal results.

It is also frequently used in workflows involving lipsync, character animation, and storytelling. This positions it as a comprehensive creative platform rather than a focused AI video extender.

Pros

- Strong motion consistency

- Better handling of complex scenes

- High-quality cinematic outputs

- Broad feature set

Cons

- Slower generation speed

- Requires experimentation

- Higher cost compared to simpler tools

Deep evaluation

Runway stands out primarily because of its ability to maintain motion continuity across frames. When extending clips that involve camera movement, such as pans or tracking shots, it performs significantly better than most competitors. The generated frames tend to follow the original motion trajectory rather than resetting or drifting, which is critical for cinematic use cases.

However, this quality comes with tradeoffs. Runway requires more user input and iteration to achieve optimal results. Unlike simpler tools, it does not always produce usable outputs on the first attempt. Users often need to adjust prompts, regenerate segments, and experiment with different settings. This makes it less suitable for high-volume workflows where speed is a priority.

Another key strength is its flexibility. Runway is not limited to extension; it can generate entirely new scenes, modify existing ones, and integrate with other creative workflows. This makes it particularly useful for projects that involve storytelling, character animation, or complex visual narratives. It can also work alongside tools like headshot generator systems or character-based pipelines.

In terms of output quality, Runway often produces more natural motion and lighting transitions than faster tools. This is especially noticeable in scenes with dynamic elements, such as people walking or interacting with objects. However, it is not immune to common AI issues like face drift or physics inconsistencies, especially in longer extensions.

Compared to Magic Hour, Runway is clearly positioned as a higher-end solution. It sacrifices speed and simplicity in favor of control and quality. For professional creators, this tradeoff is often worthwhile. For casual users or marketers, it may feel unnecessarily complex.

Best for

Filmmakers and creators who prioritize motion quality and cinematic continuity

Pika

What it is

Pika is an AI video generation and extension tool focused on creative and stylized outputs. It allows users to extend clips in ways that are not strictly realistic, often producing visually interesting or exaggerated results.

The platform is designed for experimentation. Users can quickly generate variations and explore different directions without needing precise control over every detail. This makes it popular among social media creators.

Pika operates through a web interface and is optimized for speed and iteration. It is less about accuracy and more about creative exploration.

It is often used in workflows involving GIF generator outputs, short-form videos, and visual experiments that prioritize engagement over realism.

Pros

- Fast iteration speed

- Highly creative outputs

- Easy to use

- Good for social content

Cons

- Less realistic results

- Inconsistent continuity

- Limited control over details

Deep evaluation

Pika’s main strength is its ability to produce visually engaging extensions that feel intentional rather than broken. When extending a clip, it often introduces stylistic changes that enhance the content rather than strictly preserving the original scene. This makes it particularly useful for creative projects where realism is not the primary goal.

However, this same strength can become a limitation in professional workflows. Pika does not prioritize continuity in the same way as tools like Runway. As a result, extended clips may drift in style, lighting, or composition. For narrative content or brand-focused videos, this inconsistency can be problematic.

Another important factor is how quickly users can iterate. Pika allows rapid generation of multiple variations, which is ideal for testing different creative directions. This is especially useful in environments where content needs to be produced quickly, such as social media or marketing campaigns. It also pairs well with meme generator workflows and short-form content strategies.

In terms of technical capability, Pika is less advanced than some competitors. It does not handle complex motion or multi-subject scenes particularly well. However, it compensates for this by embracing a more flexible and forgiving approach to generation. Users are encouraged to experiment rather than optimize.

Compared to other tools, Pika occupies a unique position. It is not the best choice for realism or precision, but it excels in creative contexts. For users who value originality and speed over accuracy, it can be a powerful addition to their toolkit.

Best for

Creators focused on social media, memes, and creative experimentation

Luma

What it is

Luma is an AI tool focused on spatial understanding and 3D-aware generation. Unlike most video extenders, it specializes in expanding the frame rather than extending time.

It uses depth estimation to generate new content beyond the original boundaries of a video. This makes it particularly useful for resizing or reframing content.

The platform is designed for users who need to adapt videos to different formats, such as converting vertical clips to horizontal layouts.

It also integrates well with workflows involving image upscaler tools and image editor pipelines, where visual quality and composition are critical.

Pros

- Strong depth awareness

- Excellent for outpainting

- Useful for format conversion

- High-quality background generation

Cons

- Not focused on time extension

- Limited motion generation

- Requires specific use cases

Deep evaluation

Luma stands out because it approaches video extension from a spatial perspective rather than a temporal one. Instead of generating new frames after a clip ends, it expands the visible area of each frame. This makes it fundamentally different from tools like Magic Hour or Runway.

This capability is particularly valuable in modern content workflows, where videos often need to be adapted across multiple formats. For example, an editor can turn a vertical video into a horizontal one without cropping important elements. Luma can generate new background content that feels consistent with the original scene, which is difficult to achieve manually.

However, Luma is not a replacement for traditional AI video continuation tools. It does not handle motion extension in the same way, and it is not designed to generate new sequences of action. Users need to understand this distinction to use it effectively.

Another important consideration is quality consistency. Luma performs well when the scene has clear depth and structure, but it may struggle with abstract or highly dynamic content. The generated areas can sometimes appear slightly less detailed than the original footage.

Compared to other tools, Luma fills a specific niche. It is not the most versatile option, but it is highly effective within its domain. For users who need frame expansion rather than time extension, it is one of the best available solutions.

Best for

Editors and creators who need background extension and format adaptation

Kling

What it is

Kling is an advanced generative video model focused on long-form continuation. It aims to extend videos beyond short clips, pushing toward more complete scene generation.

The tool is still evolving and not as widely accessible as others. However, it represents a more experimental approach to AI video extension.

Kling focuses on generating new sequences rather than simply extending existing ones. This makes it closer to full video generation than traditional extension tools.

It is often discussed in the context of future workflows, where AI handles entire scenes rather than small edits.

Pros

- Capable of longer extensions

- Strong generative capabilities

- Represents future direction of AI video

Cons

- Unpredictable results

- Limited availability

- Not production-ready

Deep evaluation

Kling is fundamentally different from most tools in this category because it attempts to bridge the gap between extension and full generation. Instead of adding a few seconds to a clip, it can generate entirely new sequences that continue the original scene. This makes it one of the most ambitious tools currently available.

However, this ambition comes with significant challenges. The outputs are often less predictable than those from more constrained tools. While it can produce impressive results, it can also generate sequences that diverge significantly from the original content. This makes it difficult to use in controlled production environments.

Another key aspect is its potential rather than its current performance. Kling demonstrates what AI video continuation could look like in the future, but it is not yet optimized for reliability. Users need to approach it as an experimental tool rather than a practical solution.

In terms of workflow integration, Kling is less developed than other tools. It does not yet fit seamlessly into existing pipelines, which limits its usability for most creators. However, this may change as the technology matures.

Compared to other tools, Kling is the least predictable but also the most forward-looking. It is not the best choice for current production needs, but it is worth watching as the field evolves.

Best for

Advanced users exploring long-form generative video and future workflows

Adobe Premiere Pro

What it is

Adobe Premiere Pro is a professional video editing software that integrates AI features into a traditional editing workflow. It is not a dedicated AI video extender but offers tools that can achieve similar results.

The platform is widely used in professional production environments. It provides full control over editing, including timeline-based adjustments and effects.

Adobe has introduced AI-powered features to assist with tasks like content-aware fill and scene extension. These features complement manual editing rather than replacing it.

Premiere Pro is often used alongside other AI tools, acting as the final stage in a production pipeline.

Pros

- Full editing control

- Reliable and stable

- Industry-standard software

- Integrates with other Adobe tools

Cons

- Not fully automated

- Requires manual work

- Subscription cost

Deep evaluation

Adobe Premiere Pro approaches video extension from a fundamentally different perspective than generative tools. Instead of relying entirely on AI to generate new content, it combines AI-assisted features with manual editing control. This makes it more reliable in professional contexts where precision is critical.

One of its main strengths is flexibility. Editors can use AI features to fill gaps or extend scenes, but they retain full control over the final output. This is particularly important in projects where consistency and quality must be maintained across multiple clips.

However, this approach also requires more effort. Unlike one-click AI tools, Premiere Pro demands time and expertise. Users need to understand editing techniques and workflows to achieve the best results. This makes it less accessible for beginners.

Another advantage is integration. Premiere Pro works seamlessly with other Adobe tools, allowing for a complete production pipeline. It can be combined with AI-generated content from other platforms and refined within a professional editing environment.

Compared to pure AI tools, Premiere Pro is less innovative in terms of generative capability. However, it remains one of the most reliable options for final production. It is best seen as a complement to AI tools rather than a direct competitor.

Best for

Professional editors who need full control and integration within a production workflow

How We Chose These Tools

This list is based on official documentation, product capabilities, and reputable reviews across multiple sources. The focus was on practical performance in real workflows rather than theoretical features.

Key criteria included:

- Extension quality: how natural the generated frames look

- Motion consistency: whether subjects move realistically

- Speed: time to generate results

- Ease of use: how quickly a user can get a usable output

- Flexibility: support for different formats and workflows

- Integration: compatibility with tools like face swap, clothes swapper, and headshot generator pipelines

We also considered how these tools fit into broader creative stacks, including GIF generation, emoji-based content, and video length extension use cases.

Test Cases: What Actually Matters

To evaluate any AI video extender properly, you need to go beyond “it works” and look at how it performs in real scenarios. Most tools look similar on the surface, but they behave very differently depending on what you’re trying to fix or create. These three test cases reflect the most common, high-impact use cases in actual workflows.

1. Ending Extension (Fixing Abrupt Cuts)

This is the most practical and widely used scenario. You have a clip that ends too quickly and need a few extra seconds to make it feel complete or usable in a timeline.

What matters here is temporal consistency. The tool needs to continue motion, lighting, and subject behavior in a believable way. Even small issues like a hand freezing, a face subtly changing, or background elements shifting can break the illusion.

- Magic Hour performs well for short, clean extensions where speed matters

- Runway handles motion-heavy scenes better, especially with camera movement

- Pika works if you are okay with slight stylistic shifts

This use case is especially important for social content, ads, and short-form videos where pacing is critical. It also pairs naturally with workflows like text to video or talking photo generation, where clips are often short and need extension to feel complete.

2. Transition Filler (Bridging Two Clips)

This test case focuses on connecting two separate clips smoothly. Instead of extending an ending, you are generating a short “bridge” that makes the transition feel intentional.

Here, coherence is more important than creativity. The generated frames need to match both the end of clip A and the start of clip B. This includes color grading, motion direction, and scene composition.

- Runway is the strongest for maintaining continuity across transitions

- Magic Hour is faster but may require multiple attempts for perfect alignment

- Adobe Premiere Pro works well if you prefer manual control with AI assistance

This scenario is common in editing workflows, especially when stitching together content from different sources. It becomes more complex when combined with effects like face swap or lipsync, where even small inconsistencies in facial features or timing become noticeable.

3. Background Outpaint (Extending the Frame)

This is about expanding what the camera originally captured. Instead of adding time, you are adding space, which is useful for reframing or adapting content to different aspect ratios.

The key factor here is spatial consistency. The AI needs to understand depth, perspective, and lighting to generate believable surroundings. Poor results often look blurry, repetitive, or disconnected from the original scene.

- Luma is the most reliable for depth-aware outpainting

- Magic Hour offers basic support for simple cases

- Most time-focused tools struggle with this scenario

This use case is critical for repurposing content across platforms, such as turning vertical clips into horizontal formats. It also connects well with image editor and image upscaler workflows, where maintaining visual quality across formats is important.
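To get a feel for how much new image area an outpaint has to invent, the arithmetic can be sketched in a few lines. This is a minimal illustration, not any tool's actual method: the function name `outpaint_padding` is hypothetical, and rounding the new dimension down to an even number is an assumption made because many video encoders prefer even frame sizes.

```python
from fractions import Fraction


def outpaint_padding(width: int, height: int, target_w: int, target_h: int) -> dict:
    """Pixels to generate on each side to reach target_w:target_h without cropping."""
    target = Fraction(target_w, target_h)
    current = Fraction(width, height)

    if target >= current:
        # Target is wider: keep the height and add columns left and right.
        new_width, new_height = int(height * target), height
    else:
        # Target is taller: keep the width and add rows top and bottom.
        new_width, new_height = width, int(width / target)

    # Assumption: round down to even dimensions for encoder friendliness.
    new_width -= new_width % 2
    new_height -= new_height % 2

    extra_w = new_width - width
    extra_h = new_height - height
    return {
        "new_width": new_width,
        "new_height": new_height,
        "left": extra_w // 2,
        "right": extra_w - extra_w // 2,
        "top": extra_h // 2,
        "bottom": extra_h - extra_h // 2,
        # Share of the final frame that did not exist in the source.
        "generated_fraction": 1 - (width * height) / (new_width * new_height),
    }


# A 1080x1920 vertical clip reframed to 16:9 at the same height.
plan = outpaint_padding(1080, 1920, 16, 9)
print(plan["new_width"], plan["left"], plan["right"])
print(f"{plan['generated_fraction']:.0%} of each frame is generated")
```

In this example roughly two thirds of every output frame is generated rather than captured, which helps explain why vertical-to-horizontal conversion is so sensitive to depth and lighting errors.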

Limitations You Should Know

1. Face Drift and Identity Consistency

One of the most common issues is subtle changes in faces over time. Even when the scene looks stable, small shifts in facial structure, expression, or eye direction can appear after extension. This becomes more obvious in close-up shots or character-driven content.

The problem gets worse in workflows like face swap or free online face-replacement tools, where identity consistency is critical. A face might look correct in one frame but slightly off in the next, breaking continuity. Tools are improving, but none fully solve this yet, especially for longer extensions.

2. Motion and Physics Errors

AI models do not truly understand physics. They predict what “looks right” based on training data, which means motion can feel slightly unnatural in extended segments.

You may notice:

- Hands or objects moving inconsistently

- Sudden changes in speed or direction

- Background elements shifting in unrealistic ways

This is especially noticeable in action scenes, camera movement, or interactions between multiple subjects. Tools like Runway handle motion better, but even they are not perfect when the scene becomes complex.

3. Limited Audio Support

Most AI video extender tools focus almost entirely on visuals. When you extend a clip, the audio usually does not extend with it in a meaningful way.

In practice, this means:

- You may get silence after the original clip ends

- Audio loops or glitches can occur

- Dialogue and lipsync may fall out of sync

If your content relies on sound, you will almost always need a separate workflow to edit or regenerate audio. This is important for formats like talking photo or lipsync videos, where audio-visual alignment matters.
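One simple version of that separate audio workflow is to reuse the original soundtrack and pad it with silence so it runs as long as the extended picture. The sketch below only builds an FFmpeg command line; it assumes FFmpeg is installed, and the file names and the helper `build_pad_audio_command` are hypothetical. It relies on FFmpeg's `apad` filter combined with `-shortest`, so the padded audio stops when the video stream ends.

```python
import shlex


def build_pad_audio_command(extended_video: str, original_clip: str, output: str) -> list[str]:
    """Build an FFmpeg command that pairs extended video with silence-padded original audio."""
    return [
        "ffmpeg",
        "-i", extended_video,   # input 0: AI-extended video (picture source)
        "-i", original_clip,    # input 1: original clip (audio source)
        "-map", "0:v:0",        # take video from the extended file
        "-map", "1:a:0",        # take audio from the original file
        "-c:v", "copy",         # do not re-encode the picture
        "-af", "apad",          # pad the audio with trailing silence
        "-c:a", "aac",          # filtered audio has to be re-encoded
        "-shortest",            # stop when the video stream ends
        output,
    ]


# Hypothetical file names, shown for illustration only.
cmd = build_pad_audio_command("extended.mp4", "original.mp4", "extended_with_audio.mp4")
print(shlex.join(cmd))
```

Silence padding only avoids a missing or truncated audio track. Dialogue, music tails, and lipsync still need manual editing or regeneration.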

4. Style and Lighting Inconsistency

Even when the extension looks “correct,” subtle differences in lighting, color grading, or texture can appear between original and generated frames.

These issues include:

- Slight color shifts

- Changes in shadow direction

- Texture smoothing or loss of detail

They are not always obvious at first glance, but become noticeable in longer clips or professional edits. This is particularly relevant if you are combining AI-generated segments with material from an image editor or a free image generator pipeline.
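A crude way to spot color shifts before they reach the edit is to compare the average color of the last original frame with the first generated one. The sketch below is an assumption-laden illustration: frames are represented as plain lists of `(r, g, b)` tuples, the function names are hypothetical, and the threshold of 3 levels per channel is an arbitrary example value rather than a recommended standard.

```python
def mean_rgb(pixels: list[tuple[int, int, int]]) -> tuple[float, float, float]:
    """Average red, green, and blue values over all pixels in a frame."""
    n = len(pixels)
    return tuple(sum(p[c] for p in pixels) / n for c in range(3))


def color_shift(original_frame, generated_frame) -> tuple[float, float, float]:
    """Per-channel difference in mean color (generated minus original)."""
    before = mean_rgb(original_frame)
    after = mean_rgb(generated_frame)
    return tuple(round(a - b, 2) for b, a in zip(before, after))


# Tiny two-pixel "frames" standing in for real image data.
last_original = [(100, 100, 100), (120, 110, 90)]
first_generated = [(104, 100, 96), (124, 110, 86)]

shift = color_shift(last_original, first_generated)
print(shift)  # (4.0, 0.0, -4.0)

THRESHOLD = 3.0  # arbitrary example value, tune per project
if any(abs(channel) > THRESHOLD for channel in shift):
    print("Noticeable color shift: consider a color-match pass")
```

A mean-color comparison will not catch shadow direction or texture smoothing, so it works best as a quick first filter ahead of a visual review.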

5. Duration Limits and Stability

Most tools perform well when extending clips by a few seconds. As you push for longer extensions, the quality tends to drop.

Common patterns include:

- Increased visual drift over time

- Loss of subject consistency

- More frequent artifacts

Some experimental tools attempt longer AI video continuation, but they are less reliable. In most cases, shorter, incremental extensions produce better results than trying to generate long sequences in one go.
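The incremental approach can be planned up front by splitting the total extension into short passes, each starting from the end of the previous result. The helper below is a minimal sketch: `plan_extensions` is a hypothetical name, and the default of 4 seconds per pass is an assumed example value, not a documented limit of any tool covered here.

```python
def plan_extensions(total_seconds: float, max_step: float = 4.0) -> list[float]:
    """Split a desired extension into passes of at most max_step seconds each."""
    if total_seconds <= 0 or max_step <= 0:
        raise ValueError("total_seconds and max_step must be positive")

    steps = []
    remaining = total_seconds
    while remaining > 1e-9:  # tolerance guards against float rounding
        step = min(max_step, remaining)
        steps.append(round(step, 3))
        remaining -= step
    return steps


# Extending a clip by 10 seconds in passes of at most 4 seconds.
print(plan_extensions(10, 4))  # [4, 4, 2]
```

Reviewing the output after each pass makes it possible to regenerate a single drifting segment instead of discarding one long generation.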

Which Tool Is Best for You?

If you want a simple, reliable AI video extender for everyday use, Magic Hour is the most practical choice. It is fast, accessible, and works across multiple use cases.

If you care about cinematic quality and motion consistency, Runway is the better option.

If you are experimenting with creative content or meme-style outputs, Pika is worth exploring.

If your main need is resizing or expanding frames, Luma is the right tool.

If you are working inside a professional editing pipeline, Adobe Premiere Pro remains a strong complement.

The best approach is to test two or three tools with the same clip. Small differences in output quality can have a big impact depending on your use case.

FAQ

What is an AI video extender?

An AI video extender is a tool that generates additional frames or expands the frame of an existing video. It allows you to extend clips without reshooting.

How does AI extend video?

Most tools use generative models trained on video data to predict what comes next in a scene or what exists beyond the frame.

Which tool is best for beginners?

Magic Hour is the easiest to start with due to its simple interface and fast results.

Can AI extend video with audio?

Most tools focus on visuals. Audio usually needs to be edited separately.

Is this the same as text to video?

Not exactly. Text to video creates clips from scratch, while extension builds on existing footage.

What is the difference between time extension and outpainting?

Time extension adds new frames after the clip ends. Outpainting expands the visible frame beyond its original boundaries.

How accurate are these tools?

They are improving quickly, but still have limitations with motion, faces, and complex scenes.