How to Edit Photos With Flux Kontext (2026): 10 Practical Recipes (Retouch, Relight, Replace, Restyle)

TL;DR (3 steps)

- Upload a clean source image into Flux Kontext and define a single clear goal (retouch, relight, replace, or restyle).

- Use structured prompts with constraints (what to change, what to preserve, style limits).

- Iterate in small steps, checking artifacts like edges, lighting consistency, and identity drift before exporting.

What you need (inputs/specs)

To get consistent results with Flux Kontext, the inputs matter more than the prompt itself. Most weak outputs come from poor source images or unclear intent.

Start with a high-quality image. Ideally, your input should be at least 1024px on the shortest side. If your image is smaller, run it through an image upscaler first to avoid texture loss during edits. Clean lighting, visible subject edges, and minimal compression artifacts will significantly improve results.
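If you prepare images with a script, you can check the 1024px rule above before upload. This minimal sketch only computes the target size. `target_size` is a hypothetical helper name, keeping the aspect ratio is an assumption, and the actual enlargement is left to whichever upscaler you use.

```python
def target_size(width: int, height: int, min_short_side: int = 1024) -> tuple[int, int]:
    """Return (width, height) scaled so the shortest side is at least min_short_side.

    Images that already meet the threshold are returned unchanged.
    Aspect ratio is preserved (an assumption; crop separately if you need a fixed shape).
    """
    if width <= 0 or height <= 0:
        raise ValueError("image dimensions must be positive")
    short_side = min(width, height)
    if short_side >= min_short_side:
        return width, height

    def scale(side: int) -> int:
        # Integer ceiling division keeps the math exact, so the short side lands
        # exactly on min_short_side and the long side never rounds down.
        return -(-side * min_short_side // short_side)

    return scale(width), scale(height)


print(target_size(768, 1152))   # (1024, 1536): needs an upscaler pass
print(target_size(2048, 1536))  # (2048, 1536): already large enough
```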

Define your editing goal before writing any prompt. Flux Kontext performs best when you isolate one transformation at a time. For example, do not attempt background replacement, lighting change, and face retouch in a single pass. Treat it like a layered image editor workflow, even though it is prompt-based.

You also need a prompt structure. A good prompt for photo editing with Flux Kontext usually includes:

- What to change

- What to preserve

- Style or realism constraints

- Output expectations (e.g., a natural look, studio lighting, or an editorial look)

Reference images are optional but useful, especially for restyling. They also help if the edited image later feeds an image to video or text to video workflow, where consistency across frames matters.

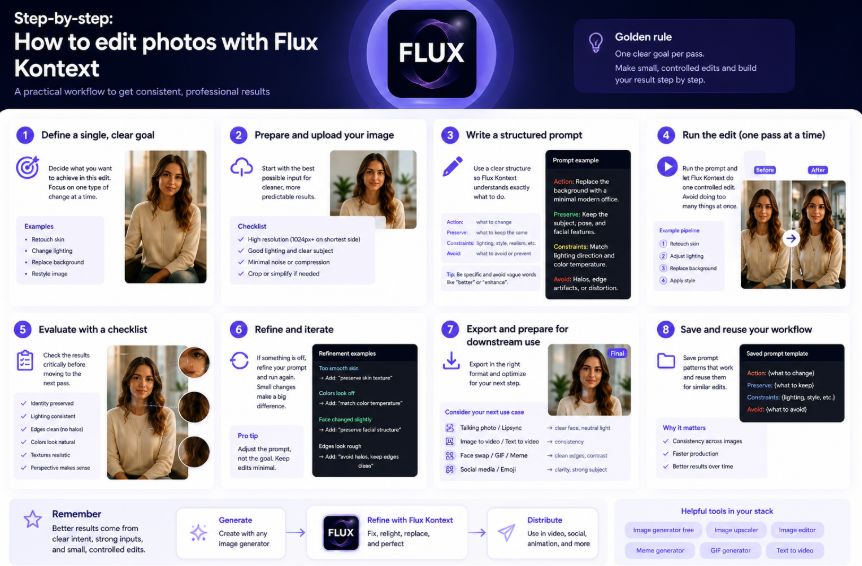

Step-by-step: How to edit photos with Flux Kontext

Step 1: Define a single, clear objective

Before you even upload your image, decide exactly what this edit is supposed to do.

Flux Kontext performs best when each run focuses on one type of transformation. In practice, most edits fall into four categories:

- Retouch (skin, small object cleanup)

- Relight (change lighting conditions)

- Replace (background or object swap)

- Restyle (overall visual style)

For example:

- “Fix skin imperfections” → retouch

- “Change background to a beach” → replace

- “Make this look like a studio portrait” → relight + restyle

The most common mistake is combining everything into one prompt. This usually leads to inconsistent outputs: the background changes, but the face distorts; lighting improves, but textures break.

A better approach is to think in passes:

- Pass 1: retouch

- Pass 2: relight

- Pass 3: replace background
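If you track edits in a script, you can make the pass order mechanical. This sketch sorts the requested categories into the order this guide uses (retouch, relight, replace, restyle) and drops duplicates. The `EditPass` enum and `plan_passes` function are illustrative names, not part of any Flux Kontext API.

```python
from enum import IntEnum


class EditPass(IntEnum):
    """The four edit categories, numbered in the order this guide runs them."""
    RETOUCH = 1
    RELIGHT = 2
    REPLACE = 3
    RESTYLE = 4


def plan_passes(goals: list[str]) -> list[EditPass]:
    """Turn a mixed list of goals into one pass per category, in recommended order.

    An unknown goal raises KeyError, which forces you to decide which of the
    four categories an edit belongs to before running it.
    """
    passes = {EditPass[goal.strip().upper()] for goal in goals}  # set removes duplicates
    return sorted(passes)


print([p.name for p in plan_passes(["replace", "retouch", "relight"])])
# ['RETOUCH', 'RELIGHT', 'REPLACE']
```

A request like "make this look like a studio portrait" becomes `plan_passes(["restyle", "relight"])`, which runs the relight before the restyle.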

This becomes critical if your image is later used in pipelines like talking photo, lipsync, or image to video, where visual consistency matters more than a single “nice-looking” frame.

Step 2: Prepare and normalize your input

Input quality directly affects output quality. If your source image is noisy, low-resolution, or poorly lit, Flux Kontext will carry those issues through and often amplify them.

A simple prep workflow:

- If the image is small → run it through an image upscaler

- If colors look off → lightly correct white balance

- If the background is cluttered → crop or simplify

You should also prefer images with:

- Clear subject separation from background

- Defined lighting direction

- Minimal compression artifacts

These qualities make a big difference for background replacement or clothes-swapper-style edits, where edge detection and subject isolation are critical.

Step 3: Write structured prompts (not long prompts)

How your prompt is structured matters more than how long or elaborate it is.

Instead of writing long, descriptive paragraphs, use a controlled format:

- What to change

- What to preserve

- Constraints (lighting, realism, proportions)

- What to avoid

Example:

“Replace the background with a modern office. Keep the subject unchanged. Match lighting direction and color temperature. Avoid edge artifacts or halos.”

If you skip the “preserve” part, the model may alter your subject. If you don’t specify lighting, the new background won’t match.
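To make the "preserve" part hard to forget, you can capture the four-part structure as a small data type that refuses to render without it. This is a sketch: `EditPrompt` and its field names are hypothetical, and the output is just the prompt text you would paste into or send to Flux Kontext.

```python
from dataclasses import dataclass, field


@dataclass
class EditPrompt:
    change: str                                        # what to change
    preserve: str                                      # what must stay the same
    constraints: list[str] = field(default_factory=list)  # lighting, realism, proportions
    avoid: list[str] = field(default_factory=list)        # artifacts to rule out

    def render(self) -> str:
        # Without a preserve clause the model may alter the subject, so fail early.
        if not self.preserve.strip():
            raise ValueError("state what to preserve")
        parts = [self.change, self.preserve, *self.constraints]
        if self.avoid:
            parts.append("Avoid " + " or ".join(self.avoid) + ".")
        return " ".join(part.strip() for part in parts)


office_swap = EditPrompt(
    change="Replace the background with a modern office.",
    preserve="Keep the subject unchanged.",
    constraints=["Match lighting direction and color temperature."],
    avoid=["edge artifacts", "halos"],
)
print(office_swap.render())
```

This renders exactly the example prompt from this step.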

This structured approach is especially useful when creating assets for meme generator or gif generator workflows, where even small inconsistencies become obvious.

Step 4: Run edits in passes, not all at once

Flux Kontext does not handle multi-goal prompts reliably.

Instead of:

“Retouch skin, change background, adjust lighting, and make it cinematic”

Break it into:

- Retouch skin

- Adjust lighting

- Replace background

- Apply style
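The pass-by-pass approach fits naturally into a loop in which each output becomes the next input. In this sketch, `edit` stands in for whatever function or API call you use to run a single Flux Kontext edit. Its signature is an assumption, not a real library interface. Keeping every intermediate result is what makes errors easy to debug: if pass 3 introduces halos, you rerun pass 3 from the pass-2 result instead of starting over.

```python
from typing import Any, Callable

# Hypothetical: (image, prompt) -> edited image. Swap in your real Flux Kontext call.
EditFn = Callable[[Any, str], Any]


def run_passes(image: Any, prompts: list[str], edit: EditFn) -> list[tuple[str, Any]]:
    """Run one edit per prompt, chaining outputs, and keep each (prompt, result) pair."""
    history: list[tuple[str, Any]] = []
    current = image
    for prompt in prompts:
        current = edit(current, prompt)  # each pass starts from the previous pass's output
        history.append((prompt, current))
    return history


# Stand-in editor that only records which prompts were applied, for illustration.
def fake_edit(image: str, prompt: str) -> str:
    return f"{image} -> {prompt}"


passes = ["Retouch skin", "Adjust lighting", "Replace background", "Apply style"]
history = run_passes("photo.png", passes, fake_edit)
print(history[-1][1])
# photo.png -> Retouch skin -> Adjust lighting -> Replace background -> Apply style
```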

This mirrors how a professional retoucher works in a layered image editor, only faster.

Benefits of this approach:

- Easier to debug errors

- Better control over each visual element

- Less identity drift (important for face swap or headshot generator use cases)

Step 5: Evaluate outputs with a technical checklist

Don’t just ask “does this look good?” Evaluate the result systematically.

Check:

- Edges: any halos or cutout artifacts?

- Lighting: is direction consistent across subject and background?

- Texture: does skin or fabric look natural?

- Identity: does the face still match the original?

- Perspective: does the background feel physically correct?
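If several people review outputs, recording the checklist as data keeps reviews systematic rather than impressionistic. This is a minimal sketch. The check names mirror the list above, and treating an unrecorded check as a failure is a deliberate choice so that nothing gets skipped silently.

```python
# One entry per check from the list above; True in the results means "passed".
CHECKS = {
    "edges": "Any halos or cutout artifacts?",
    "lighting": "Is direction consistent across subject and background?",
    "texture": "Does skin or fabric look natural?",
    "identity": "Does the face still match the original?",
    "perspective": "Does the background feel physically correct?",
}


def failed_checks(results: dict[str, bool]) -> list[str]:
    """Return the checks that failed, in checklist order.

    A check missing from results counts as failed, so a skipped check is never
    mistaken for a pass.
    """
    return [name for name in CHECKS if not results.get(name, False)]


review = {"edges": True, "lighting": False, "texture": True, "identity": True}
for name in failed_checks(review):
    print(f"{name}: {CHECKS[name]}")
```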

Skipping this step leads to compounding errors. A small edge issue in a background replacement becomes very obvious if you later use the image in a face swap gif or animation pipeline.

Step 6: Fix issues by adjusting constraints, not adding noise

When results are off, most people respond by making the prompt longer. That usually makes things worse.

Instead, identify the exact failure and correct it with a targeted constraint:

- Skin too smooth → “preserve skin texture”

- Colors off → “match color temperature”

- Face distortion → “preserve facial structure”

Avoid vague phrases like:

- “make it better”

- “more realistic”

Flux Kontext responds better to precise constraints than to stylistic adjectives.
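You can keep the failure-to-constraint mapping above as a lookup table, so that each fix adds one targeted sentence instead of more adjectives. This is a sketch. The table holds only the three examples from this step, and rejecting the listed vague phrases outright is an illustrative policy, not something Flux Kontext enforces.

```python
# Targeted constraints for the failures named above; extend with your own.
FIXES = {
    "skin too smooth": "Preserve skin texture.",
    "colors off": "Match color temperature.",
    "face distortion": "Preserve facial structure.",
}

# Phrases that add words without adding a constraint.
VAGUE_PHRASES = ("make it better", "more realistic")


def tighten(prompt: str, failures: list[str]) -> str:
    """Append one targeted constraint per observed failure, skipping duplicates."""
    lowered = prompt.lower()
    for phrase in VAGUE_PHRASES:
        if phrase in lowered:
            raise ValueError(f"replace {phrase!r} with a specific constraint")
    additions: list[str] = []
    for failure in failures:
        fix = FIXES[failure]  # KeyError for a failure you have not mapped yet
        if fix not in prompt and fix not in additions:
            additions.append(fix)
    return " ".join([prompt.strip(), *additions])


print(tighten("Adjust lighting to warm golden hour tones.", ["colors off"]))
# Adjust lighting to warm golden hour tones. Match color temperature.
```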

Step 7: Prepare outputs for downstream use

Once your image looks good, think about where it will be used next.

Different use cases require different optimizations:

- Talking photo → clear face, balanced lighting

- Lipsync → accurate facial alignment

- Image to video → consistency across frames

- Social content or emoji → strong subject clarity and contrast
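One way to act on this step is to keep a per-destination list of extra constraints and append it to the final pass. The destinations and wording below paraphrase the list above. Treat them as assumptions to adapt, not fixed requirements of any downstream tool.

```python
# Extra constraints per downstream use, paraphrased from the list above.
DOWNSTREAM_CONSTRAINTS = {
    "talking photo": ["Keep the face clear and unobstructed.", "Use balanced, even lighting."],
    "lipsync": ["Do not change facial alignment or head position."],
    "image to video": ["Keep lighting, color, and identity consistent with the source image."],
    "social": ["Keep the subject clear with strong contrast."],
}


def prepare_for(prompt: str, destination: str) -> str:
    """Append the destination's constraints to a final-pass prompt."""
    return " ".join([prompt.strip(), *DOWNSTREAM_CONSTRAINTS[destination]])


print(prepare_for("Preserve identity and clarity.", "lipsync"))
# Preserve identity and clarity. Do not change facial alignment or head position.
```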

If you skip this step, your image may look fine on its own but break when used in a larger workflow.

For example, an image that looks acceptable as a standalone edit may fail in text to video pipelines because lighting shifts between frames or identity is not stable enough.

Step 8: Build a repeatable workflow

The real advantage of Flux Kontext is not one good result. It’s the ability to repeat results across multiple images.

Once you find a prompt structure that works:

- Save it

- Reuse it

- Adjust only small variables (background, lighting, style)
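Python's standard-library `string.Template` offers one simple way to save a prompt structure and vary only the small variables. In this sketch the template text reuses the Step 3 example, and the `$background` placeholder name is illustrative.

```python
from string import Template

# Saved once, reused for every image; only $background changes.
BACKGROUND_SWAP = Template(
    "Replace the background with $background. Keep the subject unchanged. "
    "Match lighting direction and color temperature. Avoid edge artifacts or halos."
)

for background in ["a modern office", "a bright beach"]:
    # substitute() raises KeyError if a placeholder is left unfilled,
    # which catches incomplete prompts before they reach the model.
    print(BACKGROUND_SWAP.substitute(background=background))
```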

This is how you scale from one-off edits to production workflows.

Whether you’re creating assets for content, marketing, or automation pipelines, consistency is what separates usable outputs from random ones.

10 Practical Flux Kontext Recipes

Below are 10 tested prompt patterns. Each includes input, prompt structure, constraints, and common failure cases.
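Because every recipe has the same four parts, you can store a recipe library as plain records. This sketch encodes recipe 1 below. The `Recipe` type and the choice to append constraints to the prompt as extra sentences are assumptions about how you might use them. The failure list doubles as a review checklist.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recipe:
    name: str
    input_image: str              # what the source image should look like
    prompt: str                   # the prompt pattern
    constraints: tuple[str, ...]  # extra rules, appended to the prompt here
    failures: tuple[str, ...]     # what to look for when reviewing the output

    def full_prompt(self) -> str:
        return " ".join([self.prompt, *(c + "." for c in self.constraints)])


NATURAL_RETOUCH = Recipe(
    name="Natural skin retouch",
    input_image="Close-up portrait with visible skin texture",
    prompt=(
        "Remove blemishes and smooth skin texture. Preserve natural pores and facial "
        "features. Keep original lighting and color tone. Avoid plastic or overly soft skin."
    ),
    constraints=("Keep identity intact", "Do not alter face shape"),
    failures=("Over-smoothing (plastic skin)", "Loss of fine details around eyes"),
)

print(NATURAL_RETOUCH.full_prompt())
```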

1. Natural skin retouch (portrait cleanup)

Input: Close-up portrait with visible skin texture

Prompt pattern:

“Remove blemishes and smooth skin texture. Preserve natural pores and facial features. Keep original lighting and color tone. Avoid plastic or overly soft skin.”

Constraints:

- Keep identity intact

- Do not alter face shape

Common failures:

- Over-smoothing (plastic skin)

- Loss of fine details around eyes

2. Background replacement (clean swap)

Input: Subject with clear edges

Prompt pattern:

“Replace background with a modern office interior. Keep subject unchanged. Match lighting direction and color temperature to subject.”

Constraints:

- Maintain shadow direction

- Preserve edge details

Common failures:

- Halo artifacts around subject

- Mismatched lighting

The same concern applies to face-replacement tools for video, where consistency between layers is critical.

3. Lighting relight (golden hour effect)

Input: Outdoor photo with flat lighting

Prompt pattern:

“Adjust lighting to warm golden hour tones. Add soft directional sunlight from the side. Maintain realistic shadows and skin tones.”

Constraints:

- Avoid color oversaturation

- Preserve detail in highlights

Common failures:

- Orange color cast

- Blown highlights
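You can spot both failure cases in this recipe numerically as well as by eye. The sketch below takes RGB pixels as (r, g, b) tuples with values from 0 to 255, however you load them. The clipping threshold of 250 and the red-minus-blue measure of warmth are rough heuristics chosen for illustration, not calibrated values. Compare the numbers before and after the relight rather than judging either one alone.

```python
Pixel = tuple[int, int, int]


def clipped_fraction(pixels: list[Pixel], threshold: int = 250) -> float:
    """Share of pixels whose brightest channel is at or above threshold (likely blown)."""
    if not pixels:
        raise ValueError("no pixels")
    clipped = sum(1 for r, g, b in pixels if max(r, g, b) >= threshold)
    return clipped / len(pixels)


def warm_bias(pixels: list[Pixel]) -> float:
    """Average red-minus-blue difference; a big jump after relighting hints at an orange cast."""
    if not pixels:
        raise ValueError("no pixels")
    return sum(r - b for r, _, b in pixels) / len(pixels)


before = [(120, 118, 115), (200, 198, 190), (60, 62, 70)]
after = [(170, 130, 80), (255, 230, 170), (110, 85, 50)]
print(clipped_fraction(before), clipped_fraction(after))
print(warm_bias(before), warm_bias(after))
```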

4. Outfit change (clothes swapper style)

Input: Full-body image

Prompt pattern:

“Change outfit to casual streetwear with neutral tones. Preserve body proportions and pose. Maintain fabric realism and lighting consistency.”

Constraints:

- Keep wrinkles and fabric texture

- Avoid floating clothing artifacts

Common failures:

- Unrealistic folds

- Clothing blending into body

5. Expression adjustment (subtle emotion shift)

Input: Neutral face portrait

Prompt pattern:

“Slightly adjust expression to a soft smile. Preserve identity and facial structure. Maintain natural muscle movement.”

Constraints:

- Avoid exaggerated expressions

Common failures:

- Face distortion

- Asymmetry

6. Style transfer (editorial look)

Input: Portrait

Prompt pattern:

“Restyle into high-fashion editorial photography. Maintain subject identity. Use soft studio lighting and neutral background.”

Constraints:

- Keep proportions realistic

Common failures:

- Over-stylization

- Identity drift

7. Product photo cleanup

Input: Product on cluttered background

Prompt pattern:

“Remove background and replace with clean white studio backdrop. Enhance product sharpness. Maintain realistic shadows.”

Constraints:

- Keep edges crisp

Common failures:

- Floating product

- Missing shadow

8. Convert to talking photo asset

Input: Face portrait

Prompt pattern:

“Prepare image for talking photo animation. Ensure neutral expression, clean edges, and balanced lighting. Preserve identity and clarity.”

Constraints:

- Avoid blur

Common failures:

- Inconsistent facial alignment

Useful when preparing assets for lipsync or animation workflows.

9. Social media meme-ready edit

Input: Casual photo

Prompt pattern:

“Enhance image clarity and contrast. Keep natural colors. Prepare for meme generator use with clean composition and subject focus.”

Constraints:

- Avoid over-editing

Common failures:

- Over-sharpening

10. GIF-ready sequence consistency

Input: Series of images

Prompt pattern:

“Ensure consistent lighting, color grading, and subject appearance across frames. Preserve identity and alignment.”

Constraints:

- Maintain frame-to-frame consistency

Common failures:

- Flickering colors

This matters most for GIF generator or face swap GIF content, where flicker is immediately visible.
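Comparing average brightness between consecutive frames is the simplest way to catch flickering. This minimal sketch rests on several assumptions. Frames are lists of (r, g, b) tuples, brightness uses the standard Rec. 601 luma weights, and the jump threshold of 8 levels is an arbitrary starting point to tune. It detects only brightness flicker; hue shifts would need a separate per-channel comparison.

```python
Pixel = tuple[int, int, int]


def mean_luma(frame: list[Pixel]) -> float:
    """Average brightness of a frame using Rec. 601 luma weights."""
    return sum(0.299 * r + 0.587 * g + 0.114 * b for r, g, b in frame) / len(frame)


def flicker_frames(frames: list[list[Pixel]], max_jump: float = 8.0) -> list[int]:
    """Indices of frames whose brightness jumps more than max_jump from the previous frame."""
    lumas = [mean_luma(frame) for frame in frames]
    return [i for i in range(1, len(lumas)) if abs(lumas[i] - lumas[i - 1]) > max_jump]


frames = [[(100, 100, 100)], [(102, 102, 102)], [(140, 140, 140)], [(139, 139, 139)]]
print(flicker_frames(frames))  # [2]
```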

Common mistakes and fixes

1. Overloading a single prompt with too many goals

A common instinct is to combine everything into one instruction: retouch, relight, replace background, and apply a style in a single run. The result often looks unstable or partially correct.

This happens because Flux Kontext has to balance competing instructions at once. When tasks conflict, the model prioritizes inconsistently.

The fix is to break your workflow into passes. Treat each edit as a controlled step, similar to how you would work in an image editor. Start with retouching, then lighting, then background, then styling. This not only improves quality but also makes it easier to debug when something goes wrong. It becomes especially important when your images are later used in pipelines like image to video or lipsync, where small inconsistencies get amplified.

2. Vague prompts that lack constraints

Prompts like “make it better” or “enhance the image” rarely produce reliable results. They leave too much room for interpretation, which leads to unpredictable changes.

Flux Kontext responds best to structured instructions. You need to clearly define what should change and what must stay the same. Without that, the model may alter elements you never intended to touch.

A more effective approach is to always include constraints: specify lighting direction, preserve identity, maintain proportions, and explicitly mention what to avoid. This level of control becomes critical when preparing assets for meme generator or gif generator workflows, where even minor inconsistencies can break the final output.

3. Not preserving identity or key elements

One subtle but serious issue is identity drift. After a few edits, the subject no longer looks like the original, even if each individual step seemed correct.

This usually happens because the prompt never explicitly says what must be preserved. The model assumes flexibility and gradually modifies features over multiple passes.

To fix this, always anchor your prompts with preservation rules such as maintaining facial structure, proportions, or key visual traits. This is essential in use cases like face swap, headshot generator, or talking photo, where accuracy matters more than style.

4. Poor input quality and expecting the model to fix it

Many users try to fix low-resolution, noisy, or poorly lit images purely through prompting. This rarely works well and often introduces artifacts.

Flux Kontext can enhance an image, but it cannot reliably reconstruct missing detail from weak inputs. If the source image is flawed, those flaws will carry through every edit.

The practical fix is to clean your input before editing. Use an image upscaler if resolution is low, correct basic lighting issues, and simplify the composition if needed. A strong input gives you far more control and reduces the need for complex prompts later.

5. Ignoring consistency for downstream use

An image might look good on its own but fail when used in a larger workflow. This is a common oversight, especially when the image is part of a sequence or animation.

The problem usually shows up as inconsistent lighting, slight identity shifts, or mismatched color grading across images. These issues become obvious in formats like face swap gif, text to video, or other multi-frame outputs.

The fix is to think one step ahead. Before finalizing an edit, consider how the image will be used. Optimize for that context by keeping lighting stable, preserving alignment, and avoiding unnecessary stylistic variation. Consistency, not just visual quality, is what makes an image usable in production workflows.

“Good result” checklist

Before exporting, verify the following:

- Subject identity is preserved

- Lighting direction is consistent

- Edges are clean with no halos

- Colors look natural, not oversaturated

- No texture artifacts on skin or fabric

- Background matches perspective and depth

If any of these fail, rerun with tighter constraints instead of adding more instructions.

Variations

You can extend these workflows depending on your goal.

One variation is to create base images with a free image generator, then refine them with precise edits in Flux Kontext.

Another approach is preparing assets for animation pipelines like text to video or emoji-based content systems, where consistency matters more than single-frame quality.

You can also integrate Flux Kontext outputs into image to video workflows, especially for character-driven content where visual consistency across frames is critical.

Where Flux Kontext fits in your workflow

1. The refinement layer between generation and final output

Most AI workflows follow a simple structure: generate → refine → publish. Flux Kontext sits in the middle, where most of the real quality improvements happen.

When you use a free image generator, you often get results that are close but not production-ready. Lighting might be inconsistent, backgrounds messy, or subjects slightly off. Instead of regenerating multiple times, Flux Kontext lets you fix those exact issues with controlled edits.

This shifts your workflow from randomness to precision. Instead of hoping for a perfect generation, you generate once and refine deliberately. That alone makes your pipeline more efficient and predictable, especially when working at scale.

2. The preparation step for downstream use (video, animation, social)

Flux Kontext becomes critical when your image is not the final asset, but an input to something else.

For example:

- In talking photo or lipsync workflows, facial clarity and alignment matter more than style

- In image to video or text to video pipelines, lighting and identity must stay consistent across frames

- In formats like face swap gif or short-form content, small visual errors become very obvious

If you skip this step, downstream tools will amplify flaws. A slightly off face or lighting mismatch might look fine in a static image but will break once animated.

Using Flux Kontext as a preparation layer ensures your images are clean, consistent, and technically ready. It’s less about making the image “look better” and more about making it “work reliably” in the next stage.

3. The consistency engine for repeatable workflows

The biggest difference between one-off results and scalable workflows is consistency. Anyone can get one good image. Producing ten that match in lighting, style, and identity is much harder.

Flux Kontext helps you standardize outputs by applying the same prompt structure across multiple images. Once you define a working pattern, you can reuse it to:

- Clean and align batches of images

- Maintain identity across variations

- Keep visual style consistent for content series

This is especially useful in workflows involving meme generator content, emoji-based assets, or any repeated format where visual coherence matters.

Over time, Flux Kontext stops being just a tool and becomes part of your system logic. It ensures that every image entering your pipeline follows the same rules, which is what makes automation and scaling possible.

FAQs

What is Flux Kontext used for?

Flux Kontext is used for prompt-based image editing. It allows you to retouch, relight, replace, or restyle images without manual editing tools.

How is it different from a traditional image editor?

Instead of manual controls, you describe changes using prompts. This makes it faster for iteration but requires clear instructions.

Can I use it for face swap or animation?

It is not built specifically for face swap or animation, but it works well as a preparation step for those workflows.

What is the best way to write Flux Kontext prompts?

Use structured prompts with clear instructions, constraints, and preservation rules. Avoid vague descriptions.

Can beginners use it effectively?

Yes, but results depend on how clearly you define the task. Start with simple edits before moving to complex transformations.

Does it work for social content like memes or GIFs?

Yes. It is especially useful for preparing clean, consistent images for meme generator or gif generator workflows.