Sora Pricing (2026): How Access Works, Limits, and Best Alternatives

TL;DR

- Sora does not operate like a simple monthly subscription with guaranteed video minutes. Access is typically linked to OpenAI accounts and may involve usage-based billing depending on how you generate video.

- If you mainly need high-fidelity experimental scenes or advanced cinematic prototypes, Sora's output quality can justify its variable cost. If you need predictable monthly budgeting for marketing output, a structured AI video generator with fixed tiers is usually easier to manage.

- For short-form social content at scale, platforms with clear per-plan limits often provide better cost control than compute-based systems.

Intro

If you are researching Sora pricing in 2026, you are likely trying to answer three things: how to get access, how much it actually costs per video, and whether it makes financial sense compared to other AI video tools.

Unlike many subscription-based platforms, Sora does not operate purely on a flat monthly plan with fixed video minutes. Its pricing model is primarily credit-based, and for API users, usage-based. That means your cost depends heavily on how you generate, how often you iterate, and what features you enable.

In this guide, I will break down OpenAI Sora pricing, how Sora 2 credits work, which limits matter in practice, and how to estimate your real budget for 15-second, 30-second, and 60-second videos. I will also compare Sora with other leading AI video generators so you can decide whether it fits your production needs.

If you want a broader market overview, you may also want to review a full pricing comparison of AI video generators before making a long-term commitment.

How Sora Access Works in 2026

What It Is

Sora is a text-to-video and multimodal generative video model developed by OpenAI. It is built to generate coherent video scenes directly from prompts, including complex camera motion, multiple characters interacting in the same frame, and physics-aware environmental behavior. Rather than functioning as a lightweight social clip generator, Sora is positioned more like a research-grade creative engine designed to push the boundaries of generative video realism.

Unlike traditional template-based video platforms, Sora does not rely on drag-and-drop layouts or preset scene blocks. The core interaction model is natural language prompting. You describe a scene in detail, including mood, environment, lighting conditions, character movement, and camera perspective. The model then synthesizes motion, spatial relationships, and visual continuity. This makes it particularly strong for concept prototyping, cinematic experiments, and pre-visualization workflows where creative flexibility matters more than preset formatting.

In practical terms, access to Sora is tied to OpenAI’s broader product ecosystem rather than packaged as a standalone “video SaaS” subscription. Depending on your region, rollout phase, and account tier, you may access Sora through a web interface connected to your OpenAI account or via API integration. This structure means Sora is not always presented with simple monthly plans that guarantee a fixed number of video minutes. Instead, access and usage are typically governed by account-level permissions and credit or usage-based billing models.

Because of this ecosystem-based access model, Sora behaves differently from most consumer-facing AI video tools. If you are using the web interface, your generation capacity is often determined by available credits or account allowances. If you are accessing Sora through the API, usage is calculated per generation and billed accordingly. This makes Sora more comparable to infrastructure-style AI services than to subscription-based creative apps.

Sora is frequently discussed alongside other frontier models such as Google's Veo 3. In that context, it competes on realism, scene coherence, and advanced motion simulation rather than on low per-clip cost or mass social media production. For creators and teams who prioritize visual fidelity and complex scene generation over predictable per-video pricing, this positioning is often the main appeal.

Pros

- High scene coherence across multiple frames

- Strong prompt alignment for complex descriptions

- Advanced motion and camera simulation

- Backed by OpenAI research and infrastructure

Cons

- Access may be limited or gated

- Pricing structure can be less transparent than consumer tools

- Iteration cost can escalate quickly

- Not optimized for bulk social content production

Deep Evaluation

Sora’s core strength lies in generative fidelity. In my testing, it handled layered prompts with spatial relationships more consistently than many mid-tier AI video tools. Scenes involving multiple objects interacting under defined physical constraints showed fewer artifacts and less random drift. That matters for narrative storytelling and pre-visualization.

However, this power comes with workflow friction. Because Sora is not structured like a consumer SaaS tool, you must think in terms of compute allocation and usage. This shifts the mental model from “How many videos can I make this month?” to “How many generations can I afford?” For creators who iterate heavily, that distinction becomes financially significant.

Another dimension is production control. Sora excels at imaginative scene creation but may not offer the same structured UI controls for aspect ratio presets, social export templates, or brand kit management that tools like Runway or Magic Hour provide. If you’re running ads for multiple clients, operational efficiency can matter more than raw generative realism.

From a team perspective, Sora is strongest for R&D, creative concept development, and high-end visual experimentation. It is less naturally suited to fast-turnaround marketing pipelines unless paired with strong budgeting discipline.

Finally, when comparing Sora vs Veo pricing or Sora vs Runway, the real difference is not just cost. It’s predictability. If your finance team requires fixed monthly line items, usage-based generative models introduce variance that can complicate planning.

Best For

- Creative directors prototyping high-fidelity scenes

- Studios exploring AI-assisted pre-visualization

- Developers integrating advanced generative video via API

OpenAI Official Pricing Overview

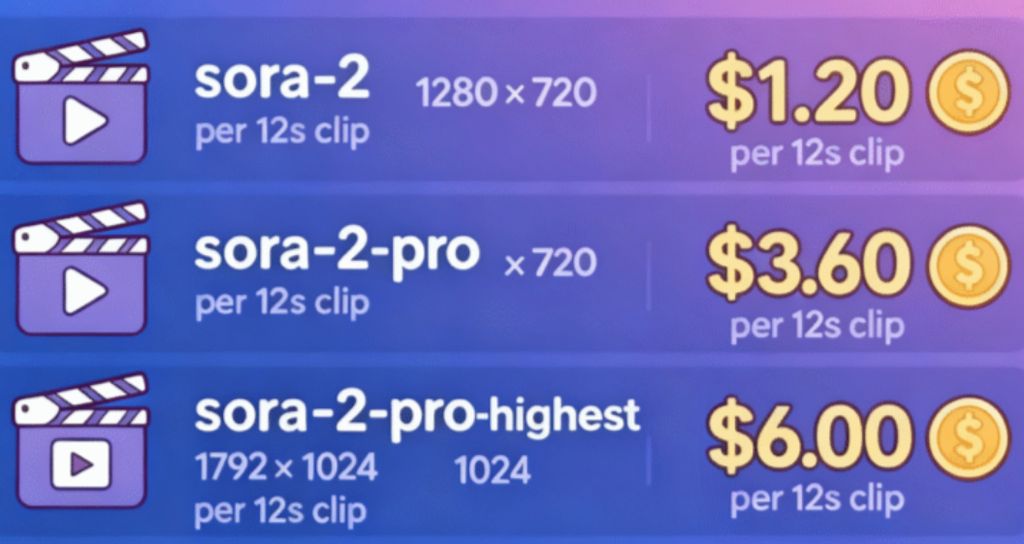

According to OpenAI’s official pricing structure for Sora 2, the model operates on a credit-based system rather than a flat per-minute subscription. Instead of paying strictly for duration, users consume credits per generation. This approach aligns Sora more closely with usage-based AI infrastructure than with traditional video SaaS tools that offer fixed monthly output quotas.

For standard generation at 1080p resolution, a single video typically costs approximately 20-25 credits. The exact credit usage can vary depending on output parameters such as duration, motion complexity, number of characters, and environmental detail. In practice, this means a simple 15-second static-scene video may consume fewer total credits than a complex 30-second multi-character cinematic shot with dynamic camera movement.

Premium features require additional credits beyond the base generation cost. For example, enabling advanced capabilities such as character cameos or enhanced scene realism increases total credit consumption per clip. These enhancements expand creative control but also introduce cost variability. Teams experimenting heavily with advanced features should factor in higher average credit usage per deliverable.
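The arithmetic behind base cost plus add-ons is simple but easy to underestimate. The Python sketch below assumes the roughly 20-25 credit range quoted above for a standard 1080p generation. `PREMIUM_ADDON_CREDITS` is a hypothetical flat surcharge per premium feature, chosen only for illustration, because the actual add-on pricing is not stated here.

```python
# Minimal sketch of per-clip credit estimates; not an official calculator.
# BASE_CREDITS_1080P reflects the ~20-25 credit range quoted in this article.
# PREMIUM_ADDON_CREDITS is HYPOTHETICAL: replace it with current OpenAI pricing.
BASE_CREDITS_1080P = (20, 25)
PREMIUM_ADDON_CREDITS = 10  # hypothetical surcharge per premium feature


def credit_range_per_generation(premium_features: int = 0) -> tuple[int, int]:
    """Return (low, high) credits for one generation with N premium features."""
    low, high = BASE_CREDITS_1080P
    extra = premium_features * PREMIUM_ADDON_CREDITS
    return low + extra, high + extra


def credits_per_deliverable(generations: int, premium_features: int = 0) -> tuple[int, int]:
    """Every generation is billed, so scale the per-generation range by the count."""
    low, high = credit_range_per_generation(premium_features)
    return generations * low, generations * high


# Three generations with one premium feature: (90, 105) credits.
print(credits_per_deliverable(3, premium_features=1))
```

Swapping in real figures only changes the constants. The structure is what matters: every billed generation counts, and add-ons stack on each one.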

For enterprise customers, OpenAI offers bulk discounts. At higher generation volumes, per-generation cost can decrease, making Sora more financially viable for production studios, agencies, or brands running large-scale campaigns. The availability and structure of these discounts may vary by contract and usage tier, so enterprise buyers typically negotiate directly with OpenAI.

For API users, pricing is usage-based and calculated per generation. Costs scale according to how frequently and intensively the model is used. This structure is particularly relevant for companies embedding Sora into internal tools, automated pipelines, or customer-facing products. Rather than paying for seats or subscriptions, API clients pay for actual compute and generation output.
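For pipelines that call Sora automatically, a simple spend guard can keep per-generation billing from running past a monthly limit. The sketch below is hypothetical. `GenerationBudget` and `try_reserve` are illustrative names, no OpenAI API calls are shown, and costs are unit-agnostic (credits or currency). The idea is to check the budget before each generation request is sent.

```python
from dataclasses import dataclass, field


@dataclass
class GenerationBudget:
    """Hypothetical monthly spend guard for an automated video pipeline."""

    monthly_cap: float
    spent: float = 0.0
    charges: list[float] = field(default_factory=list)

    def try_reserve(self, cost: float) -> bool:
        """Record one generation's cost if it fits under the cap.

        Returns False (and records nothing) when the generation would push
        spending past the cap, so the caller can skip or queue the request.
        """
        if cost < 0:
            raise ValueError("cost must be non-negative")
        if self.spent + cost > self.monthly_cap:
            return False
        self.spent += cost
        self.charges.append(cost)
        return True

    @property
    def remaining(self) -> float:
        return self.monthly_cap - self.spent


budget = GenerationBudget(monthly_cap=100.0)
for attempt in range(4):
    # Real costs vary per generation; 30.0 is a placeholder value.
    if not budget.try_reserve(30.0):
        print(f"attempt {attempt + 1} blocked; remaining = {budget.remaining}")
```

Passing the cost per call, rather than fixing it on the object, reflects that duration, complexity, and add-ons change what each generation costs.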

Sora Versions and Evolution

Sora has evolved through multiple phases rather than appearing as a single static release. While detailed public breakdowns of every internal version are limited, the progression can broadly be described across early research previews, iterative updates, and Sora 2.

Early Sora research previews focused on demonstrating long-form scene coherence, realistic physics simulation, and multi-character interactions. These early iterations emphasized breakthrough capability rather than commercial packaging. Access was limited, and usage details were not framed in consumer pricing tiers.

Subsequent updates refined stability, motion consistency, and scene duration handling. Improvements targeted temporal coherence, object permanence, and reduced artifacting. As access expanded gradually, integration into OpenAI’s broader ecosystem became clearer, allowing web-based and API-level usage.

Sora 2 introduced more structured credit-based pricing and clearer generation parameters. With Sora 2, the cost model became more transparent, tying generation to measurable credit usage per 1080p output. Premium feature add-ons and enterprise discount pathways also became more formalized in this phase.

Because access details can change over time, users should verify current generation limits, available resolutions, and credit requirements before committing budget allocations. Sora’s positioning continues to reflect a frontier-level generative system rather than a simplified consumer video app.

What Actually Determines Sora Cost

When people ask about Sora cost, they often assume it scales linearly with video duration. In reality, several variables influence billing beyond just seconds of output.

First, duration matters. A 60-second clip requires significantly more generation time than a 15-second clip. But duration alone does not define cost.

Second, scene complexity plays a major role. A static shot with minimal motion consumes fewer resources than a scene involving multiple characters, moving camera paths, lighting changes, and environmental interactions. Complex prompts require more computational effort.

Third, iteration volume can dramatically increase your effective spend. Very few teams generate a perfect clip on the first attempt. If you run five to ten generations to refine tone, motion, and composition, your true cost is five to ten times that of a single generation.

Fourth, resolution and rendering fidelity affect compute usage. Higher-resolution outputs or re-renders for quality improvement increase resource consumption.

These variables mean Sora pricing behaves more like cloud infrastructure billing than like a typical creative subscription tool.
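Iteration volume can be reasoned about with a simple probability model. Assume, purely for illustration, that each generation independently has the same chance `p` of producing a usable clip. The number of attempts then follows a geometric distribution: the expected count is `1 / p`, and budgeting for a given confidence level needs more headroom than the average suggests.

```python
import math


def expected_generations(acceptance_rate: float) -> float:
    """Mean attempts until the first usable clip (geometric distribution)."""
    if not 0 < acceptance_rate <= 1:
        raise ValueError("acceptance_rate must be in (0, 1]")
    return 1 / acceptance_rate


def generations_for_confidence(acceptance_rate: float, confidence: float) -> int:
    """Smallest n with P(at least one usable clip in n attempts) >= confidence.

    P(all n attempts fail) = (1 - p) ** n, so we need (1 - p) ** n <= 1 - confidence.
    """
    if not 0 < acceptance_rate < 1:
        raise ValueError("acceptance_rate must be in (0, 1)")
    if not 0 < confidence < 1:
        raise ValueError("confidence must be in (0, 1)")
    return math.ceil(math.log(1 - confidence) / math.log(1 - acceptance_rate))


# If one generation in five is usable (a hypothetical rate):
print(expected_generations(0.2))             # 5.0 on average
print(generations_for_confidence(0.2, 0.9))  # 11 attempts for 90% confidence
```

The gap between the average (5) and the 90% figure (11) shows why a budget sized on average iterations tends to run short.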

Budgeting Examples: 15s vs 30s vs 60s

To make this practical, let’s walk through scenario-based budgeting logic rather than hypothetical flat rates.

A 15-second social clip is usually the most cost-efficient use of Sora. However, if you run eight iterations to dial in pacing, character consistency, and visual tone, your effective cost reflects all eight runs, not just the final clip. For experimental marketing, this iteration loop can be the main expense driver.

A 30-second branded explainer increases duration and often complexity. You may include multiple scene transitions or narrative shifts. If each scene requires separate refinement, you can easily double or triple your compute usage compared to a short clip.

A 60-second cinematic sequence amplifies all cost drivers. Longer duration, higher narrative demands, and potential continuity checks between segments increase iteration volume. For startups or small marketing teams, this makes Sora less predictable as a monthly line item.
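The scenario logic above can be turned into a rough monthly estimate. In this sketch, only the 15-second case follows the example above: eight iterations at 20 credits, the low end of the quoted 1080p range. The per-generation credits and iteration counts for 30- and 60-second clips are hypothetical placeholders, so substitute your own measured numbers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    credits_per_generation: int  # varies with duration and scene complexity
    generations_per_clip: int    # all iterations until a clip is approved

    def credits_per_clip(self) -> int:
        return self.credits_per_generation * self.generations_per_clip


# HYPOTHETICAL placeholders, not OpenAI pricing. Only the 15s case follows
# the article's example (eight iterations); 30s/60s figures are assumptions.
SCENARIOS = {
    "15s social clip": Scenario(credits_per_generation=20, generations_per_clip=8),
    "30s explainer": Scenario(credits_per_generation=40, generations_per_clip=12),
    "60s cinematic": Scenario(credits_per_generation=80, generations_per_clip=16),
}


def monthly_credits(plan: dict[str, int]) -> int:
    """Total credits for a month, given {scenario name: finished clips}."""
    return sum(SCENARIOS[name].credits_per_clip() * clips for name, clips in plan.items())


plan = {"15s social clip": 10, "30s explainer": 2, "60s cinematic": 1}
for name, scenario in SCENARIOS.items():
    print(f"{name}: {scenario.credits_per_clip()} credits per finished clip")
print(f"Monthly total: {monthly_credits(plan)} credits")
```

Even with these made-up numbers, the single 60-second sequence accounts for a third of the month's credits. Longer formats tend to dominate both total spend and its variance.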

If you are producing multiple 60-second videos per month, it becomes critical to compare Sora with leading AI video generators that offer fixed-plan limits.

What You Actually Get with Sora

Sora’s value proposition is not centered on low-cost mass production. It is centered on generative quality and conceptual flexibility.

You get advanced text-to-video synthesis that can handle complex spatial descriptions. Scenes involving environmental detail, weather conditions, and multi-character movement are generally more coherent than what many mid-tier models produce.

You also gain access to OpenAI’s broader multimodal infrastructure. That can matter for teams building integrated workflows that combine language models, image generation, and video synthesis under one ecosystem.

However, what you do not necessarily get is a production-oriented dashboard with fixed export quotas, brand kit management, or simplified cost predictability. For some teams, that is acceptable. For others, it introduces friction.

Hidden Limits and Cost Traps

The most important cost trap with Sora is iteration inflation. Creative teams naturally refine prompts repeatedly. Each additional generation adds compute consumption.

Another factor is scene unpredictability. If a prompt produces unexpected artifacts or inconsistent character features, you may need to regenerate from scratch. Unlike editing-based tools, generative video models do not always allow granular correction without re-rendering large portions.

Access constraints can also change. If rollout policies shift or usage caps are updated, your effective monthly capacity may vary. For campaign planning, that uncertainty should be accounted for in buffer budgets.

Finally, usage-based systems can create billing variability month to month. If your content calendar spikes before product launches, your video generation cost may spike accordingly.
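One way to size a buffer budget is to look at past monthly usage and add headroom in proportion to how much it varies. The sketch below uses the mean plus `k` sample standard deviations. This is a common rule-of-thumb heuristic, not something OpenAI prescribes. The usage history is invented for illustration, with a launch-month spike at the end.

```python
import statistics


def buffered_budget(history: list[float], k: float = 2.0) -> float:
    """Heuristic monthly budget: mean usage plus k sample standard deviations."""
    if len(history) < 2:
        raise ValueError("need at least two months of usage history")
    return statistics.mean(history) + k * statistics.stdev(history)


# Hypothetical monthly credit usage; the last month includes a product launch.
history = [3000, 3200, 2800, 6000]
print(f"Average month: {statistics.mean(history):.0f} credits")
print(f"Buffered budget: {buffered_budget(history):.0f} credits")
```

If a launch is planned, budgeting that month separately is often cleaner than letting one spike inflate the buffer for every month.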

Sora vs Other AI Video Tools

Below is a high-level comparison of Sora against other well-known AI video platforms in 2026. This table focuses on positioning, pricing logic, and intended use cases rather than deep feature breakdowns of each alternative.

| Tool | Pricing Model | Access Type | Output Quality Focus | Best For |
| --- | --- | --- | --- | --- |
| Sora | Credit-based (per generation), API usage-based | OpenAI ecosystem (web + API) | Cinematic realism, physics, multi-character scenes | Concept films, high-end creative prototyping |
|  | Tiered access, likely usage-based or enterprise licensing | Platform + enterprise access | Long-form coherence, cinematic motion | Studios, narrative storytelling teams |
|  | Credit-based | Web-based creative platform | Stylized + realistic hybrid outputs | Social creators, ads, short-form campaigns |
|  | Subscription + generation credits | Web interface | Fast iteration, creator-friendly workflow | Marketing teams, short-form content |
|  | Subscription tiers + generation limits | Web app + API | Editing + generative hybrid workflows | Creators who need both generation and post-production |
|  | Subscription-based with accessible generation tools | Web-based, no-code | Easy text-to-video and image-to-video production | Marketers, businesses, fast production needs |

When comparing Sora to other AI video tools, the first major distinction is philosophical rather than purely technical. Sora operates as a frontier generative model embedded inside a broader AI ecosystem. It behaves more like advanced AI infrastructure than a consumer-facing content tool. In contrast, platforms like Runway, Kling, Seedance, and Magic Hour are packaged as creative products first. Their interfaces, onboarding flows, and pricing tiers are designed to feel predictable and approachable for creators who want reliable monthly output.

From a pricing logic standpoint, Sora’s credit-based structure introduces variability that can be powerful but harder to forecast. Standard 1080p generations are estimated at roughly 20-25 credits per video. Premium features such as character cameos require additional credits. API access is billed per generation and can scale based on usage. Enterprise customers may negotiate bulk discounts. This structure rewards controlled experimentation but can feel uncertain for teams that need strict monthly budgeting. By contrast, many other AI video platforms provide fixed subscription tiers that define output caps in minutes or credits per month, making cost forecasting simpler.
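The forecasting difference can be made concrete with a break-even check. The sketch below compares pay-per-generation billing with a flat subscription. The fee and per-generation price are hypothetical inputs, and the sketch assumes the subscription's own output cap covers the volume in question.

```python
import math


def break_even_generations(subscription_fee: float, price_per_generation: float) -> int:
    """Fewest monthly generations at which usage billing costs at least the flat fee."""
    if price_per_generation <= 0:
        raise ValueError("price_per_generation must be positive")
    return math.ceil(subscription_fee / price_per_generation)


def break_even_clips(
    subscription_fee: float, price_per_generation: float, generations_per_clip: int
) -> float:
    """The same threshold expressed in finished clips, since every iteration is billed."""
    return subscription_fee / (price_per_generation * generations_per_clip)


# Hypothetical numbers: a 100-per-month plan vs. 2.50 per generation.
print(break_even_generations(100.0, 2.5))  # 40 generations
print(break_even_clips(100.0, 2.5, 8))     # 5.0 finished clips at 8 iterations each
```

Below the threshold, usage billing is cheaper. Above it, the flat plan wins, provided its caps are high enough. Heavy iteration lowers the threshold when it is measured in finished clips.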

In terms of output quality, Sora is optimized for realism and physics-aware simulation. Its strength lies in spatial coherence, camera motion, and multi-subject interaction. It aims to produce scenes that feel cinematic rather than templated. Other tools often balance quality with production speed and ease of iteration. For example, some platforms emphasize short-form content optimized for marketing channels, where turnaround time and cost efficiency outweigh complex environmental simulation. In those contexts, Sora may feel like overpowered infrastructure for relatively simple needs.

Workflow also differs significantly. Sora relies heavily on prompt engineering. The better you describe the scene, the more precise the result. This makes it ideal for experimental creators comfortable refining prompts across multiple generations. Other tools often integrate editing layers, timeline adjustments, preset styles, and template systems that reduce the learning curve. If your workflow depends on drag-and-drop adjustments rather than pure prompting, non-Sora tools may feel more intuitive for daily production use.

Another difference appears at scale. If you are building a product or creative pipeline that requires API-level integration and custom generation flows, Sora’s ecosystem integration becomes a strategic advantage. Its usage-based billing aligns well with backend automation. However, if your goal is simply to generate consistent 15-60 second marketing videos every week with minimal technical oversight, subscription-based creative platforms may offer a more straightforward solution.

For creators prioritizing high-fidelity scene generation and research-level innovation, Sora stands out. For teams optimizing for predictable budgets, quick output, and accessible user interfaces, alternatives may provide stronger operational clarity. The right choice depends less on headline quality claims and more on your production style, risk tolerance for variable credit usage, and need for cinematic complexity versus scalable marketing output.

Decision Rules: Who Should Use Sora?

If you are a creative director exploring concept art and pre-visualization for larger productions, Sora’s generative flexibility can justify its cost structure.

If you are a startup marketer producing weekly product updates, iteration-driven usage billing may become difficult to manage. In that case, a fixed-tier platform may be safer.

If you are building AI-powered applications and need API-level integration with advanced multimodal models, Sora’s ecosystem integration can be a strategic advantage.

If you mainly need short-form ads with consistent formatting, you should strongly compare Sora alternatives before committing.

Frequently Asked Questions

What is Sora pricing in 2026?

Sora pricing depends on access type and usage model. It is generally tied to compute consumption or account-level allowances rather than fixed per-minute subscriptions.

Does Sora have fixed plans?

Sora is not always structured as a traditional tiered SaaS plan. Access may depend on OpenAI account structure and rollout stage.

Is Sora cheaper than other AI video tools?

It depends on how much you iterate. For single high-quality clips, cost may be reasonable. For heavy iteration workflows, subscription-based tools may be more predictable.

How do I estimate my monthly Sora cost?

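Estimate the number of clips, their average duration, and the average number of generations per clip. Multiply clips by generations per clip to get total generations, then multiply that total by the per-generation credit (or API) cost for your typical duration and settings, as listed in OpenAI's current pricing documentation.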
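As a rough formula, with symbols introduced here only for illustration: for each clip type i, let n_i be the number of clips per month, g_i the average number of generations per clip, and c_i the credits per generation at that duration and setting.

$$
C_{\text{month}} = \sum_{i} n_i \, g_i \, c_i
$$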

Is Sora good for marketing teams?

It can be, especially for high-impact campaigns. However, teams producing frequent short-form content may prefer tools with fixed monthly generation limits.