Seedance 2.0 Pricing (2026): Plans, Credits, Real Cost Drivers, and How to Get the Best Output

TL;DR

- Seedance 2.0 pricing scales with duration, resolution, quality tier, references, and retries. Short drafts at low resolution are the simplest way to control spend.

- If you create short 6-15 second marketing loops, a mid-tier plan is usually enough - but heavy 1080p + high-quality passes will push you up a tier fast.

- The most reliable way to reduce cost is a draft → lock → high-quality workflow with strict retry caps.

Intro

Seedance 2.0 uses a credit-based pricing model designed for creators and marketing teams who need controllable, production-ready AI video. The real cost is not just the monthly subscription - it depends on resolution, clip length, regeneration frequency, and how efficiently you prompt.

This guide breaks down:

- Seedance 2.0 pricing plans and credit structure

- What each plan realistically allows you to produce

- The limits that most affect output quality and cost

- How Seedance compares to Kling, Veo 3, Runway, and Magic Hour

- When to choose an alternative based on use case

If you're evaluating Seedance for commercial video workflows, this breakdown focuses on what actually impacts budget and results.

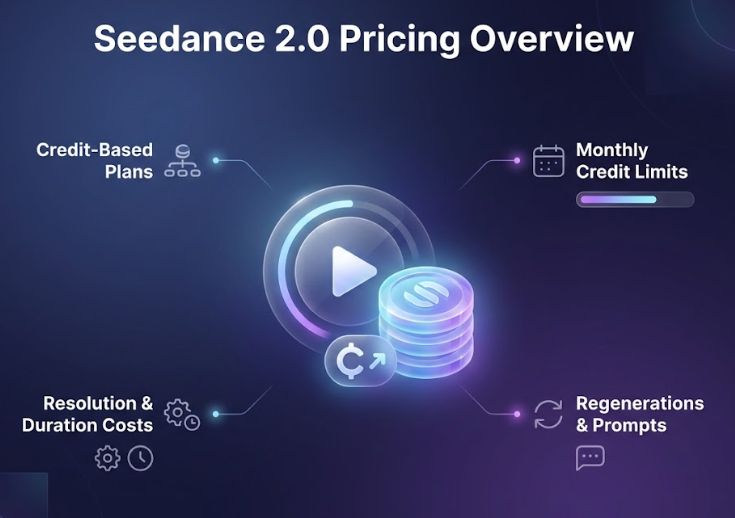

Seedance 2.0 Pricing Overview

Seedance typically uses a credit-based model. Instead of charging per minute directly, each generation deducts credits depending on:

- Duration

- Resolution

- Quality tier (draft vs high)

- Additional processing steps

- Retry count

Exact numbers vary depending on your subscription tier and access route. In some cases, Seedance is accessed through partner ecosystems rather than a standalone checkout page.

If you need official pricing tiers and plan breakdowns, check inside your Seedance workspace.

What Actually Drives Seedance 2.0 Cost

Here’s what consistently moved the meter in my account across repeated runs.

Duration

Duration is obvious, but it compounds quietly.

Extending a 6-second clip to 12 seconds does more than double the cost in practice, because it also doubles:

- Render time

- Retry temptation

- Micro-adjustments

My rule: I never start long.

If I’m unsure about motion style or pacing, I render 4-6 seconds first. If that base reads well, I extend.

This alone reduced unnecessary spend in week one.

Resolution and Quality Tier

Moving from 720p to 1080p consistently increased cost. Moving from draft to high-quality increased it again.

Upscaling, temporal smoothing, and higher sampling settings all count.

I treat preview passes like thumbnails:

- Low resolution

- Fast render

- Accept imperfection

If a shot earns its place visually, then I pay for the clean version.

High-quality passes are best reserved for final exports, not experiments.

References (Images, Style Frames, Motion Cues)

References improve consistency. They also add processing overhead.

Not dramatically. But noticeably.

Heavy reference stacks - multiple images, style frames, motion cues - tend to increase computational load. In my testing, reference-heavy runs felt more expensive over time.

One change made a difference:

I reused the same approved reference bundle instead of uploading new images for every run. That improved consistency and reduced random iteration noise.

Retries and Micro-Tweaks

Retries are the silent budget eater.

One wording tweak. One seed change. A 10% slower camera move. Suddenly you have five near-identical clips.

I started setting a hard cap:

- Two draft attempts

- One final pass

If I hit the cap and still want to tweak, the issue is usually the prompt, not the model.

This rule alone cut my credit burn in half.
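The cap above can be sketched as a tiny tracker. This is a minimal illustration, not a Seedance feature: `RetryBudget` and its method names are hypothetical, and the defaults simply mirror the two-drafts-plus-one-final rule.

```python
class RetryBudget:
    """Tracks attempts for a single shot against a fixed cap (hypothetical helper)."""

    def __init__(self, draft_cap: int = 2, final_cap: int = 1):
        self.caps = {"draft": draft_cap, "final": final_cap}
        self.used = {"draft": 0, "final": 0}

    def can_run(self, kind: str) -> bool:
        """Return True while attempts of this kind remain under the cap."""
        return self.used[kind] < self.caps[kind]

    def record(self, kind: str) -> None:
        """Count one attempt, or refuse once the cap is reached."""
        if not self.can_run(kind):
            raise RuntimeError(f"{kind} cap reached: revisit the prompt before rendering again")
        self.used[kind] += 1


budget = RetryBudget()
budget.record("draft")
budget.record("draft")
print(budget.can_run("draft"))  # False
print(budget.can_run("final"))  # True
```

Raising an error at the cap, rather than quietly allowing a third draft, is the point of the design: the stop has to be louder than the urge to tweak.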

Transforms and Post Steps

Some transforms stack quietly:

- Stabilization

- Re-timing

- Color adjustments

- Additional smoothing passes

One transform is fine. Chaining them casually turns a simple clip into a multi-stage render.

If I see artifacts, I prefer a clean re-render at the correct base settings instead of layering fixes.

Concurrency

Running multiple jobs in parallel feels efficient. It also hides cost spikes.

For exploration, I queue sequentially.

I batch only after a pattern is locked.

This reduced the “I forgot I had three runs going” problem.

A Simple Pre-Run Estimation Method

I don’t want cost math in my head while judging motion. So I use a five-line worksheet in Notes before rendering.

I write down:

- Duration (D)

- Quality tier (Q)

- Resolution (R)

- References (Ref)

- Expected retries (Rt)

Then I assign relative weights based on observed patterns.

Not platform numbers. Just directional multipliers.

Example mental model:

- Base unit per second at draft, 720p = 1x

- 1080p ≈ 1.4x

- 4K ≈ 2-3x

- High quality ≈ 1.5-2x over draft

- Light references ≈ +0.1-0.2x

- Heavy references ≈ +0.3-0.5x

- Each retry ≈ another full run at same multipliers

Then I sanity check:

Estimated cost score = D × resolution multiplier × quality multiplier × (1 + reference factor) × (1 + retries)

It’s not precise. It’s directionally strong.

If one setup scores 120 and another scores 45, I know which one to test first.

Two Real Scenarios

Social loop test

6 seconds, 720p, draft, no references, 1 retry.

Low score. I allow myself two experiments.

Product reel shot

12 seconds, 1080p, high quality, heavy references, 0-1 retries.

High score. I only run this after validating motion in a 6-second draft.

This takes under 20 seconds to calculate. It keeps creative and cost conversations separate.
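For anyone who prefers a script to a Notes file, the worksheet and both scenarios can be sketched as below. The multipliers are the directional values from the mental model, not platform rates. Where the text gives a range, the code uses the midpoint, which is an assumption. `Shot` and `cost_score` are illustrative names.

```python
from dataclasses import dataclass

# Directional multipliers from the worksheet; rough observations,
# not official Seedance credit rates. Midpoints of ranges are assumptions.
RESOLUTION = {"720p": 1.0, "1080p": 1.4, "4k": 2.5}       # 4K: midpoint of 2-3x
QUALITY = {"draft": 1.0, "high": 1.75}                    # midpoint of 1.5-2x
REFERENCES = {"none": 0.0, "light": 0.15, "heavy": 0.4}   # midpoints of the ranges


@dataclass
class Shot:
    seconds: float
    resolution: str = "720p"
    quality: str = "draft"
    references: str = "none"
    retries: int = 0


def cost_score(shot: Shot) -> float:
    """D x resolution x quality x (1 + reference factor) x (1 + retries)."""
    return (
        shot.seconds
        * RESOLUTION[shot.resolution]
        * QUALITY[shot.quality]
        * (1 + REFERENCES[shot.references])
        * (1 + shot.retries)
    )


social_loop = Shot(seconds=6, retries=1)
product_reel = Shot(seconds=12, resolution="1080p", quality="high",
                    references="heavy", retries=1)

print(round(cost_score(social_loop), 1))   # 12.0
print(round(cost_score(product_reel), 1))  # 82.3
```

The absolute numbers mean nothing on their own. What matters is the ratio: with these assumed weights, the product reel scores roughly seven times the social loop, which is why it only runs after a short draft has validated the motion.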

Draft → Lock → High-Quality Workflow

This workflow made Seedance pricing feel predictable.

Step 1: Draft

- 4-6 seconds

- Lowest resolution

- No references

- Only judging motion and pacing

If it doesn’t work here, I re-prompt instead of retrying at higher quality.

Step 2: Lock

- Same duration

- Add references

- Still draft quality

Now I focus on continuity and style. If this fails, I adjust prompt or reference, not resolution.

Step 3: High-Quality Pass

- Extend duration

- Increase resolution

- Switch to high quality

One variable per retry.

This ladder reduced my retries by roughly half. It didn’t save clock time immediately, but it removed indecision.
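One way to keep the ladder honest is to write it down as data. The sketch below rests on several assumptions: the 5-second draft length is picked from the 4-6 second range, the 12-second 1080p final is borrowed from the product reel scenario, `"lowest"` is a placeholder for whatever your plan offers, and `next_settings` is a hypothetical helper.

```python
# Stage settings for the draft -> lock -> high-quality ladder.
# All concrete values are illustrative placeholders, not platform defaults.
LADDER = [
    {"stage": "draft", "seconds": 5, "resolution": "lowest", "quality": "draft", "references": False},
    {"stage": "lock", "seconds": 5, "resolution": "lowest", "quality": "draft", "references": True},
    {"stage": "final", "seconds": 12, "resolution": "1080p", "quality": "high", "references": True},
]


def next_settings(current_stage: str, approved: bool) -> dict:
    """Move up one rung only when the current stage was approved.

    If the stage was not approved, the same settings come back, which
    signals a re-prompt rather than a jump to higher quality.
    """
    index = next(i for i, s in enumerate(LADDER) if s["stage"] == current_stage)
    if approved and index < len(LADDER) - 1:
        index += 1
    return LADDER[index]


print(next_settings("draft", approved=True)["stage"])   # lock
print(next_settings("draft", approved=False)["stage"])  # draft
```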

Cost Guardrails That Helped

When I first started using Seedance 2.0 in a paid workspace, the problem wasn’t that pricing was unclear. It was that creative momentum made it easy to overspend. The model is responsive. The interface encourages iteration. And iteration, if unmanaged, multiplies quietly.

So instead of trying to “optimize” every run, I set guardrails. Not complex spreadsheets. Just behavioral rules that keep cost predictable without killing creative flow.

Here are the guardrails that actually worked.

1. Hard Retry Caps Per Shot

Retries are where most credit systems become unpredictable.

In the beginning, I would tell myself, “Just one more tweak.” Change the seed. Slightly slower camera. Slightly warmer tone. A minor phrasing shift. Each change felt small. Collectively, they stacked into five near-identical clips.

So I set a rule:

- Two draft attempts

- One final high-quality pass

If I hit that ceiling and still feel unsatisfied, I stop generating and review the prompt instead.

This forced me to separate two problems:

- Is the model failing?

- Or is my brief unclear?

Most of the time, it was the brief.

Once I tightened prompt structure and clarified motion direction up front, retries naturally dropped. The retry cap didn’t just save credits. It improved prompting discipline.

2. Draft Small, Decide Fast

Long clips feel more “serious.” They also amplify cost and indecision.

A 15-second shot at 1080p with references is expensive. A 5-second draft at lower resolution is not. In my experience, the smaller clip tells you most of what you need to know about motion quality.

So I committed to shrinking first.

If I’m testing:

- A new camera move

- A product reveal motion

- A character style

I generate 4-6 seconds only. I judge pacing and readability in under two seconds of playback. If it doesn’t read immediately, extending it won’t fix it.

This one habit reduced my wasted long renders dramatically. It also made creative reviews cleaner. Fewer bloated drafts. Faster decisions.

3. One Variable Per Retry

When something looks “off,” the instinct is to change multiple things at once.

- Different seed

- Adjust motion speed

- Reword lighting

- Add reference

- Change framing

That feels efficient. It’s not.

If I change five variables at once, I learn nothing. If the next output works, I don’t know why. If it fails, I don’t know what broke it.

Now I change one thing per retry. Only one.

This makes retries strategic rather than emotional. It also reduces the total number of attempts required because each iteration teaches something useful.

Credit spend becomes proportional to insight gained.
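If you keep run settings in a dict or a notes file, the one-variable rule is easy to check before spending credits. The sketch below is illustrative: the setting names (`seed`, `camera`, `lighting`, `framing`) and both helper functions are hypothetical, not Seedance parameters.

```python
def changed_settings(previous: dict, candidate: dict) -> list[str]:
    """Return the sorted names of settings that differ between two runs."""
    keys = sorted(set(previous) | set(candidate))
    return [k for k in keys if previous.get(k) != candidate.get(k)]


def is_single_variable_retry(previous: dict, candidate: dict) -> bool:
    """True only when exactly one setting changed."""
    return len(changed_settings(previous, candidate)) == 1


base = {"seed": 11, "camera": "slow push-in", "lighting": "warm key", "framing": "medium"}
focused = {**base, "seed": 12}
scattered = {**base, "seed": 12, "lighting": "cool key"}

print(is_single_variable_retry(base, focused))    # True
print(changed_settings(base, scattered))          # ['lighting', 'seed']
```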

4. Batch Only After Locking the Pattern

Parallel rendering is convenient. It’s also dangerous during exploration.

Early on, I would queue three variations simultaneously to “save time.” What actually happened:

- I judged outputs mid-render.

- I thought of new tweaks.

- I launched more jobs before the first three finished.

The result was scattered output and unclear direction.

Now I separate phases:

Exploration phase:

- Sequential runs only.

- Small drafts.

- Slow, deliberate testing.

Production phase:

- Batch runs once the look is locked.

- Variations generated in one clean block.

- No mid-batch fiddling.

Batching is powerful once you know what you want. Before that, it multiplies noise.

5. Reuse Reference Bundles Instead of Rebuilding Them

References improve consistency. They also create subtle overhead when re-uploaded or reconfigured repeatedly.

I created a stable reference folder:

- Approved product angles

- Color swatches

- Style frames

- Character stills

Instead of uploading new references every time, I reuse the same approved bundle for a given concept.

This does three things:

- Reduces variation drift

- Reduces mental fatigue

- Avoids unnecessary experimentation that triggers more retries

Consistency is a cost control mechanism.

6. Fix Artifacts at the Root, Not With Quality Upgrades

A common mistake is assuming higher quality settings will fix visible flaws.

If I see:

- Hand warping

- Motion jitter

- Strange object deformation

Increasing resolution rarely solves it. It just makes the artifact sharper.

Instead, I:

- Adjust the prompt

- Simplify the scene

- Add clearer spatial guidance

- Refine references

Only after the issue disappears at draft level do I increase quality.

This prevents paying premium rates for broken outputs.

7. Shorten Before You Extend

If a longer clip feels unstable, the temptation is to regenerate the full length.

Instead, I isolate the strongest middle 4-6 seconds and test that segment alone.

If the core works, extension becomes safe.

If it fails, I haven’t burned a long-duration render.

This rule is especially helpful for 20-30 second concept reels. Long timelines amplify small structural issues.

8. Separate Creative Judgement From Cost Anxiety

One unexpected benefit of guardrails is psychological.

Without rules, every retry feels like:

“Am I wasting money?”

With guardrails, the decision tree is predefined. I know:

- How many drafts I allow.

- When I escalate to high quality.

- When I stop and rethink.

That removes hesitation during evaluation. Creative judgement becomes clearer when cost structure is controlled in advance.

9. Pre-Define What “Good Enough” Means

Perfection is expensive in generative video.

Before running a final high-quality pass, I define acceptance criteria:

- Motion reads clearly in two seconds.

- Subject is identifiable.

- No major anatomical or physics artifacts.

- Brand tone matches brief.

If those boxes are checked, I stop iterating.

Without a “good enough” threshold, retries become aesthetic micro-adjustments that most viewers will never notice.
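The acceptance criteria above work as a literal checklist. A minimal sketch, assuming a human reviewer fills in the true/false answers; `unmet_criteria` is a hypothetical helper and nothing here inspects the video itself.

```python
ACCEPTANCE_CRITERIA = (
    "motion reads clearly in two seconds",
    "subject is identifiable",
    "no major anatomical or physics artifacts",
    "brand tone matches brief",
)


def unmet_criteria(review: dict[str, bool]) -> list[str]:
    """List the criteria a reviewer has not marked as passed."""
    return [c for c in ACCEPTANCE_CRITERIA if not review.get(c, False)]


review = {c: True for c in ACCEPTANCE_CRITERIA}
review["brand tone matches brief"] = False

print(unmet_criteria(review))  # ['brand tone matches brief']
```

An empty list means stop iterating. Anything not on the list is, by definition, a tweak you decided in advance not to pay for.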

10. Document What Worked

This is less obvious, but powerful.

When a prompt pattern consistently performs well, I save it with notes:

- Duration used

- Resolution

- Quality tier

- Reference bundle

Next time I run a similar concept, I start from a known baseline rather than reinventing.

The fewer exploratory runs required, the lower the credit volatility.
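A saved baseline can be as simple as a small JSON record. The sketch below is one possible shape, with the fields taken from the list above plus a prompt and free-form notes. The `Baseline` class and every example value are hypothetical.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class Baseline:
    name: str
    prompt: str
    seconds: int
    resolution: str
    quality: str
    reference_bundle: str
    notes: str = ""


baseline = Baseline(
    name="product-push-in",                      # illustrative values only
    prompt="slow push-in on product, soft key light",
    seconds=6,
    resolution="720p",
    quality="draft",
    reference_bundle="product-angles-v1",
    notes="reads well in the first two seconds",
)

# Serialize for a notes file, then load it back as the starting point next time.
record = json.dumps(asdict(baseline), indent=2)
restored = Baseline(**json.loads(record))
print(restored.name)  # product-push-in
```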

Seedance vs Kling, Veo 3, Runway, and Magic Hour

Pricing alone never tells the full story. Two tools can look similar on paper and behave very differently once you start generating real clips.

Below is a structured comparison focused on what actually affects creators and marketers: cost predictability, iteration speed, workflow fit, and when each model makes financial sense.

High-Level Comparison

| Tool | Pricing Model | Cost Predictability | Strengths | Weaknesses | Best For |
| --- | --- | --- | --- | --- | --- |
| Seedance 2.0 | Credit-based | Medium (depends on retries + quality) | Cinematic motion, strong visual realism | Cost scales quickly with duration + quality | Marketing loops, concept reels |
| Kling | Credit-based | Medium | Dynamic motion, fast experimentation | Can require multiple retries for complex scenes | Motion-heavy social clips |
| Veo 3 | Platform-dependent (often enterprise) | Low-Medium | High-end output potential | Access + pricing often unclear | High-budget brand work |
| Runway | Tiered subscription + credits | Medium-High | Integrated editing + video tools | Premium tiers required for heavy use | Teams needing full pipeline |
| Magic Hour | Structured annual tiers | High | Predictable pricing, multi-workflow support | Less granular cinematic control vs niche models | Budget-conscious creators, structured teams |

Now let’s unpack what this means in real-world usage.

1. Pricing Model Philosophy

Seedance and Kling: Performance-Driven Credit Systems

Both Seedance and Kling operate primarily on a credit-based system. You pay for generation runs, and the cost scales with:

- Duration

- Resolution

- Quality tier

- Retry frequency

This creates flexibility. You can run small experiments cheaply. But it also introduces volatility. If your workflow involves many retries or longer timelines, cost becomes less predictable.

In my experience, Seedance feels slightly more sensitive to resolution jumps, while Kling tends to encourage motion experimentation, which increases retries.

If you are disciplined with draft-first workflows, both remain manageable. If you experiment loosely, both can spike.

Veo 3: Enterprise-Oriented Access

Veo 3 often sits in a different pricing context. Access may depend on platform integration, enterprise agreements, or API-level usage.

That makes it harder to evaluate purely as a “creator subscription” tool. Pricing transparency varies depending on how you access it.

This typically positions Veo 3 for:

- Agencies

- Brand campaigns

- Teams with formal budgets

Not casual weekly content creation.

Runway: Subscription Hybrid

Runway blends subscription tiers with generation limits. Compared to pure credit models, this often feels more stable month to month.

You are paying for:

- Video generation

- Editing tools

- Collaboration features

This ecosystem approach means the value isn’t just in raw generation cost. It’s in workflow consolidation.

However, heavier generation use still pushes you into higher tiers.

Runway makes sense if you want:

- One interface for generation + editing

- Team collaboration

- Fewer external tools

Magic Hour: Structured Annual Pricing

Magic Hour approaches this differently with structured annual billing tiers:

Magic Hour Pricing (Annual Billing)

Basic - Free

Creator - $10/month (billed annually at $120/year)

Pro - $30/month (billed annually at $360/year)

Business - $66/month (billed annually at $792/year)

This structure emphasizes predictability over micro-metering.

Instead of thinking in “credits per retry,” you think in:

- Tier capability

- Workflow access

- Monthly creative output capacity

For creators who dislike fluctuating credit burn, this can feel simpler.

2. Cost Behavior Under Real Workflows

Here’s how these tools behave under common scenarios.

Scenario A: 6-10 Second Social Loop

| Tool | Cost Behavior |
| --- | --- |
| Seedance | Efficient at draft level; cost increases sharply at 1080p high-quality |
| Kling | Similar to Seedance; motion-heavy experimentation increases retries |
| Veo 3 | Overkill unless quality is mission-critical |
| Runway | Predictable if within subscription limits |
| Magic Hour | Stable tier-based usage; no mental credit math |

For short loops, cost discipline matters more than raw output quality.

Scenario B: 20-30 Second Concept Reel

| Tool | Cost Behavior |
| --- | --- |
| Seedance | Duration multiplier becomes significant; draft-first strategy required |
| Kling | May require multiple passes for stable long motion |
| Veo 3 | Strong candidate if budget allows |
| Runway | Works well if you need editing integration |
| Magic Hour | Works best when structured workflows matter more than cinematic extremes |

Longer timelines magnify retry cost in credit-based systems.

Scenario C: Team Production Environment

| Tool | Fit |
| --- | --- |
| Seedance | Good if team is disciplined with prompts |
| Kling | Similar discipline required |
| Veo 3 | Enterprise-friendly |
| Runway | Strong collaboration layer |
| Magic Hour | Predictable budgeting + workflow variety |

Teams usually care less about per-run nuance and more about monthly budget clarity.

3. Motion Style and Retry Sensitivity

Pricing discussions often ignore an important dimension: how often a tool forces retries.

Seedance:

- Strong cinematic push-ins and smooth camera paths

- Complex scenes may need structured prompting

Kling:

- Strong dynamic movement

- High-energy scenes can require refinement

Veo 3:

- High-end realism

- Less casual iteration

Runway:

- Balanced quality

- Often used as part of larger creative stack

Magic Hour:

- Multi-modal focus (text-to-video + image-to-video)

- Emphasis on practical production rather than purely cinematic experimentation

The more experimental your motion style, the more credit-based tools fluctuate.

Who Should Choose Seedance?

Choose Seedance if:

- You prioritize cinematic realism.

- You are comfortable managing credits.

- You want detailed control over motion.

Consider alternatives if:

- You need predictable monthly cost.

- You produce high volumes of short clips.

- You want integrated editing inside one ecosystem.

FAQs

Does Seedance 2.0 charge per minute?

Not directly. It uses a credit system in which generation cost depends on duration, resolution, and quality settings.

What increases Seedance costs the most?

Long duration, high resolution, heavy references, and repeated retries.

Is 1080p worth it?

Only for final exports. Draft in lower resolution first.

How can I reduce retries?

Use short draft runs, reuse references, and change one variable at a time.

Is Seedance cheaper than Kling?

It depends on your workflow and retry rate. Motion-heavy experimentation increases cost in both systems.
