How to Use FaceFusion 3.6.0: Complete Setup, Settings, and Best Results Guide

Quick answer: To use FaceFusion, create a conda environment (conda create --name facefusion python=3.12), clone the GitHub repo, run python install.py with your onnxruntime flag, then launch with python facefusion.py run --open-browser. Upload a source face image and a target image or video, then click Start. For best results, use the hyperswap_1a_256 face swapper model and the gfpgan_1.4 face enhancer.

What's new in FaceFusion 3.6.0


FaceFusion is one of the most actively developed open-source face swap platforms available in 2026. It runs entirely on your local hardware, processes everything privately without uploading anything to a server, and produces output quality that consistently rivals commercial tools, particularly when paired with the right face enhancer models.

This guide covers the complete workflow: installing FaceFusion 3.6.0 cleanly on your machine, running a basic face swap, and then tuning the settings that actually make a meaningful difference to output quality. The best settings section is designed to be used as a reference you return to each time you start a new project.

If you hit an error during installation, the FaceFusion Discord community is the fastest resolution path. If you want a face swap without any installation at all, Magic Hour handles both photo and video face swap in the browser with a free plan and no watermark.

Installing FaceFusion 3.6.0

FaceFusion uses conda for environment management. This replaced the older venv approach and is now the officially supported method. If you do not have conda installed, download Miniconda from the official conda site before starting.

FaceFusion also offers one-click installers for Windows and macOS via Pinokio, which handles the entire installation process automatically. If the terminal steps below feel complex, Pinokio is the recommended starting point for non-technical users.

Step 1: Prepare your Conda Environment

Initialize conda for your terminal (run once if you have not done this before):

conda init --all

Create the environment with Python 3.12:

conda create --name facefusion python=3.12 pip=25.0

Activate the environment:

conda activate facefusion

Step 2: Clone the Repository

git clone https://github.com/facefusion/facefusion

cd facefusion

Step 3: Install the Application

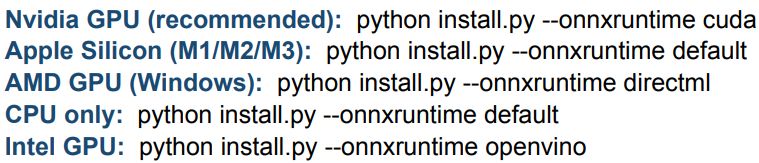

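Choose the install command that matches your hardware. The installer is run as python install.py --onnxruntime followed by the runtime for your machine (for example, default for a CPU-only setup). This is the most important step: choosing the wrong onnxruntime means either no GPU acceleration or installation failure.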
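As a rough illustration, the hardware-to-installer choice can be written as a simple lookup. This is a sketch, not the project's own logic: only `--onnxruntime default` is stated in this guide, so the other values (`cuda`, `directml`) and the hardware labels used as keys are assumptions. Confirm the accepted values with `python install.py --help` before relying on them.

```python
# Hypothetical hardware labels mapped to an assumed --onnxruntime value.
ONNXRUNTIME_BY_HARDWARE = {
    "nvidia": "cuda",            # assumption, not stated in this guide
    "amd-windows": "directml",   # assumption, not stated in this guide
    "apple-silicon": "default",  # assumption: CoreML is chosen later, in the GUI
    "cpu": "default",            # stated in this guide
}


def install_command(hardware):
    """Build the installer command for a hardware label as an argument list."""
    try:
        runtime = ONNXRUNTIME_BY_HARDWARE[hardware]
    except KeyError:
        raise ValueError(f"unknown hardware label: {hardware!r}") from None
    return ["python", "install.py", "--onnxruntime", runtime]


if __name__ == "__main__":
    # Print the command instead of running it, so it can be reviewed first.
    print(" ".join(install_command("cpu")))
```

Run the printed command from inside the cloned facefusion folder with the conda environment active.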

Step 4: Reload the Environment and Run

conda deactivate

conda activate facefusion

python facefusion.py run --open-browser

If installation is successful, your default browser will open to the FaceFusion GUI automatically. If it does not, check the terminal output for errors. Paste the error message into ChatGPT or post it in the FaceFusion Discord for the fastest resolution.
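If the launch fails, a quick preflight check can rule out the basics before you start reading stack traces. The sketch below only verifies that the active interpreter is Python 3.12 and that conda and git are on your PATH. The function names are illustrative and not part of FaceFusion.

```python
import shutil
import sys

REQUIRED_PYTHON = (3, 12)  # the version used when creating the conda environment


def python_matches(version_info=sys.version_info, required=REQUIRED_PYTHON):
    """Return True when the interpreter's major.minor equals the required pair."""
    return tuple(version_info[:2]) == required


def missing_tools(tools=("conda", "git"), which=shutil.which):
    """Return the tools from `tools` that cannot be found on PATH."""
    return [tool for tool in tools if which(tool) is None]


if __name__ == "__main__":
    print("Python 3.12 active:", python_matches())
    print("Missing tools:", missing_tools() or "none")
```

If the Python check prints False, the facefusion environment is probably not activated in the current terminal.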

Don't want to install? MimicPC offers cloud-based FaceFusion that runs in your browser with no setup. Magic Hour also offers browser-based face swap for photo and video with 400 free credits, no watermark, and no credit card required.

Basic Usage: Your First Face Swap

Once the GUI is open, the interface has three main areas: the source panel (top left), the target panel (top right), and the settings and preview below. For a first face swap, you only need to touch three things.

1. Set your Execution Provider

Before uploading any media, check the execution provider setting at the top. This determines whether FaceFusion uses your GPU or CPU to process the swap.

- Nvidia GPU: select CUDA

- Apple M1/M2/M3: select CoreML

- AMD GPU on Windows: select DirectML

- No dedicated GPU: leave as CPU (processing will be significantly slower)

Getting this wrong does not break anything — it just means slower processing. But on a machine with a GPU, using the CPU can make a 30-second clip take 30 minutes instead of 3.
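The selection rule in the list above can be sketched as a small function. This is only an illustration of the decision, not how FaceFusion chooses a provider internally. The GPU flags are passed in by hand, and the `nvidia-smi` lookup in the demo is a rough heuristic rather than reliable detection.

```python
import platform
import shutil


def suggest_execution_provider(system, machine, has_nvidia=False, has_amd=False):
    """Apply the list above: CUDA, CoreML, DirectML, otherwise CPU."""
    if has_nvidia:
        return "CUDA"
    if system == "Darwin" and machine == "arm64":  # Apple Silicon Macs
        return "CoreML"
    if system == "Windows" and has_amd:
        return "DirectML"
    return "CPU"


if __name__ == "__main__":
    # Heuristic (assumption): nvidia-smi on PATH hints at an Nvidia driver.
    nvidia = shutil.which("nvidia-smi") is not None
    print(suggest_execution_provider(platform.system(), platform.machine(),
                                     has_nvidia=nvidia))
```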

2. Upload Source and Target

Drag and drop your source image into the source panel. The source is the face you want to apply; a clear, front-facing photo produces the best results. Then drag and drop your target image or video into the target panel. The target is what you want to modify.

Source photo tip: Use a high-resolution, well-lit, front-facing photo of the face. One person only in the frame. No sunglasses, no obstructions. The quality of your source photo is the single biggest factor in output quality.

3. Click Start

For a simple single-person swap, the default settings are reasonable as a starting point. Click the Start button and wait. The preview window will show results as they render. For video, use the frame slider to scrub through different points in the clip and verify quality before the full render completes.

That is the complete basic workflow. Everything else in FaceFusion is optional tuning that improves results in specific situations.

FaceFusion Best Settings for Quality Results

This is the section most users return to. The default settings in FaceFusion are deliberately conservative: they prioritize speed over quality and compatibility over performance. Changing the settings below consistently produces better output.

| Setting | Recommended value | Why it matters |
| --- | --- | --- |
| Face swapper model | hyperswap_1a_256 (default) | Best overall quality-to-speed ratio in 3.6.0 |
| Face swapper model (quality-first) | inswapper_128_fp16 | Highest quality, significantly slower; use for final renders |
| Face enhancer model | gfpgan_1.4 | Most realistic skin texture and natural output |
| Face enhancer blend | 80-90 | Balances enhancement with natural look, avoids over-sharpening |
| Pixel boost | 512x512 or 768x768 | Higher = better quality at cost of processing time |
| Execution provider (Nvidia GPU) | CUDA | Required for GPU acceleration on Nvidia cards |
| Execution provider (Apple Silicon) | CoreML | Optimal for M1/M2/M3 shared memory architecture |
| Thread count (Apple Silicon) | 10-16 | Works best within shared memory range |
| Thread count (Nvidia GPU) | 2x GPU GB (e.g. 32 for 16GB) | Matches VRAM capacity, prevents memory overflow |
| Output audio encoder | aac | Change from default flac for broader media player support |
| Face detector angles | Add 90 for side-facing shots | Catches non-frontal faces that default 0 degrees misses |
| Face selector mode | Reference (specific person), Many (all faces) | Match to your target; Reference prevents accidental multi-swaps |
| Background remover model | corridor_key_1024 or _2048 (new in 3.6.0) | New higher-resolution background remover models |

Settings verified against FaceFusion 3.6.0. April 2026.
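The two thread count rows in the table reduce to simple arithmetic, sketched here. It encodes only this guide's rule of thumb (2x the GPU memory in GB for Nvidia, 10 to 16 for Apple Silicon). The function names are illustrative and the numbers are not hard limits.

```python
APPLE_SILICON_RANGE = (10, 16)  # range suggested in the table above


def nvidia_thread_count(vram_gb):
    """Rule of thumb from the table: twice the GPU memory in GB."""
    if vram_gb <= 0:
        raise ValueError("vram_gb must be positive")
    return 2 * int(vram_gb)


def apple_silicon_thread_count(requested):
    """Clamp a requested thread count into the suggested 10-16 range."""
    low, high = APPLE_SILICON_RANGE
    return max(low, min(high, requested))


if __name__ == "__main__":
    print(nvidia_thread_count(16))         # the table's example: 16GB card
    print(apple_silicon_thread_count(24))  # pulled back into the range
```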

The most impactful single setting change is adding a face enhancer. By default, FaceFusion runs only the face swapper. Enabling the gfpgan_1.4 face enhancer on top of the swap significantly improves skin texture and realism, particularly visible on close-up footage or high-resolution images.

Quick quality stack: For the best output on most use cases: face swapper set to hyperswap_1a_256 + face enhancer set to gfpgan_1.4 at blend 85 + pixel boost at 512x512. This combination delivers noticeably better results than defaults with acceptable processing time.
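For command-line use, the quality stack could be collected into one reusable argument list, as sketched below. The processor and model flags appear in this guide's headless example. `--face-enhancer-blend` and `--face-swapper-pixel-boost` are assumed flag names that this guide does not confirm, so check them against `python facefusion.py headless-run --help`.

```python
def quality_stack_args(blend=85, pixel_boost="512x512"):
    """Return CLI arguments for the quick quality stack described above."""
    if not 0 <= blend <= 100:
        raise ValueError("blend must be between 0 and 100")
    return [
        "--processors", "face_swapper", "face_enhancer",
        "--face-swapper-model", "hyperswap_1a_256",
        "--face-enhancer-model", "gfpgan_1.4",
        "--face-enhancer-blend", str(blend),        # assumed flag name
        "--face-swapper-pixel-boost", pixel_boost,  # assumed flag name
    ]


if __name__ == "__main__":
    print(" ".join(quality_stack_args()))
```

Append the returned list to a headless-run command so every render in a project uses the same settings.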

Advanced Features

FaceFusion includes several optional processors and settings beyond the basic swap workflow. Each one targets a specific quality or workflow problem, and none are required for a standard result.

Face Selector Modes

FaceFusion offers three face selector modes that control which faces get swapped when multiple people appear in the target.

Reference Mode: Select a specific face from the target by clicking it in the reference face panel. Only that face gets swapped. Use this when your target has multiple people and you want to swap one specific person.

One Mode: Swaps a single face — FaceFusion picks the most prominent one detected. Use the face analyzers (age, gender) to help the model select the right person if it picks incorrectly.

Many Mode: Swaps all detected faces simultaneously. Use for group photos or multi-person scenes where you want every face replaced with the source face. Requires more fine-tuning to look natural.

Face Debugger

Enable the face debugger processor to visualize exactly what FaceFusion is detecting before committing to a full render. It overlays bounding boxes, key points, and mask areas onto the preview, showing you which faces are being detected, where the mask boundaries sit, and how much padding is applied around each face.

This is particularly useful for debugging partial face detections, masks that cut into the hairline, or cases where FaceFusion is selecting the wrong face in a multi-person scene. Run the debugger on a single frame first, adjust the settings until the visualization looks correct, then disable it and run the actual swap.

Expression Restorer

The expression restorer (powered by Live Portrait technology) re-adds fine facial expressions that tend to get flattened during face swapping, including subtle muscle movements, wrinkles, and micro-expressions. Enable it as an additional processor alongside the face swapper for footage where natural expression is important. It adds processing time but meaningfully improves realism on close-up speaking footage.

Background Remover

FaceFusion 3.6.0 adds new corridor_key_1024 and corridor_key_2048 background remover models, which provide higher-resolution background separation than earlier models. The background remover is useful for isolating the face area when working with complex backgrounds that interfere with face detection or when you want to composite the swap result onto a different background.

The new --background-remover-despill-color argument controls color bleeding at background edges, which was a common artifact with the older models on high-contrast backgrounds.

Age Modifier

The age modifier processor includes the new fran model in 3.6.0, which provides more natural-looking age transformation than earlier options. Use it to apply aging or de-aging effects on top of the face swap, or as a standalone processor on any target footage. The fran model is particularly effective at preserving facial identity through the age transformation, which the earlier models struggled with.

Trim Frames for Video

When working with long video clips, use the Trim Frame Start and Trim Frame End settings to define exactly which section you want to process. This is critical for two reasons: it lets you test a short section to verify quality before committing to a long render, and it lets you skip problem frames at the beginning or end of a clip where the face is partially visible or obscured.

Set trim frame start and end in the preview panel by scrubbing to the right frame and clicking the set buttons. Always run a 5-10 second test render before processing a full clip.
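If you prefer to work out the trim values rather than scrub for them, the frame numbers follow from the clip's frame rate. The sketch below converts a start time and test duration into start and end frame indices. Whether FaceFusion treats the end frame as inclusive is not covered in this guide, so treat the result as approximate to within a frame.

```python
def trim_range(start_seconds, duration_seconds, fps, total_frames=None):
    """Convert a start time and duration into (start_frame, end_frame)."""
    if fps <= 0 or start_seconds < 0 or duration_seconds <= 0:
        raise ValueError("fps and duration must be positive, start non-negative")
    start = int(round(start_seconds * fps))
    end = start + int(round(duration_seconds * fps))
    if total_frames is not None:
        end = min(end, total_frames)  # never run past the end of the clip
    return start, end


if __name__ == "__main__":
    # A 5-second test starting 12 seconds into a 30 fps clip.
    print(trim_range(12, 5, 30))
```

Use the two numbers as the Trim Frame Start and Trim Frame End values for the test render.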

Output Options

A few output settings worth knowing:

- Keep FPS: maintains the original frame rate of your target video. Leave this on unless you specifically need to change the output frame rate.

- Output Audio Encoder: change from the default flac to aac for better compatibility with most media players and social platforms. Flac is lossless but many players do not support it for video.

- Output Path: FaceFusion does not remember a custom output path between sessions. You need to set it each time. Avoid spaces in the path.

Headless Mode for Batch Processing

FaceFusion supports running entirely from the command line without the GUI through headless-run mode. This is useful for batch processing multiple files or integrating FaceFusion into an automated pipeline. The basic headless command structure:

python facefusion.py headless-run --source-paths source.jpg --target-path target.mp4 --output-path output/ --processors face_swapper face_enhancer --face-swapper-model hyperswap_1a_256 --face-enhancer-model gfpgan_1.4 --execution-providers cuda

The batch-run command extends this further, allowing you to define and queue multiple jobs at once and run them sequentially without manual intervention.
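As an alternative to batch-run, a short script can queue several headless-run jobs one after another. The sketch below reuses only the flags from the example command above. The `targets` folder, the `_swapped` suffix, and the choice to pass a file rather than a folder to `--output-path` are assumptions made for illustration.

```python
from pathlib import Path


def headless_command(source, target, output_dir):
    """Build one headless-run command using the flags from the example above."""
    target = Path(target)
    # Hypothetical naming scheme: <target name>_swapped.<ext> in output_dir.
    output = Path(output_dir) / f"{target.stem}_swapped{target.suffix}"
    return [
        "python", "facefusion.py", "headless-run",
        "--source-paths", str(source),
        "--target-path", str(target),
        "--output-path", str(output),
        "--processors", "face_swapper", "face_enhancer",
        "--face-swapper-model", "hyperswap_1a_256",
        "--face-enhancer-model", "gfpgan_1.4",
        "--execution-providers", "cuda",
    ]


if __name__ == "__main__":
    # "targets" is a hypothetical folder of clips; commands are printed only.
    for clip in sorted(Path("targets").glob("*.mp4")):
        print(" ".join(headless_command("source.jpg", clip, "output")))
        # To execute instead: subprocess.run(command, check=True)
```

Printing the commands first makes it easy to review the queue before committing to a long run.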

Common Problems and Fixes

Most FaceFusion issues come down to three things: wrong execution provider, low-quality source input, or a setting mismatch between your hardware and the processing mode. Here are the most common ones and how to fix them.

Face not Being Detected

Cause: The source or target photo has the face at an extreme angle, too small in the frame, or partially obstructed.

Fix: Add 90 degrees to the face detector angles setting if faces are turning sideways. Enable the face debugger to see exactly what is being detected. For video, try a frame where the face is clearly visible and front-facing.

Output Looks Blurry or has Visible Artifacts

Cause: The face swapper model is running at too low a resolution, or no face enhancer is enabled.

Fix: Enable the gfpgan_1.4 face enhancer. Increase pixel boost to 512x512 or 768x768. Switch from hyperswap_1a_256 to inswapper_128_fp16 for higher quality at the cost of speed.

Processing is Very Slow

Cause: The wrong execution provider is selected, or the thread count is not optimized for your hardware.

Fix: Verify the execution provider matches your hardware (CUDA for Nvidia, CoreML for Apple Silicon). Increase thread count: 10-16 for Apple Silicon, 2x GPU GB for Nvidia. Running multiple frame processors simultaneously (face swapper + face enhancer + expression restorer) multiplies processing time.

Output Path Error

Cause: The output path contains spaces or does not exist.

Fix: Use an output path with no spaces. Create the output directory before running if it does not already exist. FaceFusion does not create new directories automatically.
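Both conditions in the fix can be checked before a render starts. This sketch rejects paths that contain spaces and creates the directory if it is missing. The function name is illustrative and not part of FaceFusion.

```python
from pathlib import Path


def prepare_output_dir(path):
    """Reject paths with spaces, then create the directory if needed."""
    if " " in str(path):
        raise ValueError(f"output path contains spaces: {path!r}")
    out = Path(path)
    out.mkdir(parents=True, exist_ok=True)  # no error if it already exists
    return out


if __name__ == "__main__":
    print(prepare_output_dir("output/renders"))
```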

Green Lines or Color Artifacts in Video Output

Cause: RGB to YUV color conversion issue, or a video codec compatibility problem.

Fix: Add libx264rgb to your output video encoder options. If the target video was modified by QuickTime or another editor, re-encode it first with ffmpeg: ffmpeg -i input.mp4 -c:v libx264 -c:a aac fixed_input.mp4
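If several clips need the same re-encode, the ffmpeg call from the fix can be wrapped in a small helper. The sketch below builds exactly that command and runs it only if ffmpeg is found on your PATH. The file names are the placeholders used in the fix.

```python
import shutil
import subprocess


def reencode_command(src, dst):
    """Build the ffmpeg re-encode command given in the fix above."""
    return ["ffmpeg", "-i", src, "-c:v", "libx264", "-c:a", "aac", dst]


def reencode(src, dst):
    """Run the re-encode, failing early if ffmpeg is not installed."""
    if shutil.which("ffmpeg") is None:
        raise RuntimeError("ffmpeg was not found on PATH")
    subprocess.run(reencode_command(src, dst), check=True)


if __name__ == "__main__":
    print(" ".join(reencode_command("input.mp4", "fixed_input.mp4")))
```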

Wrong Face Being Swapped in Multi-Person Video

Cause: Face selector mode is set to One or Many when Reference mode is needed.

Fix: Switch to Reference mode and click the specific face you want to swap in the reference face panel. Use the face debugger to verify the selection before running the full render.

Frequently Asked Questions

What hardware do I need to run FaceFusion?

FaceFusion runs on any modern computer with Python 3.12 and conda installed. For practical video processing speed, an Nvidia GPU with at least 4GB VRAM or Apple Silicon (M1 or newer) is strongly recommended. CPU-only processing works but is significantly slower — a 30-second video that takes 3 minutes on an Nvidia GPU can take 30+ minutes on CPU alone.

Is FaceFusion free?

Yes. FaceFusion is completely free and open source under its own license. There is no subscription, no credit system, and no watermark. Processing happens entirely on your own hardware, and tools like Pinokio can simplify installation if you would rather avoid the manual setup.

What is the difference between the face swapper models?

hyperswap_1a_256 is the default and best starting point — good quality with reasonable processing speed. inswapper_128_fp16 produces the highest quality output but takes significantly longer. The ghost models offer an alternative approach that works better on some skin tones. For most use cases, start with hyperswap_1a_256 and only switch to inswapper_128_fp16 for final renders where quality is critical.

Why does the face swap look unnatural?

The most common causes are no face enhancer enabled, mismatched lighting between source and target, source photo at an angle or with obstructions, and pixel boost set too low. The single most effective fix for unnatural-looking swaps is enabling the gfpgan_1.4 face enhancer at a blend level of 80-90. Enabling the expression restorer processor also helps on footage with significant facial movement.

Can I use FaceFusion without a GPU?

Yes. Set the execution provider to CPU during installation (--onnxruntime default) and in the GUI. Processing will work correctly, but will be much slower. For image face swap, CPU-only is practical. For video, particularly anything over 10-15 seconds, GPU processing is strongly recommended for reasonable turnaround times.

What is FaceFusion headless mode?

Headless mode runs FaceFusion entirely from the command line without opening the GUI. It is used for batch processing multiple files, running FaceFusion on a remote server, or integrating face swap into an automated workflow. Use python facefusion.py headless-run with the appropriate arguments. See the advanced features section above for an example command.

What is the difference between FaceFusion and Magic Hour?

FaceFusion runs locally on your own hardware — nothing is uploaded, it is free, and quality can be very high with the right settings. It requires technical setup, including Python, conda, and command-line comfort. Magic Hour is a browser-based tool that requires no installation, works on any device, including mobile, and offers a free plan with no watermark.

Magic Hour is the right choice for creators who want a fast, accessible face swap without setup. FaceFusion is the right choice for privacy-first workflows, technical users, and anyone who wants maximum control over processing parameters.

Want face swap without the setup?

Magic Hour does face swap for photos and videos in your browser. No installation, no GPU required, no watermark on the free plan. 400 free credits, no credit card needed.
